NOAA Loses 1M km2 of Arctic Ice in July

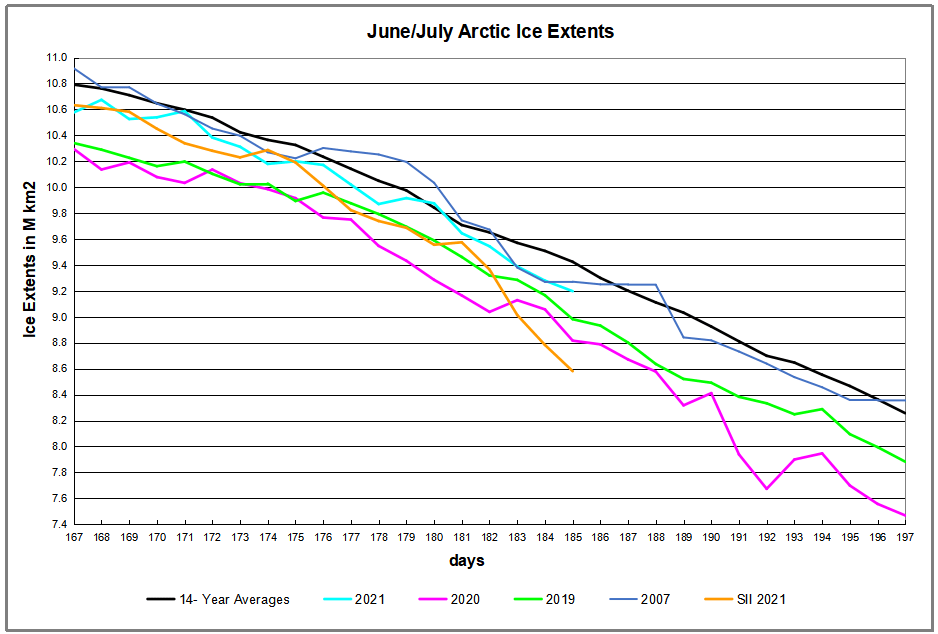

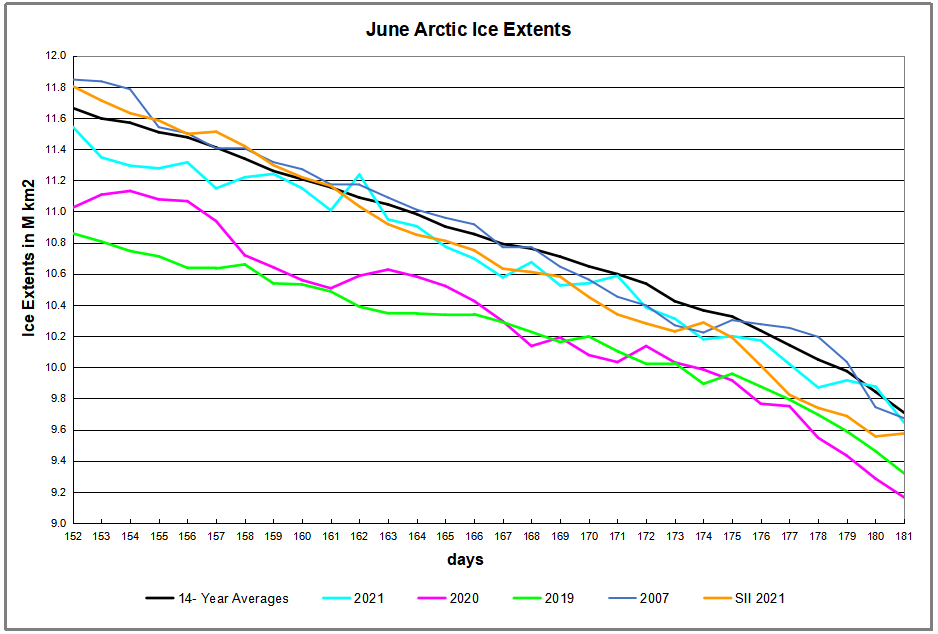

NOAA’s Sea Ice Index (shown in orange above) dramatically lost 1M km2 of Arctic sea ice extent in just the last four days. Meanwhile, MASIE from the National Ice Center (NIC) (cyan) declined much less, ~400k km2, a more typical decline; the two datasets were nearly the same on June 30, 2021. Note that extreme drops in ice extent can happen in July, as seen last year (purple line after day 190), so the issue bears watching. Satellites have a history of difficulty discriminating between open water and surface melt water during both the melting and refreezing seasons. A background post below explains the differences between the two datasets.
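To put the two declines on the same footing, here is a minimal sketch that converts them to average daily losses. It uses only the round figures quoted above (1M km2 and ~400k km2 over four days); the function name `mean_daily_loss_km2` is my own, not something from either dataset's tools.

```python
def mean_daily_loss_km2(total_loss_km2, days):
    """Average extent lost per day over a period of `days` days."""
    if days <= 0:
        raise ValueError("days must be positive")
    return total_loss_km2 / days


DAYS = 4  # "the last four days" from the text

# Round figures quoted in the post, not values read from the datasets.
sii_rate = mean_daily_loss_km2(1_000_000, DAYS)
masie_rate = mean_daily_loss_km2(400_000, DAYS)
ratio = sii_rate / masie_rate

print(f"SII:   {sii_rate:,.0f} km2/day")
print(f"MASIE: {masie_rate:,.0f} km2/day")
print(f"SII lost ice {ratio:.1f} times as fast as MASIE")
```

On these rounded numbers, SII shed ice about two and a half times as fast as MASIE over the same four days.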

Background from previous post: Support MASIE Arctic Ice Dataset

MASIE: “high-resolution, accurate charts of ice conditions”

Walt Meier, NSIDC, October 2015 article in Annals of Glaciology.

Update February 4, 2017 Below

The home page for MASIE (here) invites visitors to show their interest in the dataset and analysis tools since continued funding is not assured. The page says:

NSIDC has received support to develop MASIE but not to maintain MASIE. We are actively seeking support to maintain the Web site and products over the long term. If you find MASIE helpful, please let us know with a quick message to NSIDC User Services.

For the reasons below, I hope people will go there and express their support.

1. MASIE is Rigorous.

Note on Sea Ice Resolution:

Northern Hemisphere Spatial Coverage

Sea Ice Index (SII) from NOAA is based on 25 km cells and 15% ice coverage. That means if a 25 × 25 km grid cell (625 km2) is estimated to have at least 15% ice, then 625 km2 is added to the total extent. In the mapping details, grid cell areas vary between 382 and 664 km2 with latitude. And the satellites’ Field of View (FOV) is actually an ellipse ranging from 486 to 3330 km2 depending on the channel and frequency. More info is here.

MASIE is based on 4 km cells and 40% ice coverage. Thus, for MASIE estimates, if a grid cell is deemed to have at least 40% ice, then 16 km2 is added to the total extent.

The significantly higher resolution in MASIE means that any error in detecting ice cover at the threshold level affects only 16 km2 in the MASIE total, compared to a variation of at least 600 km2 in SII. A few dozen SII cells falling below the 15% threshold are reported as a sizable loss of ice in the Arctic.
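The threshold arithmetic can be sketched in a few lines. This is only an illustration of the counting rule described above, using the nominal cell sizes (625 km2 and 16 km2) and ignoring the variation of cell area with latitude. The function names and the count of 36 cells (standing in for "a few dozen") are my own assumptions, not part of either product's processing code.

```python
SII_CELL_KM2 = 25 * 25     # nominal SII cell area, 625 km2
SII_THRESHOLD = 0.15       # SII counts a cell with at least 15% ice
MASIE_CELL_KM2 = 4 * 4     # nominal MASIE cell area, 16 km2
MASIE_THRESHOLD = 0.40     # MASIE counts a cell with at least 40% ice


def ice_extent_km2(concentrations, cell_area_km2, threshold):
    """Add the full cell area for every cell at or above the threshold."""
    return sum(cell_area_km2 for c in concentrations if c >= threshold)


def extent_change_km2(cells_flipped, cell_area_km2):
    """Extent removed when `cells_flipped` cells drop below the threshold."""
    return cells_flipped * cell_area_km2


# One SII cell estimated at 16% ice versus 14% ice (hypothetical values):
counted = ice_extent_km2([0.16], SII_CELL_KM2, SII_THRESHOLD)
dropped = ice_extent_km2([0.14], SII_CELL_KM2, SII_THRESHOLD)
print(counted - dropped)   # 625: a 2-point change removes the whole cell

# "A few dozen" cells crossing the threshold, taken here as 36:
print(extent_change_km2(36, SII_CELL_KM2))     # 22500
print(extent_change_km2(36, MASIE_CELL_KM2))   # 576
```

The same number of threshold misjudgments moves the SII total roughly forty times as much as the MASIE total, simply because each SII cell carries about forty times the area.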

2. MASIE is Reliable.

MASIE is an operational ice product developed from multiple sources to provide the most accurate possible description of Arctic ice for the sake of ships operating in the region.

Operational analyses combine a variety of remote-sensing inputs and other sources via manual integration to create high-resolution, accurate charts of ice conditions in support of navigation and operational forecast models. One such product is the daily Multisensor Analyzed Sea Ice Extent (MASIE). The higher spatial resolution along with multiple input data and manual analysis potentially provide more precise mapping of the ice edge than passive microwave estimates. From Meier et al., link below.

Some people have latched onto a line from the NSIDC background page:

Use the Sea Ice Index when comparing trends in sea ice over time or when consistency is important. Even then, the monthly, not the daily, Sea Ice Index views should be used to look at trends in sea ice. The Sea Ice Index documentation explains how linear regression is used to say something about trends in ice extent, and what the limitations of that method are. Use MASIE when you want the most accurate view possible of Arctic-wide ice on a given day or through the week.

That statement was not updated to reflect recent developments:

“In June 2014, we decided to make the MASIE product available back to 2006. This was done in response to user requests, and because the IMS product output, upon which MASIE is based, appeared to be reasonably consistent.”

The fact that MASIE employs human judgment is discomforting to climatologists as a potential source of error, so Meier and others prefer that the analysis be done by computer algorithms. Yet, as we shall see, the computer programs are themselves human inventions and when applied uncritically by machines produce errors of their own.

3. MASIE serves as Calibration for satellite products.

The NSIDC Background cites as support a study by Partington et al (2003). Reading that study, one finds that the authors preferred the MASIE data and said this:

“Passive microwave sensors from the U.S. Defense Meteorological Satellite Program have long provided a key source of information on Arctic-wide sea ice conditions, but suffer from some known deficiencies, notably a tendency to underestimate ice concentrations in summer. With the recent release of digital and quality controlled ice charts extending back to 1972 from the U.S. National Ice Center (NIC), there is now an alternative record of late twentieth century Northern Hemisphere sea ice conditions to compare with the valuable, but imperfect, passive microwave sea ice record.”

“This analysis has been based on ice chart data rather than the more commonly analyzed passive microwave derived ice concentrations. Differences between the NIC ice chart sea ice record and the passive microwave sea ice record are highly significant despite the fact that the NIC charts are semi-dependent on the passive microwave data, and it is worth noting these differences. . .In summer, the difference between the two sources of data rises to a maximum of 23% peaking in early August, equivalent to ice coverage the size of Greenland.” (my bold) For clarity: the ice chart data show higher extents than passive microwave data.

The differences are even greater for Canadian regions.

“More than 1380 regional Canadian weekly sea-ice charts for four Canadian regions and 839 hemispheric U.S. weekly sea-ice charts from 1979 to 1996 are compared with passive microwave sea-ice concentration estimates using the National Aeronautics and Space Administration (NASA) Team algorithm. Compared with the Canadian regional ice charts, the NASA Team algorithm underestimates the total ice-covered area by 20.4% to 33.5% during ice melt in the summer and by 7.6% to 43.5% during ice growth in the late fall.”

From: The Use of Operational Ice Charts for Evaluating Passive Microwave Ice Concentration Data, Agnew and Howell http://www.tandfonline.com/doi/pdf/10.3137/ao.410405

More recently, Walter Meier, who is in charge of SII, and several colleagues compared SII and MASIE and published their findings in October 2015 (here). The purpose of the analysis was stated thus:

Our comparison is not meant to be an extensive validation of either product, but to illustrate as guidance for future use how the two products behave in different regimes.

The abstract concludes:

Comparisons indicate that MASIE shows higher Arctic-wide extent values throughout most of the year, largely because of the limitations of passive microwave sensors in some conditions (e.g. surface melt). However, during some parts of the year, MASIE tends to indicate less ice than estimated by passive microwave sensors. These comparisons yield a better understanding of operational and research sea-ice data products; this in turn has important implications for their use in climate and weather models.

A more extensive comparison of MASIE from NIC and SII from NOAA is here.

4. MASIE continues a long history of Arctic Ice Charts.

Naval authorities have for centuries prepared ice charts for the safety of ships operating in the Arctic. There are Russian, Danish, Norwegian, and Canadian charts, in addition to MASIE, the US version. These estimates rely on multiple sources of data, including the NASA reports. Charts are made with no climate ax to grind, only to get accurate locations and extents of Arctic ice each day.

Figure 16-3: Time series of April sea-ice extent in Nordic Sea (1864-1998) given by 2-year running mean and second-order polynomial curves. Top: Nordic Sea; middle: eastern area; bottom: western area (after Vinje, 2000). IPCC Third Assessment Report

Since these long-term records show a quasi-60 year cycle in ice extents, it is vital to have a modern dataset based on the same methodology, albeit with sophisticated modern tools.

Summary

Measuring anything in the Arctic is difficult, and especially sea ice that is constantly moving around. It is a good thing to have independent measures using different methodologies, since any estimate is prone to error.

Please take the time to express your appreciation for NIC’s contribution and your support for their products at the MASIE home page.

Update February 4, 2017

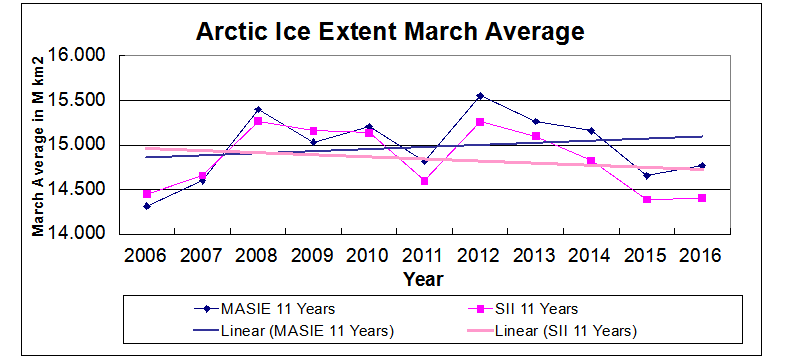

In the comments Neven said MASIE was unusable because it was biased low before 2010 and high afterward. I have looked into that and he is mistaken. Below is the pattern that is observed most months. March is the annual maximum and coming up soon.

As the graph shows, the two datasets were aligned through 2010, and then SII began underestimating ice extent, resulting in a negative 11-year trend. MASIE shows the same fluctuations, but with higher extents and a slightly positive trend for March extents. The satellite sensors have a hard time with mixed ice/water conditions (well-documented).
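For readers who want to check a trend like this themselves, here is a minimal sketch of an ordinary least-squares fit, the kind of linear regression the Sea Ice Index documentation refers to. The numbers are toy placeholders chosen to make the arithmetic obvious. They are NOT March extents from SII or MASIE, and the function name `linear_trend` is my own.

```python
def linear_trend(years, values):
    """Ordinary least-squares slope and intercept of values against years."""
    n = len(years)
    if n != len(values) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    if sxx == 0:
        raise ValueError("years must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Toy placeholder series (NOT real data): a steady drop of 0.1 per step.
toy_years = [0, 1, 2, 3, 4]
toy_extents = [10.0, 9.9, 9.8, 9.7, 9.6]

slope, intercept = linear_trend(toy_years, toy_extents)
print(f"trend: {slope:+.3f} per year")
```

Feeding in the actual March averages from each dataset for the same years would give the two slopes being compared in the graph. A fit over only 11 points is sensitive to the first and last years, so the sign of a small slope should be read with caution.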

More on the two datasets: NOAA has been Losing Arctic Ice

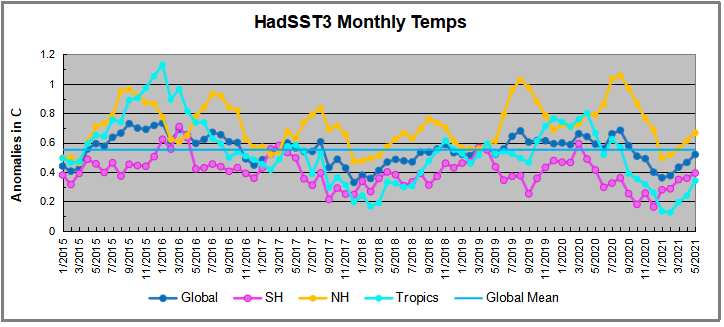

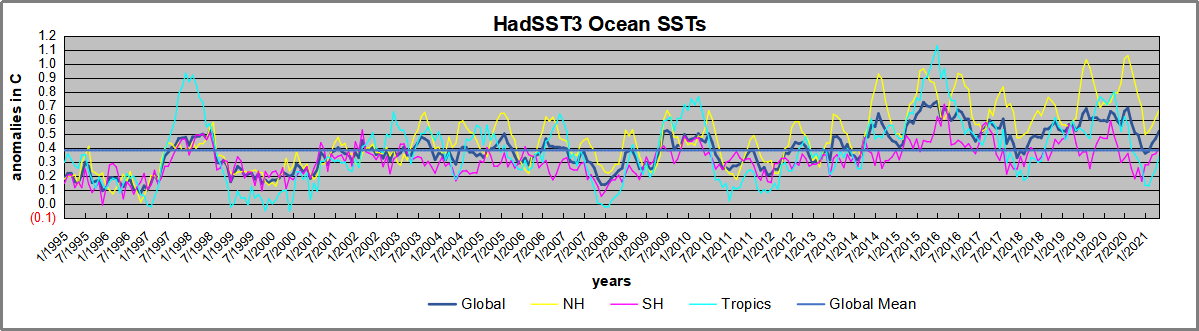

1995 is a reasonable (ENSO neutral) starting point prior to the first El Nino. The sharp Tropical rise peaking in 1998 is dominant in the record, starting Jan. ’97 to pull up SSTs uniformly before returning to the same level Jan. ’99. For the next 2 years, the Tropics stayed down, and the world’s oceans held steady around 0.2C above 1961 to 1990 average.

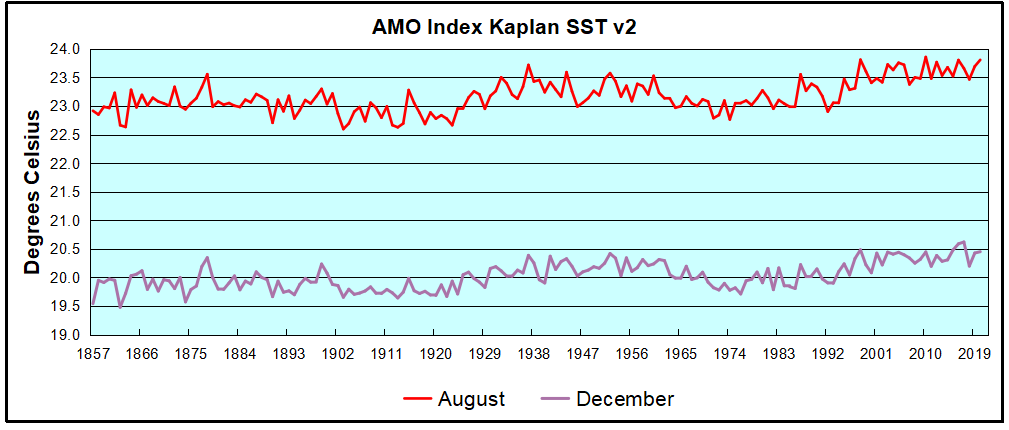

The AMO Index is from Kaplan SST v2, the unaltered and not detrended dataset. By definition, the data are monthly average SSTs interpolated to a 5×5 grid over the North Atlantic, basically 0 to 70N. The graph shows August warming began after 1992 and continued up to 1998, with a series of matching years since, including 2020. Because the N. Atlantic has partnered with the Pacific ENSO recently, let’s take a closer look at some AMO years in the last 2 decades.

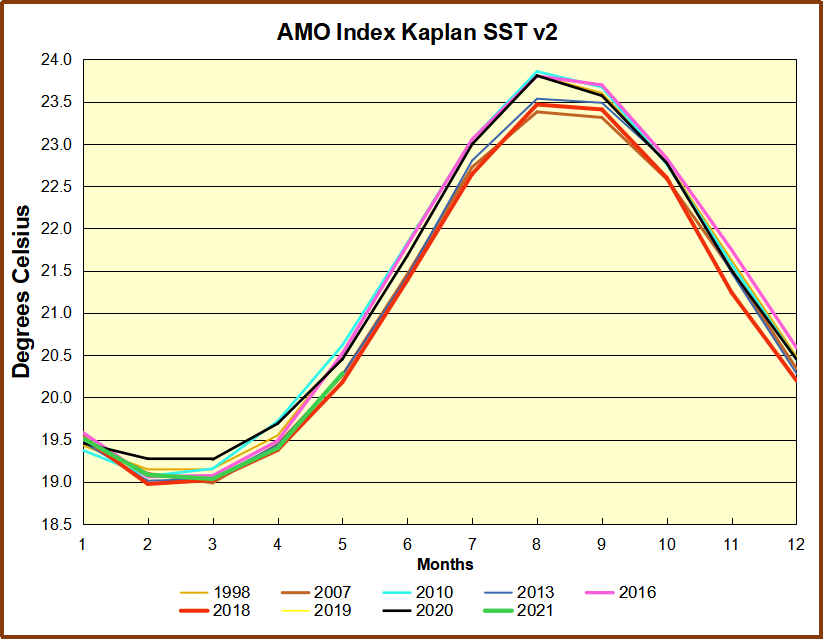

This graph shows monthly AMO temps for some important years. The peak years were 1998, 2010 and 2016, with the latter emphasized as the most recent. The other years show lesser warming, with 2007 emphasized as the coolest in the last 20 years. Note the red 2018 line is at the bottom of all these tracks. The black line shows that 2020 began slightly warm, then set records for 3 months, then dropped below 2016 and 2017, and peaked in August, ending below 2016. Now in 2021, AMO is tracking the coldest years.
