In December 2014, Willis Eschenbach posted GMT series generated by 42 CMIP5 models, along with HADCRUT4 series, all obtained from KNMI.

CMIP5 Model Temperature Results in Excel

The dataset includes a single run showing GMT from each of 42 CMIP5 models. Each model estimates monthly global mean temperatures in kelvins, backwards to 1861 and forwards to 2101, a period of 240 years. The dataset from the CMIP5 models includes 145 years of history to 2005, and 95 years of projections from 2006 onward.

The estimated global mean temperatures are considered to be an emergent property generated by the model. Thus it is of interest to compare them to measured surface temperatures. The models produce variability year over year, and on decadal and centennial scales.

These models can be thought of as 42 “proxies” for global mean temperature change. Without knowing what parameters and assumptions were used in each case, we can still make observations about the models’ behavior, without assuming that any model is typical of the actual climate. Also the central tendency tells us something about the set of models, without necessarily being descriptive of the real world.

What temperatures are projected by the average model?

| Periods | HADCRUT4 | ALL SERIES | ALL MINUS HADCRUT4 |
| --- | --- | --- | --- |
| 1850-1878 | 0.035 | 0.051 | 0.016 |
| 1878-1915 | -0.052 | 0.024 | 0.076 |
| 1915-1944 | 0.143 | 0.050 | -0.093 |
| 1944-1976 | -0.040 | -0.008 | 0.032 |
| 1976-1998 | 0.194 | 0.144 | -0.050 |
| 1998-2014 | 0.053 | 0.226 | 0.173 |
| 1850-2014 | 0.049 | 0.060 | 0.011 |

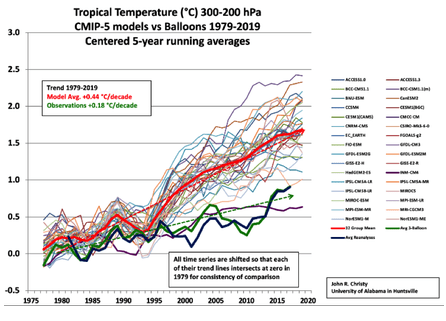

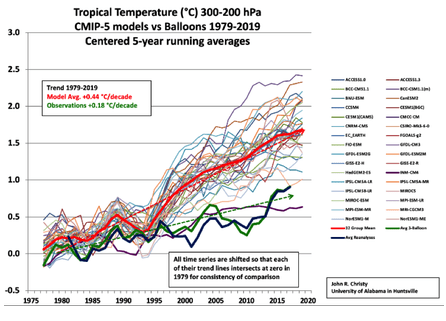

The rates in the table are in C/decade. Over the entire 240-year time series, the average model has a warming trend of 1.26C per century. This compares to the UAH global trend of 1.38C per century, measured by satellites since 1979.
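The post does not say how the trends were calculated, so the following is only a minimal sketch, assuming an ordinary least-squares fit to the monthly values. The function name `trend_per_decade` and the synthetic series are illustrative assumptions, not part of the workbook.

```python
def trend_per_decade(monthly_values):
    """Ordinary least-squares slope of a monthly series, in units per decade."""
    n = len(monthly_values)
    if n < 2:
        raise ValueError("need at least two monthly values")
    # Time axis in years, one step per month.
    t = [i / 12.0 for i in range(n)]
    t_mean = sum(t) / n
    y_mean = sum(monthly_values) / n
    sxy = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, monthly_values))
    sxx = sum((ti - t_mean) ** 2 for ti in t)
    return 10.0 * sxy / sxx  # slope per year -> slope per decade


# Synthetic series (not real model output): rises exactly 0.0126 K per year
# for 240 years, i.e. the 1.26C per century quoted for the average model.
synthetic = [287.0 + 0.0126 * (i / 12.0) for i in range(240 * 12)]
per_decade = trend_per_decade(synthetic)
print(round(per_decade, 3), "per decade;", round(per_decade * 10, 2), "per century")
```

Multiplying a C/decade rate by 10 gives the C/century figures used in the text, which is how the table values and the prose relate.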

However, over the same period as UAH, the average model shows a rate of 2.15C/century. Moreover, for the 30 years from 2006 to 2035, the warming rate is projected at 2.28C/century. These estimates contrast with the 145 years of history in the models, where the trend is 0.41C per century.

Clearly, the CMIP5 models are programmed so that the future warms at more than 5 times the rate of the past.
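As a quick check of that ratio, using only the rates quoted above (2.28C/century projected for 2006 to 2035, 2.15C/century over the UAH period, and 0.41C/century for the 145 years of model history):

$$\frac{2.28}{0.41} \approx 5.6, \qquad \frac{2.15}{0.41} \approx 5.2$$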

Is one model better than the others?

In presenting the CMIP5 dataset, Willis raised a question about which of the 42 models could be the best one. I put the issue this way: Does one of the CMIP5 models reproduce the temperature history convincingly enough that its projections should be taken seriously?

I identified the models that produced a historical trend of nearly 0.5K/century over the 145-year period, and those whose trend from 1861 to 2014 was in the same range. Then I looked to see which of that subset could match the UAH trend from 1979 to 2014.
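The two-step screening described here can be sketched roughly as follows. This is an illustration under stated assumptions, not the procedure actually used in the workbook: the function `screen_models`, the tolerance of 0.1K/century, and the three model entries are all hypothetical placeholders.

```python
def screen_models(trends, target, tolerance):
    """Return names of models whose trend lies within `tolerance` of `target`."""
    return sorted(name for name, value in trends.items()
                  if abs(value - target) <= tolerance)


# Hypothetical trends in K/century; placeholders, not values from the workbook.
historical = {"model_a": 0.48, "model_b": 0.95, "model_c": 0.55}
satellite_era = {"model_a": 1.30, "model_b": 2.40, "model_c": 2.10}

# Step 1: keep models near 0.5 K/century over the historical period.
# The tolerance is an assumption; the post only says "nearly 0.5K/century".
shortlist = screen_models(historical, target=0.5, tolerance=0.1)

# Step 2: rank the shortlist by distance from the UAH trend
# (1.38 K/century, as quoted in the post).
uah = 1.38
ranked = sorted(shortlist, key=lambda name: abs(satellite_era[name] - uah))
print(ranked)
```

Screening on the long historical trend first and the satellite-era trend second mirrors the order of the comparisons in the text; a different order or tolerance could produce a different shortlist.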

Out of these comparisons the best performance was Series 31, which Willis confirms is output from the INMCM4 model. Rates in the table below are C/decade.

| Periods | HADCRUT4 | SERIES 31 | 31 MINUS HADCRUT4 |
| --- | --- | --- | --- |
| 1850-1878 | 0.035 | 0.036 | 0.001 |
| 1878-1915 | -0.052 | -0.011 | 0.041 |
| 1915-1944 | 0.143 | 0.099 | -0.044 |
| 1944-1976 | -0.040 | 0.056 | 0.096 |
| 1976-1998 | 0.194 | 0.098 | -0.096 |
| 1998-2014 | 0.053 | 0.125 | 0.072 |
| 1850-2014 | 0.049 | 0.052 | 0.003 |
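The difference columns in both tables can be recomputed from the other two columns. The sketch below does that for the six sub-periods, using only the rates printed in the tables. The mean absolute difference at the end is an extra summary added here for illustration; it is not a statistic from the original analysis.

```python
# Rates in C/decade, copied from the two tables (six sub-periods, in order).
hadcrut4 = [0.035, -0.052, 0.143, -0.040, 0.194, 0.053]
ensemble = [0.051, 0.024, 0.050, -0.008, 0.144, 0.226]
series_31 = [0.036, -0.011, 0.099, 0.056, 0.098, 0.125]


def differences(model, observed):
    """Model minus observed, rounded to the three decimals used in the tables."""
    return [round(m - o, 3) for m, o in zip(model, observed)]


def mean_abs(values):
    """Mean absolute value of a list of numbers."""
    return sum(abs(v) for v in values) / len(values)


ensemble_diff = differences(ensemble, hadcrut4)
series_31_diff = differences(series_31, hadcrut4)

print(ensemble_diff)   # should reproduce the ALL MINUS HADCRUT4 column
print(series_31_diff)  # should reproduce the 31 MINUS HADCRUT4 column
print(round(mean_abs(ensemble_diff), 3), round(mean_abs(series_31_diff), 3))
```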

Note that this model closely matches HADCRUT4 over 60-year periods, but shows variances over 30-year periods. That is, shorter periods of warming in HADCRUT4 run less warm in the model, and shorter periods of cooling in HADCRUT4 run flat or slightly warming in the model. Over 60 years the differences offset.

The model shows warming of 0.52K/century from 1861 to 2014, with a plateau from 2006 to 2014, and 0.91K/century from 1979 to 2014. It projects 1.0K/century from 2006 to 2035 and 1.35K/century from now to 2101. Those forward projections are much lower than the consensus claims, and not at all alarming.

In contrast with Series 31, the other 41 models typically match the historical warming rate of 0.05C/decade by accelerating warming from 1976 onward and projecting it into the future. For example, while UAH shows warming of 0.14C/decade from 1979 to 2014, the CMIP5 model estimates average 0.215C/decade, ranging from 0.088 to 0.324C/decade.

For the next future climate period, 2006-2035, CMIP5 models project an average warming of 0.28C/decade, ranging from 0.097 to 0.375C/decade.

The longer the plateau continues, the more overheated are these projections by the models.

What’s different about the best model?

Above, I showed how one CMIP5 model produced historical temperature trends closely comparable to HADCRUT4. That same model, INMCM4, was also closest to Berkeley Earth and RSS series.

Curious about what makes this model different from the others, I consulted several comparative surveys of CMIP5 models. There appear to be 3 features of INMCM4 that differentiate it from the others.

1. INMCM4 has the lowest CO2 forcing response at 4.1K for 4xCO2. That is 37% lower than the multi-model mean.

2. INMCM4 has by far the highest climate system inertia: deep ocean heat capacity in INMCM4 is 317 W yr m^-2 K^-1, 200% of the mean (which excluded INMCM4 because it was such an outlier).

3. INMCM4 exactly matches observed atmospheric H2O content in the lower troposphere (215 hPa), and is biased low above that. Most others are biased high.
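For context, the first two percentages imply rough values for the multi-model means. This is simple arithmetic on the figures quoted above, assuming "37% lower" is measured relative to the multi-model mean and "200% of the mean" means twice the mean:

$$\frac{4.1\ \text{K}}{1 - 0.37} \approx 6.5\ \text{K}, \qquad \frac{317}{2} \approx 158.5\ \text{W yr m}^{-2}\ \text{K}^{-1}$$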

So the model that most closely reproduces the temperature history has high inertia from ocean heat capacities, low forcing from CO2 and less water for feedback. Why aren’t the other models built like this one?

Conclusions:

In the real world, temperatures go up and down. This is also true of HADCRUT4. In the world of climate models, temperatures only go up. There is some variation in rates of warming, but it is always warming nonetheless.

Not all models are created equal, and the ensemble average is far from reality and projects unreasonable rates of future warming. It would be much better to take the best model and build upon its success.

Excel workbook is here: CMIP5 VS hADCRUT