Climate Model Upgraded: INMCM5 Under the Hood

2018 Update: Best Climate Model INMCM5 October 22, 2018 (follow link to latest info on INMCM5)

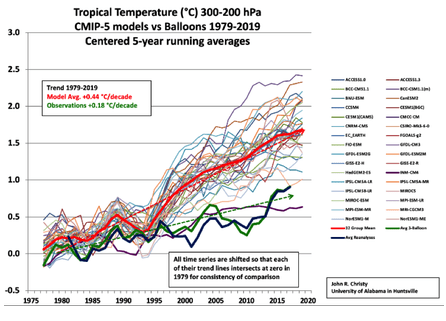

A previous analysis, "Temperatures According to Climate Models", showed that only one of 42 CMIP5 models was close to hindcasting past temperature fluctuations. That model was INMCM4, which also projected an unalarming 1.4 °C warming to the end of the century, in contrast to the other models, which are programmed for future warming five times that of the past.

In a recent comment thread, someone asked what has been done recently with that model, given that it appears to be “best of breed.” So I went looking and this post summarizes further work to produce a new, hopefully improved version by the modelers at the Institute of Numerical Mathematics of the Russian Academy of Sciences.

Earlier this year came the publication "Simulation of the present-day climate with the climate model INMCM5" by E.M. Volodin et al. Excerpts are below, with my bolds.

In this paper we present the fifth generation of the INMCM climate model that is being developed at the Institute of Numerical Mathematics of the Russian Academy of Sciences (INMCM5). The most important changes with respect to the previous version (INMCM4) were made in the atmospheric component of the model. Its vertical resolution was increased to resolve the upper stratosphere and the lower mesosphere. A more sophisticated parameterization of condensation and cloudiness formation was introduced as well. An aerosol module was incorporated into the model. The upgraded oceanic component has a modified dynamical core optimized for better implementation on parallel computers and has two times higher resolution in both horizontal directions.

Analysis of the present-day climatology of the INMCM5 (based on the data of historical run for 1979–2005) shows moderate improvements in reproduction of basic circulation characteristics with respect to the previous version. Biases in the near-surface temperature and precipitation are slightly reduced compared with INMCM4 as well as biases in oceanic temperature, salinity and sea surface height. The most notable improvement over INMCM4 is the capability of the new model to reproduce the equatorial stratospheric quasi-biennial oscillation (QBO) and statistics of sudden stratospheric warmings (SSWs).

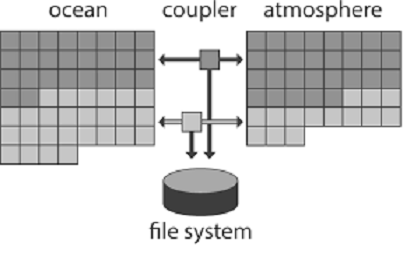

The family of INMCM climate models, as most climate system models, consists of two main blocks: the atmosphere general circulation model, and the ocean general circulation model. The atmospheric part is based on the standard set of hydrothermodynamic equations with hydrostatic approximation written in advective form. The model prognostic variables are wind horizontal components, temperature, specific humidity and surface pressure.

Atmosphere Module

The INMCM5 borrows most of the atmospheric parameterizations from its previous version. One of the few notable changes is the new parameterization of clouds and large-scale condensation. In the INMCM5 cloud area and cloud water are computed prognostically according to Tiedtke (1993). That includes the formation of large-scale cloudiness as well as the formation of clouds in the atmospheric boundary layer and clouds of deep convection. Decrease of cloudiness due to mixing with unsaturated environment and precipitation formation are also taken into account. Evaporation of precipitation is implemented according to Kessler (1969).

In the INMCM5 the atmospheric model is complemented by the interactive aerosol block, which is absent in the INMCM4. Concentrations of coarse and fine sea salt, coarse and fine mineral dust, SO2, sulfate aerosol, hydrophilic and hydrophobic black and organic carbon are all calculated prognostically.

Ocean Module

The oceanic module of the INMCM5 uses generalized spherical coordinates. The model “South Pole” coincides with the geographical one, while the model “North Pole” is located in Siberia beyond the ocean area to avoid numerical problems near the pole. Vertical sigma-coordinate is used. The finite-difference equations are written using the Arakawa C-grid. The differential and finite-difference equations, as well as methods of solving them, can be found in Zalesny et al. (2010).

The INMCM5 uses explicit schemes for advection, while the INMCM4 used schemes based on splitting upon coordinates. Also, the iterative method for solving linear shallow water equation systems is used in the INMCM5 rather than direct method used in the INMCM4. The two previous changes were made to improve model parallel scalability. The horizontal resolution of the ocean part of the INMCM5 is 0.5 × 0.25° in longitude and latitude (compared to the INMCM4’s 1 × 0.5°).

Both the INMCM4 and the INMCM5 have 40 levels in vertical. The parallel implementation of the ocean model can be found in Terekhov et al. (2011). The oceanic block includes vertical mixing and isopycnal diffusion parameterizations (Zalesny et al. 2010). Sea ice dynamics and thermodynamics are parameterized according to Iakovlev (2009). Assumptions of elastic-viscous-plastic rheology and single ice thickness gradation are used. The time step in the oceanic block of the INMCM5 is 15 min.
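To put the resolution change in perspective, here is a rough back-of-envelope sketch in Python. It assumes a uniform global longitude-latitude grid, which is a simplification: the real model uses generalized spherical coordinates with a displaced pole and only covers ocean points, so the actual cell counts will differ. The function name `horizontal_cells` is mine, not the modelers'.

```python
def horizontal_cells(dlon_deg: float, dlat_deg: float) -> int:
    """Cell count on a uniform global lon-lat grid (a simplifying assumption)."""
    n_lon = round(360 / dlon_deg)
    n_lat = round(180 / dlat_deg)
    return n_lon * n_lat


LEVELS = 40  # both model versions use 40 vertical levels, per the text

# Resolutions quoted above: 1 x 0.5 degrees (INMCM4), 0.5 x 0.25 degrees (INMCM5)
inmcm4_cells = horizontal_cells(1.0, 0.5) * LEVELS
inmcm5_cells = horizontal_cells(0.5, 0.25) * LEVELS

# Doubling resolution in both horizontal directions quadruples the cell count
ratio = inmcm5_cells / inmcm4_cells

# A 15-minute ocean time step means this many steps per simulated day
steps_per_day = 24 * 60 // 15

print(f"INMCM4 cells (idealized): {inmcm4_cells:,}")
print(f"INMCM5 cells (idealized): {inmcm5_cells:,}")
print(f"Ratio: {ratio:.1f}x, ocean steps per day: {steps_per_day}")
```

On this idealized grid the ocean workload per time step grows fourfold, which helps explain why the modelers changed the advection scheme and the solver to improve parallel scalability.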

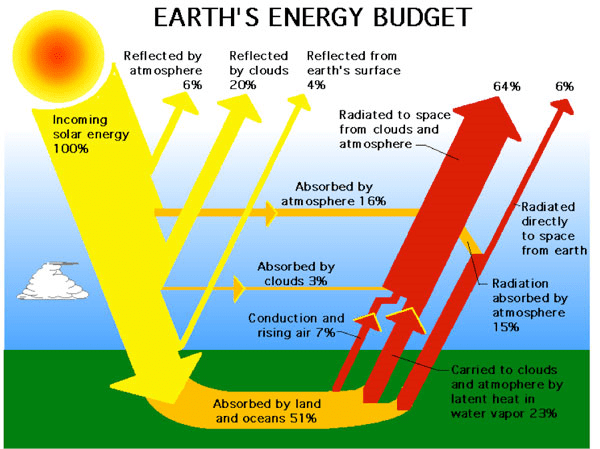

Note the size of the human emissions next to the red arrow.

Carbon Cycle Module

The climate model INMCM5 has a carbon cycle module (Volodin 2007), where atmospheric CO2 concentration, carbon in vegetation, soil and ocean are calculated. In soil, a single carbon pool is considered. In the ocean, the only prognostic variable in the carbon cycle is total inorganic carbon. Biological pump is prescribed. The model calculates methane emission from wetlands and has a simplified methane cycle (Volodin 2008). Parameterizations of some electrical phenomena, including calculation of ionospheric potential and flash intensity (Mareev and Volodin 2014), are also included in the model.

Surface Temperatures

When compared to the INMCM4 surface temperature climatology, the INMCM5 shows several improvements. Negative bias over continents is reduced mainly because of the increase in daily minimum temperature over land, which is achieved by tuning the surface flux parameterization. In addition, positive bias over southern Europe and eastern USA in summer typical for many climate models (Mueller and Seneviratne 2014) is almost absent in the INMCM5. A possible reason for this bias in many models is the shortage of soil water and suppressed evaporation leading to overestimation of the surface temperature. In the INMCM5 this problem was addressed by the increase of the minimum leaf resistance for some vegetation types.

Nevertheless, some problems migrate from one model version to the other: negative bias over most of the subtropical and tropical oceans, and positive bias over the Atlantic to the east of the USA and Canada. Root mean square (RMS) error of annual mean near surface temperature was reduced from 2.48 K in the INMCM4 to 1.85 K in the INMCM5.
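For readers unfamiliar with the RMS metric, the sketch below shows how such an error is computed and what the quoted reduction amounts to in relative terms. It is a simplified, unweighted version: evaluations over a global grid normally weight each cell by its area, and I am not claiming this is the exact procedure used in the paper. The toy temperature values are made up purely for illustration.

```python
import math


def rms_error(model, observed):
    """Unweighted root mean square difference between two equal-length series."""
    diffs = [m - o for m, o in zip(model, observed)]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def relative_reduction(old, new):
    """Fractional reduction going from old to new."""
    return (old - new) / old


# Made-up toy values (deg C) for three grid points, for illustration only
toy_model = [14.0, 15.0, 13.0]
toy_observed = [13.0, 15.0, 15.0]
print(f"Toy RMS error: {rms_error(toy_model, toy_observed):.2f} K")

# RMS errors quoted in the excerpt: 2.48 K (INMCM4) and 1.85 K (INMCM5)
print(f"Temperature RMS reduction: {relative_reduction(2.48, 1.85):.1%}")
```

By this arithmetic the annual mean surface temperature RMS error fell by about a quarter between the two versions.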

Precipitation

In mid-latitudes, the positive precipitation bias over the ocean prevails in winter while negative bias occurs in summer. Compared to the INMCM4, the biases over the western Indian Ocean, Indonesia, the eastern tropical Pacific and the tropical Atlantic are reduced. A possible reason for this is the better reproduction of the tropical sea surface temperature (SST) in the INMCM5 due to the increase of the spatial resolution in the oceanic block, as well as the new condensation scheme. RMS annual mean model bias for precipitation is 1.35 mm/day for the INMCM5 compared to 1.60 mm/day for the INMCM4.

Cloud Radiation Forcing

Cloud radiation forcing (CRF) at the top of the atmosphere is one of the most important climate model characteristics, as errors in CRF frequently lead to an incorrect surface temperature.

In the high latitudes model errors in shortwave CRF are small. The model underestimates longwave CRF in the subtropics but overestimates it in the high latitudes. Errors in longwave CRF in the tropics tend to partially compensate errors in shortwave CRF. Both errors have positive sign near 60S leading to warm bias in the surface temperature here. As a result, we have some underestimation of the net CRF absolute value at almost all latitudes except the tropics. Additional experiments with tuned conversion of cloud water (ice) to precipitation (for upper cloudiness) showed that model bias in the net CRF could be reduced, but that the RMS bias for the surface temperature will increase in this case.

A table from another paper provides the climate parameters described by INMCM5.

| Climate Parameters | Observations | INMCM3 | INMCM4 | INMCM5 |
| --- | --- | --- | --- | --- |
| Incoming solar radiation at TOA | 341.3 [26] | 341.7 | 341.8 | 341.4 |
| Outgoing solar radiation at TOA | 96–100 [26] | 97.5 ± 0.1 | 96.2 ± 0.1 | 98.5 ± 0.2 |
| Outgoing longwave radiation at TOA | 236–242 [26] | 240.8 ± 0.1 | 244.6 ± 0.1 | 241.6 ± 0.2 |
| Solar radiation absorbed by surface | 154–166 [26] | 166.7 ± 0.2 | 166.7 ± 0.2 | 169.0 ± 0.3 |
| Solar radiation reflected by surface | 22–26 [26] | 29.4 ± 0.1 | 30.6 ± 0.1 | 30.8 ± 0.1 |
| Longwave radiation balance at surface | –54 to 58 [26] | –52.1 ± 0.1 | –49.5 ± 0.1 | –63.0 ± 0.2 |
| Solar radiation reflected by atmosphere | 74–78 [26] | 68.1 ± 0.1 | 66.7 ± 0.1 | 67.8 ± 0.1 |
| Solar radiation absorbed by atmosphere | 74–91 [26] | 77.4 ± 0.1 | 78.9 ± 0.1 | 81.9 ± 0.1 |
| Direct heat flux from surface | 15–25 [26] | 27.6 ± 0.2 | 28.2 ± 0.2 | 18.8 ± 0.1 |
| Latent heat flux from surface | 70–85 [26] | 86.3 ± 0.3 | 90.5 ± 0.3 | 86.1 ± 0.3 |
| Cloud amount, % | 64–75 [27] | 64.2 ± 0.1 | 63.3 ± 0.1 | 69 ± 0.2 |
| Solar radiation-cloud forcing at TOA | –47 [26] | –42.3 ± 0.1 | –40.3 ± 0.1 | –40.4 ± 0.1 |
| Longwave radiation-cloud forcing at TOA | 26 [26] | 22.3 ± 0.1 | 21.2 ± 0.1 | 24.6 ± 0.1 |
| Near-surface air temperature, °C | 14.0 ± 0.2 [26] | 13.0 ± 0.1 | 13.7 ± 0.1 | 13.8 ± 0.1 |
| Precipitation, mm/day | 2.5–2.8 [23] | 2.97 ± 0.01 | 3.13 ± 0.01 | 2.97 ± 0.01 |
| River water inflow to the World Ocean, 10^3 km^3/year | 29–40 [28] | 21.6 ± 0.1 | 31.8 ± 0.1 | 40.0 ± 0.3 |
| Snow coverage in Feb., mil. km^2 | 46 ± 2 [29] | 37.6 ± 1.8 | 39.9 ± 1.5 | 39.4 ± 1.5 |
| Permafrost area, mil. km^2 | 10.7–22.8 [30] | 8.2 ± 0.6 | 16.1 ± 0.4 | 5.0 ± 0.5 |
| Land area prone to seasonal freezing in NH, mil. km^2 | 54.4 ± 0.7 [31] | 46.1 ± 1.1 | 48.3 ± 1.1 | 51.6 ± 1.0 |
| Sea ice area in NH in March, mil. km^2 | 13.9 ± 0.4 [32] | 12.9 ± 0.3 | 14.4 ± 0.3 | 14.5 ± 0.3 |
| Sea ice area in NH in Sept., mil. km^2 | 5.3 ± 0.6 [32] | 4.5 ± 0.5 | 4.5 ± 0.5 | 6.1 ± 0.5 |

Heat flux units are W/m^2; other units are given with the name of the corresponding parameter. Where possible, ± shows the standard deviation of the annual mean value. Bracketed numbers refer to the sources for observations. Source: "Simulation of Modern Climate with the New Version of the INM RAS Climate Model".
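A couple of simple checks can be run on the table. The Python sketch below is my own arithmetic, not a calculation from either paper: it takes the top-of-atmosphere (TOA) means for each model version and computes incoming solar minus outgoing solar minus outgoing longwave, ignoring the ± spreads, and then tests whether a few INMCM5 fluxes fall inside the observed ranges. Only a handful of rows are copied in.

```python
# TOA fluxes from the table, W/m^2: (incoming solar, outgoing solar, outgoing longwave)
toa = {
    "INMCM3": (341.7, 97.5, 240.8),
    "INMCM4": (341.8, 96.2, 244.6),
    "INMCM5": (341.4, 98.5, 241.6),
}


def toa_imbalance(incoming, outgoing_solar, outgoing_longwave):
    """Net downward flux at TOA from the table means (spreads ignored)."""
    return incoming - outgoing_solar - outgoing_longwave


imbalance = {name: round(toa_imbalance(*fluxes), 1) for name, fluxes in toa.items()}

# Observed ranges and INMCM5 means for a few rows of the table, W/m^2
observed_ranges = {
    "Outgoing solar radiation at TOA": (96, 100),
    "Outgoing longwave radiation at TOA": (236, 242),
    "Solar radiation absorbed by surface": (154, 166),
    "Latent heat flux from surface": (70, 85),
}
inmcm5 = {
    "Outgoing solar radiation at TOA": 98.5,
    "Outgoing longwave radiation at TOA": 241.6,
    "Solar radiation absorbed by surface": 169.0,
    "Latent heat flux from surface": 86.1,
}

within_range = {
    name: lo <= inmcm5[name] <= hi for name, (lo, hi) in observed_ranges.items()
}

for name, value in imbalance.items():
    print(f"{name}: TOA imbalance {value} W/m^2")
for name, ok in within_range.items():
    print(f"{name}: {'inside' if ok else 'outside'} observed range")
```

On these rows, the two TOA fluxes for INMCM5 sit inside the observed ranges, while solar radiation absorbed by the surface and latent heat flux sit slightly above them.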

Ocean Temperature and Salinity

The model biases in potential temperature and salinity averaged over longitude with respect to WOA09 (Antonov et al. 2010) are shown in Fig.12. Positive bias in the Southern Ocean penetrates from the surface downward for up to 300 m, while negative bias in the tropics can be seen even in the 100–1000 m layer.

Nevertheless, zonal mean temperature error at any level from the surface to the bottom is small. This was not the case for the INMCM4, where one could see negative temperature bias up to 2–3 K from 1.5 km to the bottom nearly at all latitudes, and 2–3 K positive bias at levels of 700–1000 m. The reason for this improvement is the introduction of a higher background coefficient for vertical diffusion at high depth (3000 m and higher) than at intermediate depth (300–500 m). Positive temperature bias at 45–65 N at all depths could probably be explained by shortcomings in the representation of deep convection [similar errors can be seen for most of the CMIP5 models (Flato et al. 2013, their Fig.9.13)].

Another feature common for many present day climate models (and for the INMCM5 as well) is negative bias in southern tropical ocean salinity from the surface to 500 m. It can be explained by overestimation of precipitation at the southern branch of the Intertropical Convergence Zone. Meridional heat flux in the ocean (Fig.13) is not far from available estimates (Trenberth and Caron 2001). It looks similar to the one for the INMCM4, but maximum of northward transport in the Atlantic in the INMCM5 is about 0.1–0.2 × 10^15 W higher than the one in the INMCM4, probably because of the increased horizontal resolution in the oceanic block.

Sea Ice

In the Arctic, the model sea ice area is just slightly overestimated. Overestimation of the Arctic sea ice area is connected with negative bias in the surface temperature. At the same time, connection of the sea ice area error with the positive salinity bias is not evident because ice formation is almost compensated by ice melting, and the total salinity source for this pair of processes is not large. The amplitude and phase of the sea ice annual cycle are reproduced correctly by the model. In the Antarctic, sea ice area is underestimated by a factor of 1.5 in all seasons, apparently due to the positive temperature bias. Note that the correct simulation of sea ice area dynamics in both hemispheres simultaneously is a difficult task for climate modeling.

The analysis of the model time series of the SST anomalies shows that the El Niño event frequency is approximately the same in the model and data, but the model El Niños happen too regularly. Atmospheric response to the El Niño events is also underestimated in the model by a factor of 1.5 with respect to the reanalysis data.

Conclusion

Based on the CMIP5 model INMCM4 the next version of the Institute of Numerical Mathematics RAS climate model was developed (INMCM5). The most important changes include new parameterizations of large scale condensation (cloud fraction and cloud water are now the prognostic variables), and increased vertical resolution in the atmosphere (73 vertical levels instead of 21, top model level raised from 30 to 60 km). In the oceanic block, horizontal resolution was increased by a factor of 2 in both directions.

The climate model was supplemented by the aerosol block. The model got a new parallel code with improved computational efficiency and scalability. With the new version of climate model we performed a test model run (80 years) to simulate the present-day Earth climate. The model mean state was compared with the available datasets. The structures of the surface temperature and precipitation biases in the INMCM5 are typical for the present climate models. Nevertheless, the RMS error in surface temperature, precipitation as well as zonal mean temperature and zonal wind are reduced in the INMCM5 with respect to its previous version, the INMCM4.

The model is capable of reproducing equatorial stratospheric QBO and SSWs. The model biases for the sea surface height and surface salinity are reduced in the new version as well, probably due to increasing spatial resolution in the oceanic block. Bias in ocean potential temperature at depths below 700 m in the INMCM5 is also reduced with respect to the one in the INMCM4. This is likely because of the tuning of the background vertical diffusion coefficient.

Model sea ice area is reproduced well enough in the Arctic, but is underestimated in the Antarctic (as a result of the overestimated surface temperature). RMS error in the surface salinity is reduced almost everywhere compared to the previous model except the Arctic (where the positive bias becomes larger). As a final remark one can conclude that the INMCM5 is substantially better in almost all aspects than its previous version and we plan to use this model as a core component for the coming CMIP6 experiment.

Summary

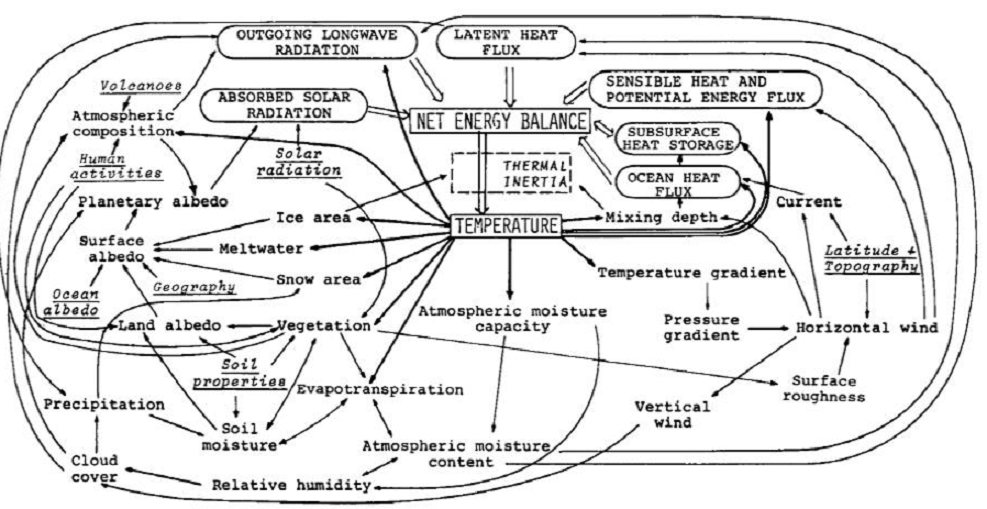

On the one hand, this model example shows that the intent is simple: to represent dynamically the energy balance of our planetary climate system. On the other hand, the model description shows how many parameters are involved, and the complexity of the interacting processes. The attempt to simulate operations of the climate system is a monumental task with many outstanding challenges, and this latest version is another step in an iterative development.

Note regarding the influence of rising CO2 on the energy balance: global warming advocates estimate a CO2 perturbation of 4 W/m^2. In the climate parameters table above, observations of the radiation fluxes have a 2 W/m^2 error range at best, and in several cases are observed in ranges of 10 to 15 W/m^2.
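The comparison in the note can be reproduced from the table with a few lines of Python. This is only a sketch of the arithmetic: the widths are simply the upper minus the lower bound of each observed range, and reading a 4 W/m^2-wide range as roughly ±2 W/m^2 is my interpretation. The longwave balance row is left out because its range is ambiguous as printed.

```python
PERTURBATION = 4.0  # W/m^2, the CO2 perturbation figure quoted in the note

# Observed flux ranges from the climate parameters table, W/m^2
observed_ranges = {
    "Outgoing solar radiation at TOA": (96, 100),
    "Outgoing longwave radiation at TOA": (236, 242),
    "Solar radiation absorbed by surface": (154, 166),
    "Solar radiation reflected by surface": (22, 26),
    "Solar radiation reflected by atmosphere": (74, 78),
    "Solar radiation absorbed by atmosphere": (74, 91),
    "Direct heat flux from surface": (15, 25),
    "Latent heat flux from surface": (70, 85),
}

# Width of each observed range (upper bound minus lower bound)
widths = {name: hi - lo for name, (lo, hi) in observed_ranges.items()}

# Rows whose observational range is wider than the quoted perturbation
wider_than_perturbation = sorted(
    name for name, width in widths.items() if width > PERTURBATION
)

for name, width in sorted(widths.items(), key=lambda item: item[1]):
    print(f"{width:>3} W/m^2  {name}")
print(f"{len(wider_than_perturbation)} of {len(widths)} ranges exceed {PERTURBATION} W/m^2")
```

The narrowest ranges are 4 W/m^2 wide, and four of the eight rows listed here span 10 W/m^2 or more.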

We do not yet have access to the time series temperature outputs from INMCM5 to compare with observations or with other CMIP6 models. Presumably that will happen in the future.

Early Schematic: Flows and Feedbacks for Climate Models