An International Temperature Data Review Project has been announced, along with a call for analyses of surface temperature records to be submitted. The project is described here: http://www.tempdatareview.org/

Below is my submission.

Update April 27: Notice was received today that this submission has gone to the Panel.

Overview

I did a study of 2013 records from the CRN top-rated US surface stations. It was published Aug. 20, 2014 at No Tricks Zone. Most remarkable about these records is the extensive local climate diversity that appears when station sites are relatively free of urban heat sources. 35% (8 of 23) of the stations reported cooling over the century. Indeed, if we remove the 8 warmest records, the average rate flips from +0.16°C to -0.14°C. To respect the intrinsic quality of temperatures, I calculated monthly slopes for each station and averaged them to get station trends.

Recently I updated that study with 2014 data and compared adjusted to unadjusted records. The analysis shows the effect of GHCN adjustments on each of the 23 stations in the sample. The average station was warmed by +0.58 C/century, from +0.18 in the unadjusted records to +0.76 in the adjusted records. 19 station records were warmed, 6 of them by more than +1 C/century. 4 stations were cooled, with most of the total cooling coming at one station, Tallahassee. So for this set of stations, the chance of adjustments producing warming is 19/23 or 83%.

Adjustments Multiply Warming at US CRN1 Stations

A study of US CRN1 stations, top-rated for their siting quality, shows that GHCN adjusted data produces warming trends several times larger than unadjusted data.

The unadjusted files from ghcn.v3.qcu have been scrutinized for outlier values, and for step changes indicative of non-climatic biases. In no case was the normal variability pattern interrupted by step changes. Coverage was strong: the typical history exceeded 95%, and some achieved 100%. (Coverage is measured as the percentage of months with a reported Tavg value out of the total months in the station’s lifetime.)
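The coverage measure described above can be sketched in a few lines of Python. This is only an illustration, not the workbook used for the study: the function name and the sample record are hypothetical, and missing months are assumed to be represented as `None`.

```python
from typing import Optional, Sequence


def coverage_percent(monthly_tavg: Sequence[Optional[float]]) -> float:
    """Percentage of months with a reported Tavg out of all months in the record."""
    if not monthly_tavg:
        raise ValueError("empty record")
    reported = sum(1 for value in monthly_tavg if value is not None)
    return 100.0 * reported / len(monthly_tavg)


# Hypothetical two-year record (24 months) with one unreported month.
record = [5.1, 6.0, 8.2, 11.4, 15.0, 18.9, 22.3, 21.7, 17.2, 11.8, 7.0, 4.4,
          4.9, None, 8.5, 11.0, 15.3, 19.2, 22.0, 21.5, 17.0, 12.1, 6.8, 4.1]

print(f"coverage: {coverage_percent(record):.1f}%")
```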

The adjusted files are another story. Typically, years of data are deleted, often several years in a row. Entire rows are erased including the year identifier, so finding the missing years is a tedious manual process looking for gaps in the sequence of years. All stations except one lost years of data through adjustments, often in recent years. At one station, four years of data from 2007 to 2010 were deleted; in another case, 5 years of data from 2002 to 2006 went missing. Strikingly, 9 stations that show no 2014 data in the adjusted file have fully reported 2014 in the unadjusted file.
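The manual search for missing years could in principle be automated. The sketch below is a hedged illustration in Python: it assumes the list of years present in each file has already been extracted, and the function names and sample years are hypothetical rather than taken from any station.

```python
def deleted_years(unadjusted_years, adjusted_years):
    """Years that appear in the unadjusted file but not in the adjusted file."""
    return sorted(set(unadjusted_years) - set(adjusted_years))


def group_runs(years):
    """Collapse a sorted list of years into (first, last) runs of consecutive years."""
    runs = []
    for year in years:
        if runs and year == runs[-1][1] + 1:
            runs[-1][1] = year          # extend the current run
        else:
            runs.append([year, year])   # start a new run
    return [tuple(run) for run in runs]


# Hypothetical example: the adjusted file lacks 2002-2006 and 2014.
unadjusted = list(range(1995, 2015))
adjusted = [y for y in unadjusted if not (2002 <= y <= 2006) and y != 2014]

for first, last in group_runs(deleted_years(unadjusted, adjusted)):
    print(first if first == last else f"{first}-{last}")
```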

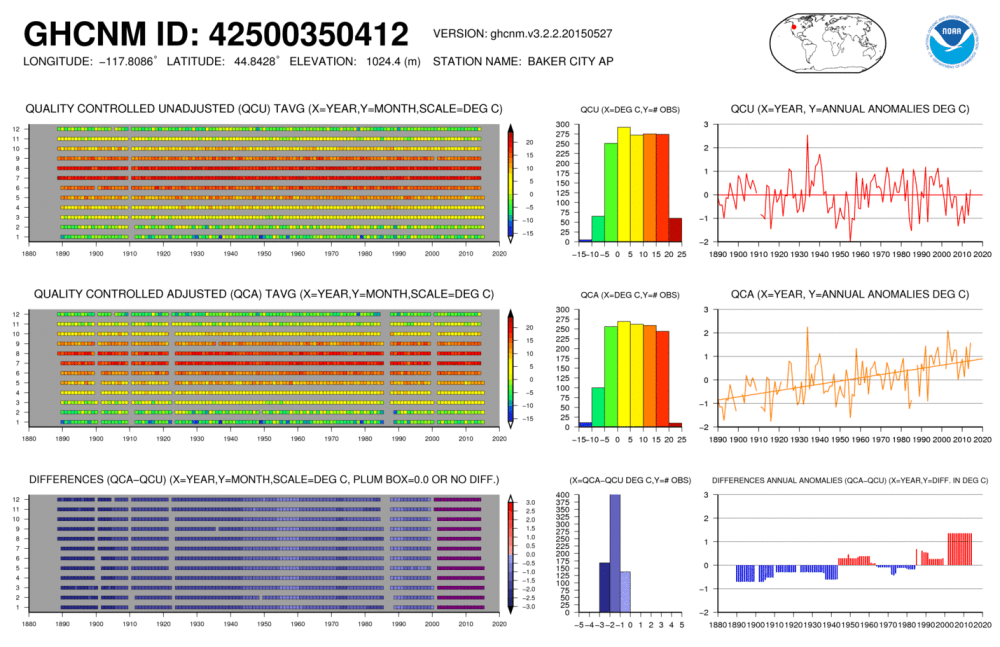

It is instructive to see the effect of adjustments upon individual stations. A prime example is 350412 Baker City, Oregon.

Over 125 years GHCN v.3 unadjusted shows a trend of -0.0051 C/century. The adjusted data shows +1.48C/century. How does the difference arise? The coverage is about the same, though 7 years of data are dropped in the adjusted file. However, the values are systematically lowered in the adjusted version: Average annual temperature is +6C +/-2C for the adjusted file; +9.4C +/-1.7C unadjusted.

How then is a warming trend produced? In the distant past, prior to 1911, adjusted temperatures decade by decade are cooler by more than 2C each month. That adjustment changes to -1.8C for 1912-1935, then to -2.2C for 1936-1943. The adjustment ranges from -1.2C to -1.5C for 1944-1988, then changes to -1C until 2001. From 2002 onward, adjusted values are more than 1C higher than the unadjusted record.

Some apologists for the adjustments have stated that cooling is done as much as warming. Here it is demonstrated that by cooling selectively in the past, a warming trend can be created, even though the adjusted record ends up cooler on average over the 20th Century.
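The arithmetic behind this point can be illustrated with a toy calculation in Python. The sketch assumes a perfectly flat synthetic series and applies step offsets rounded from the Baker City description above; it is not the station's data and does not reproduce the reported trends. The names `OFFSETS`, `offset_for` and `ols_slope` are hypothetical.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


# (last year of period, offset in C); rounded, illustrative values only.
OFFSETS = [(1911, -2.0), (1935, -1.8), (1943, -2.2),
           (1988, -1.35), (2001, -1.0), (2014, +1.0)]


def offset_for(year):
    """Return the step offset that applies to a given year."""
    for last_year, offset in OFFSETS:
        if year <= last_year:
            return offset
    raise ValueError(f"no offset defined for {year}")


years = list(range(1890, 2015))                 # 125 years
flat = [9.4] * len(years)                       # synthetic record with no trend
adjusted = [t + offset_for(y) for t, y in zip(flat, years)]

trend = 100 * ols_slope(years, adjusted)        # C per century
mean_shift = sum(adjusted) / len(adjusted) - 9.4

print(f"trend after offsets: {trend:+.2f} C/century")
print(f"change in average:   {mean_shift:+.2f} C")
```

In this toy case the series ends up cooler on average yet shows a positive trend, because the offsets become less negative toward the present.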

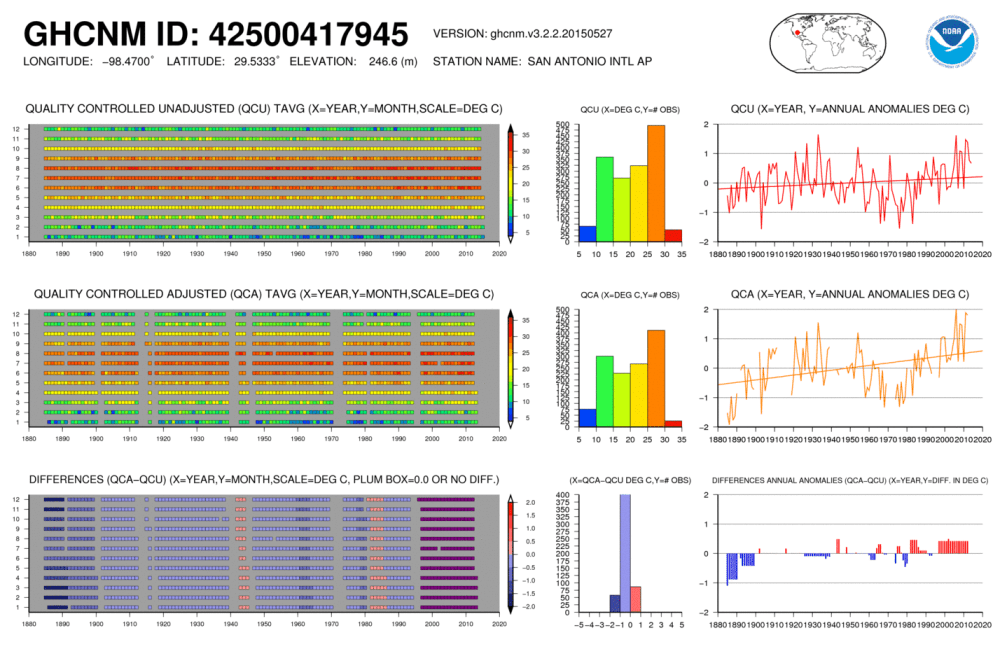

A different kind of example is provided by 417945 San Antonio, Texas. Here the unadjusted record had a complete 100% coverage, and the adjustments deleted 262 months of data, reducing the coverage to 83%. In addition, the past was cooled, adjustments ranging from -1.2C per month in 1885 gradually coming to -0.2C by 1970. These cooling adjustments were minor, only reducing the average annual temperature by 0.16C. Temperatures since 1997 were increased by about 0.5C each year. Due to deleted years of data along with recent increases, San Antonio went from an unadjusted trend of +0.30C/century to an adjusted trend of +0.92C/century, tripling the warming at that location.

The overall comparison for the set of CRN1 stations:

| Area | FIRST CLASS US STATIONS | | |
| --- | --- | --- | --- |
| History | 1874 to 2014 | | |
| Stations | 23 | | |
| Dataset | Unadjusted | Adjusted | |
| Average Trend | 0.18 | 0.76 | °C/Century |
| Std. Deviation | 0.66 | 0.54 | °C/Century |
| Max Trend | 1.18 | 1.91 | °C/Century |
| Min Trend | -2.00 | -0.48 | °C/Century |
| Ave. Length | 119 | | Years |

These stations are sited away from urban heat sources, and the unadjusted records reveal a diversity of local climates, as shown by the deviation and contrasting Max and Min results. Seven stations showed negative trends over their lifetimes through 2014.

Adjusted data reduces the diversity and shifts the results toward warming. The average trend is 4 times warmer, only 2 stations show any cooling, and at smaller rates. Many stations had warming rates increased by multiples from the unadjusted rates. Whereas 4 months had negative trends in the unadjusted dataset, no months show cooling after adjustments.

Periodic Rates from US CRN1 Stations

| Start | End | Unadjusted (°C/Century) | Adjusted (°C/Century) |
| --- | --- | --- | --- |
| 1915 | 1944 | 1.22 | 1.51 |
| 1944 | 1976 | -1.48 | -0.92 |
| 1976 | 1998 | 3.12 | 4.35 |
| 1998 | 2014 | -1.67 | -1.84 |
| 1915 | 2014 | 0.005 | 0.68 |

Looking at periodic trends within the series, it is clear that adjustments at these stations increased the trend over the last 100 years from flat to +0.68 C/Century. This was achieved by reducing the cooling mid-century and accelerating the warming prior to 1998.

Methodology

Surfacestations.org provides a list of 23 stations that have the CRN#1 Rating for the quality of the sites. I obtained the records from the latest GHCNv3 monthly qcu report, did my own data quality review and built a Temperature Trend Analysis workbook. I made a companion workbook using the GHCNv3 qca report. Both datasets are available here:

ftp://ftp.ncdc.noaa.gov/pub/data/ghcn/v3/

As it happens, the stations are spread out across the continental US (CONUS): NW: Oregon, North Dakota, Montana; SW: California, Nevada, Colorado, Texas; MW: Indiana, Missouri, Arkansas, Louisiana; NE: New York, Rhode Island, Pennsylvania; SE: Georgia, Alabama, Mississippi, Florida.

The method involves creating for each station a spreadsheet with monthly average temperatures imported into a 2D array, a row for each year, a column for each month. The sheet calculates a trend for each month for all of the years recorded at that station. Then the monthly trends are averaged together for a lifetime trend for that station. To be comparable to others, the station trend is presented as degrees per 100 years. A summary sheet collects all the trends from all the sheets to provide trend analysis for the set of stations and the geographical area of interest. Thus the temperatures themselves are not compared, but rather the change derivative expressed as a slope.
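For readers who prefer code to spreadsheets, the same procedure can be sketched in Python. This is a re-expression under stated assumptions, not the Excel workbook itself: it assumes an ordinary least-squares slope for each calendar month, represents missing months as `None`, and uses hypothetical names and a synthetic record.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def station_trend_per_century(table):
    """table maps year -> list of 12 monthly means (None where unreported).

    A slope is fitted to each calendar month across all years, then the
    monthly slopes are averaged and scaled to degrees per 100 years.
    """
    monthly_slopes = []
    for month in range(12):
        points = [(year, row[month]) for year, row in sorted(table.items())
                  if row[month] is not None]
        if len(points) < 2:
            continue                      # not enough data to fit this month
        xs, ys = zip(*points)
        monthly_slopes.append(ols_slope(xs, ys))
    if not monthly_slopes:
        raise ValueError("no month has enough data for a trend")
    return 100 * sum(monthly_slopes) / len(monthly_slopes)


# Synthetic record: every month warms by 0.01 C per year; one value is missing.
table = {year: [month + 0.01 * (year - 1900) for month in range(12)]
         for year in range(1900, 2000)}
table[1950][3] = None

print(f"{station_trend_per_century(table):+.2f} C/century")
```

Because only slopes are averaged, months with very different typical temperatures (January versus July) contribute equally to the station trend.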

I have built Excel workbooks to do this analysis, and have attached two workbooks: USHCN1 Adjusted and Unadjusted.

Conclusion

These 23 US stations comprise a random sample for studying the effects of adjustments upon historical records. Included are all USHCN stations inspected by surfacestations.org that, in their judgment, met the CRN standard for #1 rating. The sample was formed on a physical criterion, siting quality, independent of the content of the temperature records. The only bias in the selection is the expectation that the measured temperatures should be uncontaminated by urban heat sources.

It is startling to see how distorted and degraded are the adjusted records compared to the records submitted by weather authorities. No theory is offered here as to how or why this has happened, only to disclose the records themselves and make the comparisons.

In conclusion, it is a matter of concern not only that individual station histories are altered by adjustments, but also that the adjusted dataset is the one used as input into programs computing global anomalies and averages. This much diminished dataset does not inspire confidence in the temperature reconstruction products built upon it.

Thank you for undertaking this project. Hopefully my analyses are useful in your work.

Sincerely, Ron Clutz

“The adjusted files are another story. Typically, years of data are deleted, often several years in a row.” Do you know the explanation(s) for the missing years?

I suppose missing years are effectively placed “on the trend line of the existing values”, as you mention for missing months in “Is It Warmer Now than a Century ago? It Depends.” Or, no?

I have no explanation for why data is changed or deleted. The climate centers say that it is done by algorithms.

Thank you. And thanks for submitting your work to the Project.

Let’s clarify things. The unadjusted records are from national weather services (NWS) and there are usually some days and months unreported, and thus missing in the record. Trend analysis effectively considers those missing data to be on the trendline of the existing data.

In the adjusted records, some data reported by NWS were removed, deleted from the files. The trend analysis does consider both missing and deleted data to be on the trendline. Adjustments alter the trends both by changing some data and also by deleting data from the original records.
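The statement that a least-squares trend treats missing values as if they lay on the trendline can be checked numerically. The Python sketch below uses a short hypothetical series: it fits a line to the reported values only, fills the gaps with points on that line, and refits. The names and numbers are illustrative.

```python
def ols_fit(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


years = list(range(2000, 2010))
temps = [9.1, 9.4, None, 8.8, 9.6, None, None, 9.0, 9.9, 9.3]  # hypothetical

# Fit using only the reported values.
obs_years = [y for y, t in zip(years, temps) if t is not None]
obs_temps = [t for t in temps if t is not None]
slope, intercept = ols_fit(obs_years, obs_temps)

# Fill each gap with the value on the fitted line, then refit.
filled = [t if t is not None else intercept + slope * y
          for y, t in zip(years, temps)]
slope_filled, _ = ols_fit(years, filled)

print(f"slope, gaps skipped: {slope:.6f}")
print(f"slope, gaps filled:  {slope_filled:.6f}")
```

The two slopes agree, which is why skipping a gap and placing it on the trendline are equivalent for this kind of fit.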

Hey Ron! Great analysis! Glad to see that you have submitted it.

You say, “No theory is offered here as to how or why this has happened, only to disclose the records themselves and make the comparisons.” Smart move. It is difficult enough to get CAGW supporters to even look at the alterations, but especially so when one points out the most plausible motivations for the pattern of changes.

Ron-

Have you noticed any pattern in the deleted data? If you were to compare the unadjusted data to the same data, but with the deleted months removed, would the trends change significantly? Or do the deletions appear to be random?

Ted, thanks for your comment. We know that all but 4 of the stations were warmed by a combination of altering and deleting data in the submitted NWS reports. Other than San Antonio, I did not study the deleted data in detail. I will need to do that.

Thanks for looking into it.

If you let me remove 10+% of pretty much any data set, I could certainly induce a trend that wasn’t in the original. While I have my problems with the explanations they give, I can conceive of legitimate reasons for adjustments that would alter the trends. But deletions, on the other hand, should be entirely random, and every one of them should come with a footnote explaining why that data point was deemed invalid. If not, I see that as more of a smoking gun than the adjustments could ever be.

Ted, I looked again into San Antonio, the record with the most deletions.

Deletions could be occurring because of the missing daily data flags added during qc (quality control) processing of incoming records. FYI in the qcu file when a monthly average has the flag “a” attached, it means one daily measurement was missing, “b” means two days missing, etc., up to nine days missing. Ten or more days missing, and the month average is missing.

In my analysis I accepted all monthly averages with flags, reasoning that an average from 2/3 or more of the days is preferable to any other representation of that month’s temperature at that station. Theoretically, if an algorithm set the standard higher (say <4 days missing or no days missing), then some monthly numbers I accepted would be deleted in that adjustment processing. It is also possible that pairwise homogenization could create blank months to be infilled from other stations in the region, though I am not knowledgeable about that algorithm.
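To make the flag logic concrete, here is a small Python sketch of how such a threshold would act on the daily-missing flag. It assumes a blank flag means no days missing and the letters "a" through "i" mean one through nine days missing, as described above; the function names and the threshold parameter are hypothetical, and this is not the actual adjustment algorithm.

```python
def days_missing(flag):
    """Translate a daily-missing flag into a count of missing days.

    Assumes blank means 0 and 'a'..'i' mean 1..9 days missing.
    """
    flag = flag.strip()
    if flag == "":
        return 0
    if len(flag) == 1 and "a" <= flag <= "i":
        return ord(flag) - ord("a") + 1
    raise ValueError(f"unrecognized flag: {flag!r}")


def keep_month(flag, max_missing_days=9):
    """Accept a monthly average only if at most max_missing_days are missing."""
    return days_missing(flag) <= max_missing_days


# With the default, every flagged month is accepted, as in my analysis.
print(keep_month("d"))                       # True
# A stricter hypothetical rule (fewer than 4 days missing) rejects it.
print(keep_month("d", max_missing_days=3))   # False
```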

Also, I wanted to see how entire years go missing, especially in the last decade, including the 9 records with 2014 results deleted.

Studying again San Antonio, I can find no rhyme or reason to the deletions. Very few values have flags (very clean record), and no relation to deletions. As I said in the submission, 262 months of data were deleted from this record, and deleted months tended to come in clusters (below is month/year from latest to oldest):

5/2013-12/2014

4/1994-11/1996

5/1977-2/1979

9/1970-3/1974

6/1945-4/1947

11/1939-7/1942

10/1916-4/1918

9/1912-8/1915

5/1900-3/1902

All months suffered about the same number of deletions and I found no pattern relating to warmer or cooler years.
