Precipitation Misunderstandings

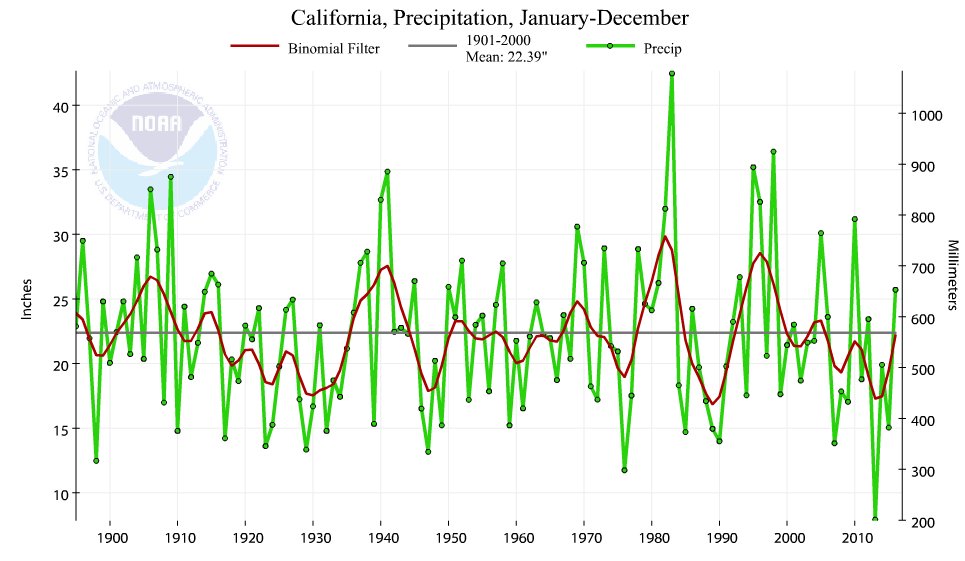

A previous post on Temperature Misunderstandings addressed mistaken notions about the meaning of temperature measurements and records. This post looks at rainfall, the other primary determinant of climates. On this topic, California shows everyone how misconceptions arise and how to view precipitation statistics in context.

Lessons learned from the end of California’s “permanent drought”

A report by Larry Kummer documents how widely California’s recent water shortage was proclaimed a “permanent drought”. It goes on to document how El Nino conditions have ended that shortage.

Status of the California drought

“During the past week, a series of storms bringing widespread rain and snow showers impacted the states along the Pacific Coast and northern Rockies. In California, the cumulative effect of several months of abundant precipitation has significantly improved drought conditions across the state.”

— US Drought monitor – California, February 9.

Precipitation over California in the water year so far (October 1 to January 31) is 178% of average for this date. The snowpack is 179% of average, as of Feb 8. Our reservoirs are at 125% of average capacity. See the bottom line summary as of February 7, from the US Drought monitor for California.

The improvement has been tremendous. Year over year, the area in exceptional drought has gone from 38% of California to 0%, extreme drought from 23% to 1%, and severe drought from 20% to 10%, while dry and moderate drought went from 18% to 48% and no drought from <1% to 41%. See the map below. And the rain continues to fall.
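To see the size of the shift at a glance, the quoted shares can be tallied in a few lines of Python. This is only a sketch using the percentages given above; the category labels and variable names are made up for illustration, and the "<1%" figure is entered as 0, a lower bound.

```python
# Share of California's area in each drought category, in percent,
# as quoted in the text. Labels and names are illustrative only.
year_ago = {
    "exceptional": 38,
    "extreme": 23,
    "severe": 20,
    "dry_or_moderate": 18,
    "none": 0,  # quoted as "<1%", entered here as a lower bound
}
now = {
    "exceptional": 0,
    "extreme": 1,
    "severe": 10,
    "dry_or_moderate": 48,
    "none": 41,
}

def severe_or_worse(shares):
    """Sum the three most serious categories."""
    return shares["exceptional"] + shares["extreme"] + shares["severe"]

print("Severe or worse a year ago:", severe_or_worse(year_ago), "%")
print("Severe or worse now:", severe_or_worse(now), "%")
print("Total accounted for now:", sum(now.values()), "%")
```

On these figures the area in severe drought or worse fell from 81% of the state to 11%, and the current shares account for the whole state.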

In addition, there is the saga of Oroville Dam, threatened by its reservoir overfilling.

Confusing Weather and Climate

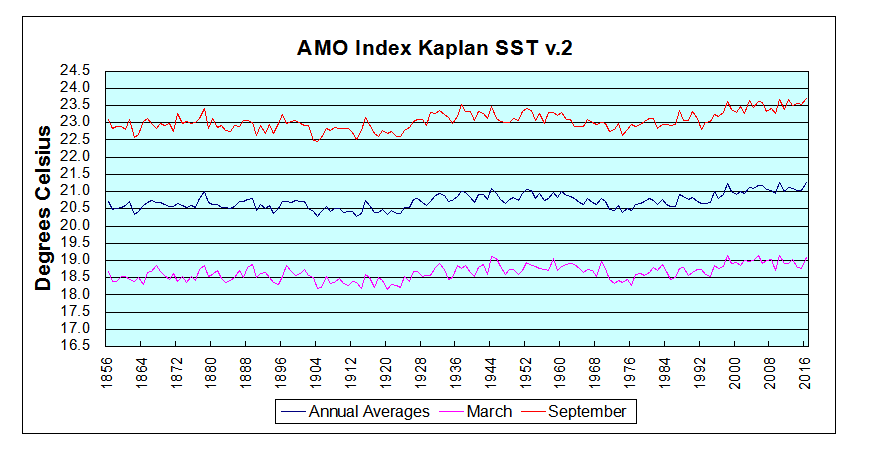

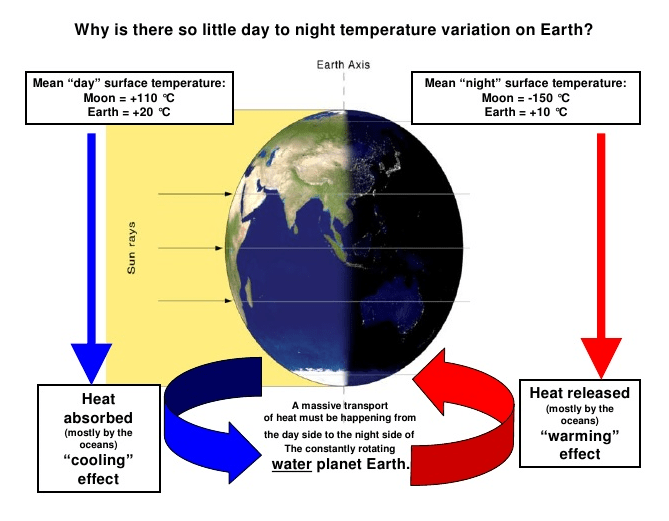

As with temperature, rainy weather is not climate. Neither is fair, sunny weather permanent. Precipitation varies within any particular climate, both with the seasons and on decadal and multi-decadal timescales. For a context on precipitation patterns around the world see Here Comes the Rain Again.

It is a mistake to call a temporary lack of rain a drought, or worse, a permanent drought, and equally a mistake to call a return of rainfall the end of a drought. California’s history as a desert environment does not change just because politicians and the public have short memories.

H/T to Eric Simpson for reminding us of that history:

There is also this perceptive comment by tomholsinger:

I wouldn’t be so quick about the drought ending. Droughts are ALWAYS multi-season events. I was very impressed by the references below, which made the point that the 20th Century average of ~200 million acre feet of precipitation in California (rain and snow combined) is way more than the average of ~140 million acre feet over the last 2000 years.

Drying of the West, National Geographic

The West without Water: What Past Floods, Droughts, and Other Climatic Clues Tell Us about Tomorrow, Ingram, B. Lynn, and Malamud-Roam, Frances, 2013, University of California Press

Tom goes on to quote himself from a Modesto Bee op-ed almost two years ago.

Global warming has nothing to do with this – history is bad enough. A long-standing pre-industrial regional climate fluctuation seems underway, returning us from the wettest century in the past 1000 years to at least the historic average of much less (~70%) rain and snow. Many paleoclimatologists believe we are entering a still worse mega-drought.

An extreme drought by historic standards means a drop to 35-40% of the 20th Century average for 10-20 years. California has experienced two centuries-long such extreme mega-droughts in the past 2000 years.

Our average 20th Century precipitation (rain and snow combined) produced about 200 million acre feet of water annually over the whole state. 118 million acre feet went to nature in 2000, and 82 million was allocated by humans – the first 39 million for federal mandates, 9 million was used by people and industry, and the last 34 million for irrigation. A drop to the historic average of ~140 million acre feet over the past 2000 years means extinction for California agriculture – it would bear almost all the burden of the decrease even if the federal water is released. An extreme drought means a drop to about 75 million acre feet, and we might be starting 1-2 centuries of that.

This is happening to the entire Southwest. ~20 million acre feet of the Southwest’s precipitation annually entered the Colorado River in the 20th Century, of which ~12 million is currently withdrawn by Americans. Colorado River flow too has averaged much less over the past 2000 years (12-14 million acre feet annually), and it drops to 7-8 million in droughts which sometimes last centuries.

A drop to only the historic average precipitation over the past 2000 years means catastrophe for the Southwest. 2/3 of the very wet 20th Century average is normal for the entire area. We can expect ALL of California’s allotment of Colorado River to be diverted to urban areas in Arizona and Nevada in the decades of drought the region seems to be entering.
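The figures in the quoted op-ed can be checked against one another with a little arithmetic. The sketch below takes the numbers exactly as quoted (they are not independently verified here); the variable names and the pct helper are illustrative only.

```python
# All volumes are in million acre feet (MAF) per year, as quoted in the op-ed.
# Variable names and the pct() helper are illustrative, not from the source.

def pct(part, whole):
    """Return part as a percentage of whole."""
    return 100 * part / whole

# California water budget for an average 20th Century year
ca_20th_century = 200
ca_to_nature = 118
ca_federal, ca_urban, ca_irrigation = 39, 9, 34
ca_allocated = ca_federal + ca_urban + ca_irrigation

# 2000-year average and extreme-drought level for California
ca_historic = 140
ca_extreme = 75

# Colorado River inflow: 20th Century average, 2000-year range, drought range
co_20th_century = 20
co_historic = (12, 14)
co_drought = (7, 8)

print("Allocated by humans:", ca_allocated, "MAF")
print("Nature plus allocated:", ca_to_nature + ca_allocated, "MAF")
print("CA historic average, % of 20th Century:", pct(ca_historic, ca_20th_century))
print("CA extreme drought, % of 20th Century:", pct(ca_extreme, ca_20th_century))
print("Colorado historic, % of 20th Century:",
      [pct(v, co_20th_century) for v in co_historic])
print("Colorado drought, % of 20th Century:",
      [pct(v, co_20th_century) for v in co_drought])
```

The quoted numbers hang together: the three human allocations add up to the stated 82 million acre feet, 140 is 70% of 200, and 75 is 37.5% of 200, inside the 35-40% range given for an extreme drought. The Colorado River figures work out to 60-70% of the 20th Century flow on average and 35-40% in droughts, in line with the "2/3" rule of thumb for the region.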

Summary

As with temperatures, changes in precipitation are misinterpreted when taken out of historical context. This is usually done to hype a sociopolitical agenda by distracting people from the baseline realities to which we can only adapt, not prevent.

The rainfall measures above show that California enjoyed an unusually wet century and it would have been prudent to take advantage of it by storing water resources. As the fable tells us, grasshoppers live for today, ants prepare for tomorrow.