Fishy Activists Destroying Hydro Dams

AP Photo/Nicholas K. Geranios

John Stossel brings us up to date on the fishy case for removing hydroelectric dams on the Snake River in Washington state. His Townhall article is A Dam Good Argument. Excerpts in italics with my bolds and added images.

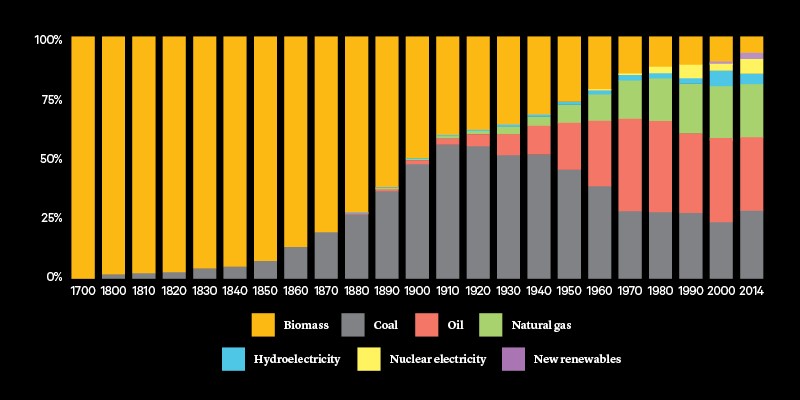

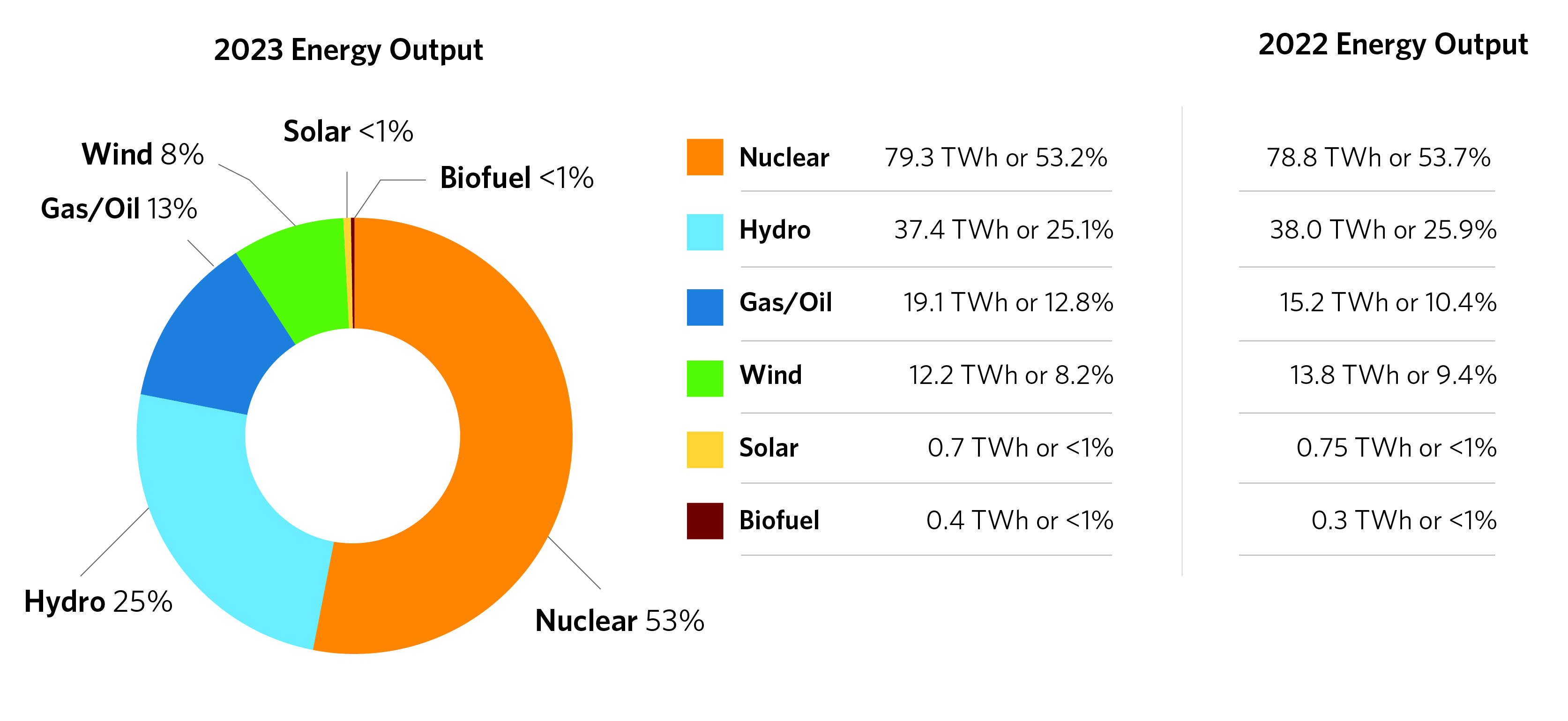

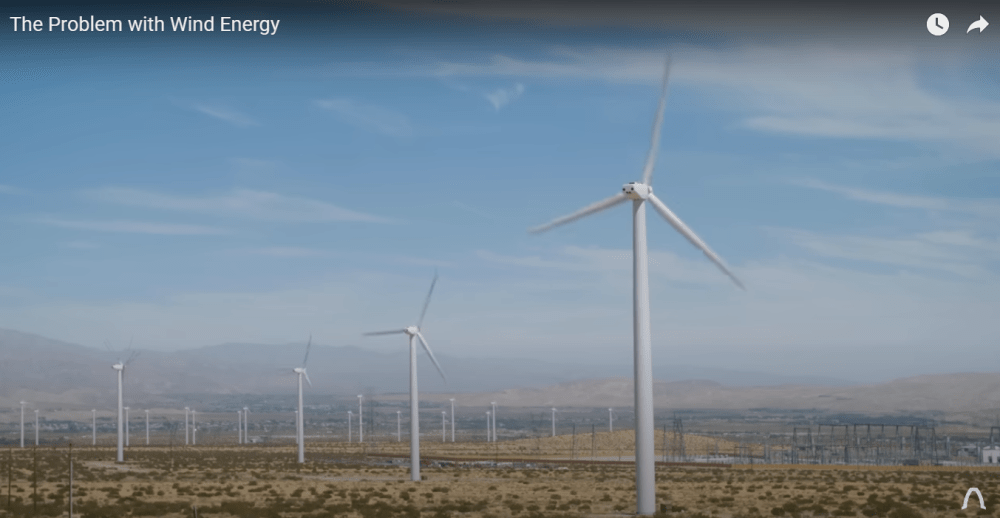

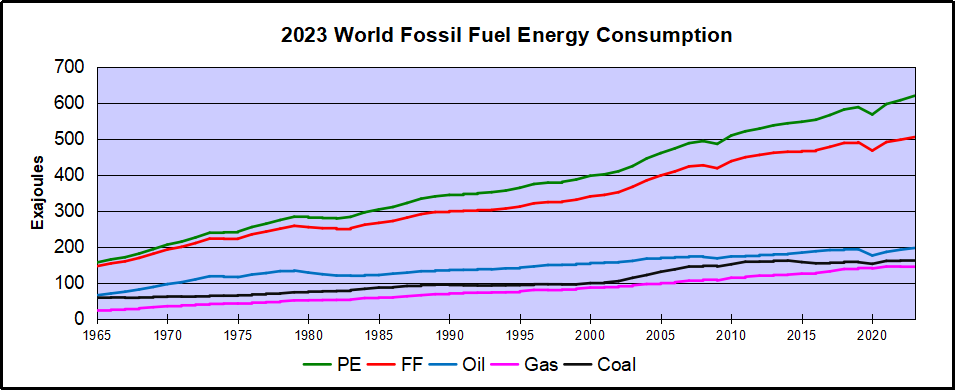

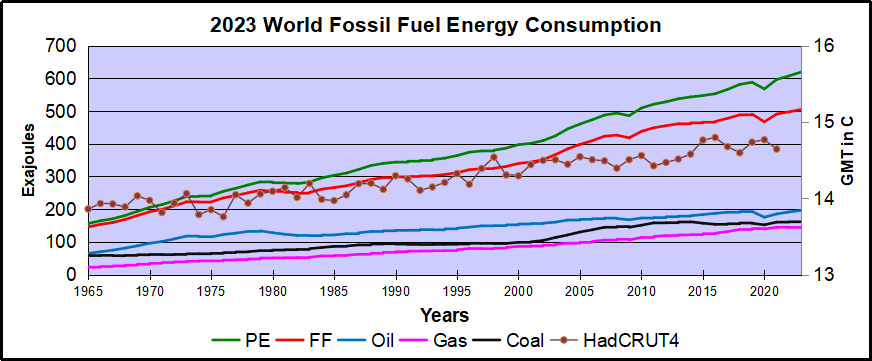

Instead of using fossil fuels, we’re told to use “clean” energy: wind, solar or hydropower. Hydro is the most reliable. Unlike wind and sunlight, it flows steadily.

But now, environmental groups want to destroy dams that create hydro power.

The Klamath River, on the Oregon/California border, flows by the remaining pieces of the Copco 2 Dam after deconstruction in June 2023. Juliet Grable / JPR

“Breach those dams,” an activist shouts in my new video. “Now is the time, our fish are on the line!”

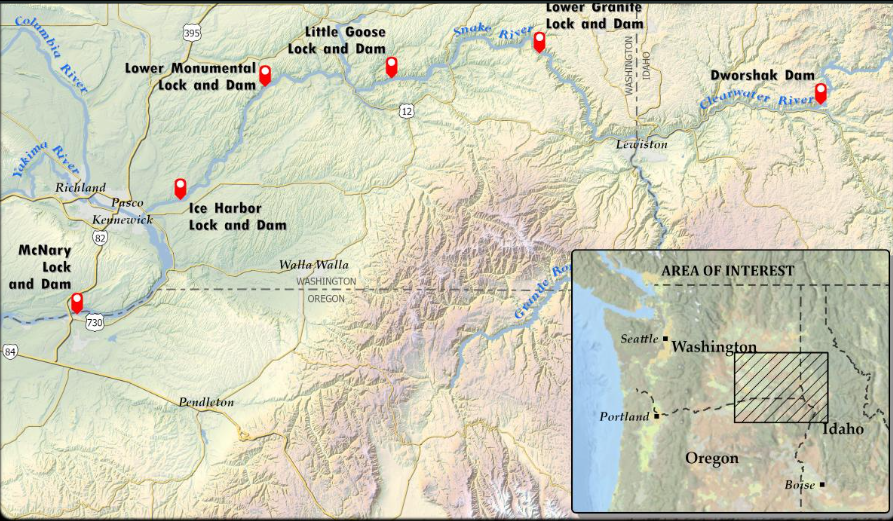

The activists have targeted four dams on the Snake River in Washington State. They claim the dams are driving salmon to extinction.

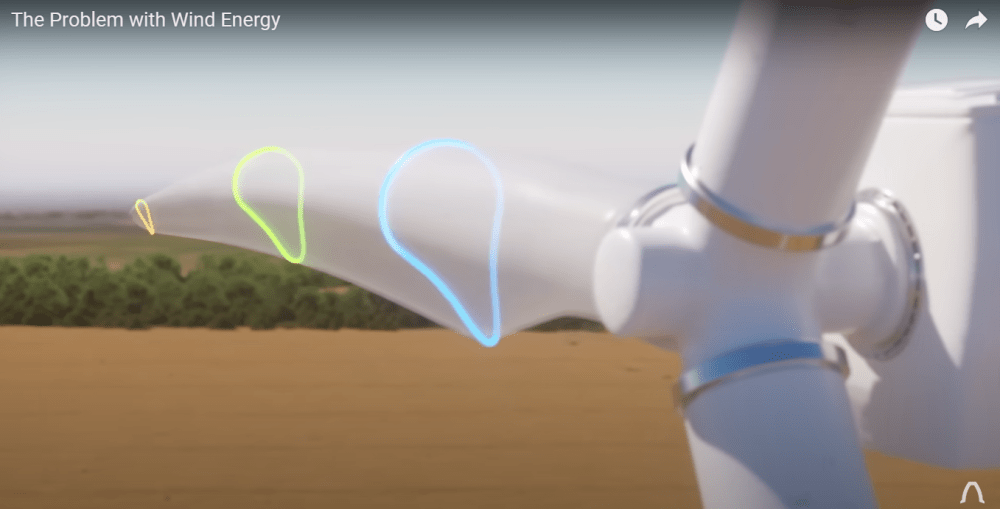

It’s true that dams once killed lots of salmon. Pregnant fish need to swim upriver to have babies, and their babies swim downriver to the ocean. Suddenly, dams were in the way. Salmon population dropped sharply.

But that was in the 1970s. Today, most salmon make it past the dam without trouble.

How? Fish-protecting innovations like fish ladders and spillways guide most of the salmon away from the turbines that generate electricity.

Lower Granite fish count station & ladder (left, bottom right); Lower Monumental fish ladder (top right) Source: Fish Passage Thru the Lower Snake & Columbia Rivers

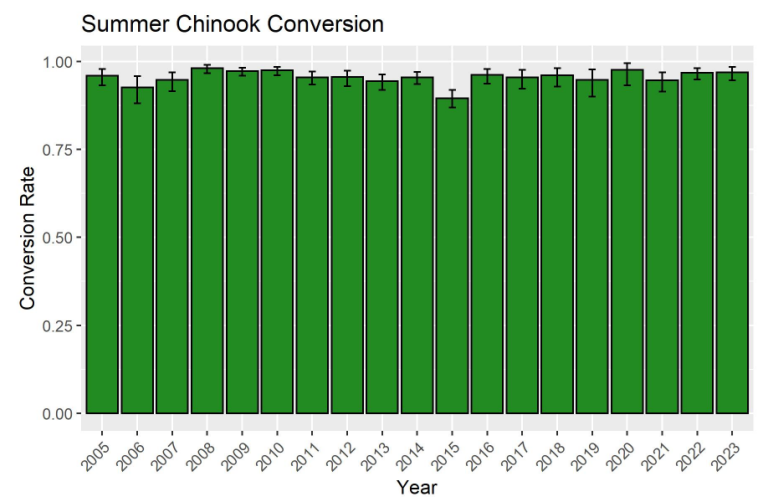

“Between 96% and 98% of the salmon successfully pass each dam,” says Todd Myers, Environmental Director at the Washington Policy Center. Even federal scientific agencies now say we can leave dams alone and fish will be fine.

But environmental groups don’t raise money by acknowledging good news. “Snake River Salmon Are in Crisis,” reads a headline from Earthjustice. Gullible media fall for it. The Snake River is the “most endangered in the country!” claimed the evening news anchor.

“That’s simply not true,” Myers explains. “All you have to do is look at the actual population numbers to know that that’s absurd.” Utterly absurd. In recent years, salmon populations are higher than they were in the 1980s and 90s.

The fish passage report for 2023 (here) has many results like this for various species. Conversion refers to completing the Snake River run from Ice Harbor through Lower Granite.

“They make these claims,” Myers says, “because they know people will believe them … they don’t want to believe that their favorite environmental group is dishonest.”

But many are. In 1999, environmental groups bought an ad in the New York Times saying “salmon … will be extinct by 2017.” “Did the environmentalists apologize?” I ask Myers. “No,” he says. “They repeat almost the exact same arguments today, they just changed the dates.”

I invited 10 activist groups that want to destroy dams to come to my studio and defend their claims about salmon extinction. Not one agreed. I understand why. They’ve already convinced the public and gullible politicians. Idaho’s Republican Congressman Mike Simpson says, “There is no viable path that can allow us to keep the dams in place.”

“We keep doing dumb things,” says Myers. “We put money into places where it doesn’t have an environmental impact, and then we wonder 10, 20, 30 years (later) why we haven’t made any environmental progress.”

Politicians and activists want to tear down Snake River dams

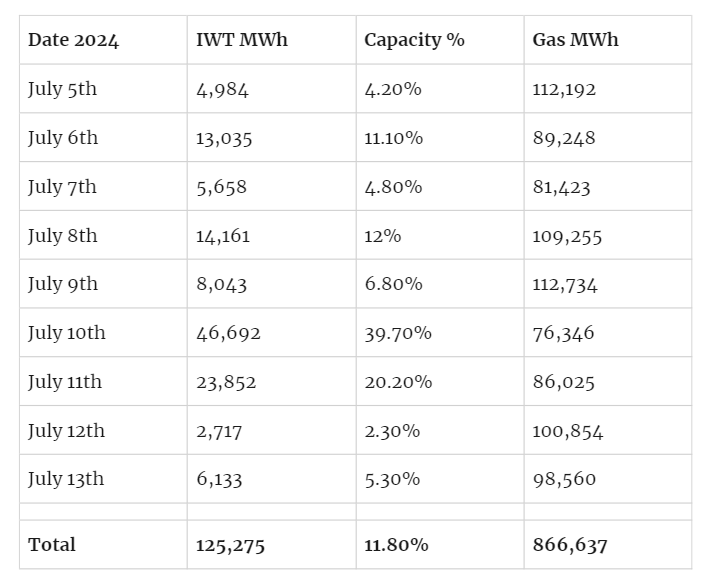

even though they generate tons of electricity.

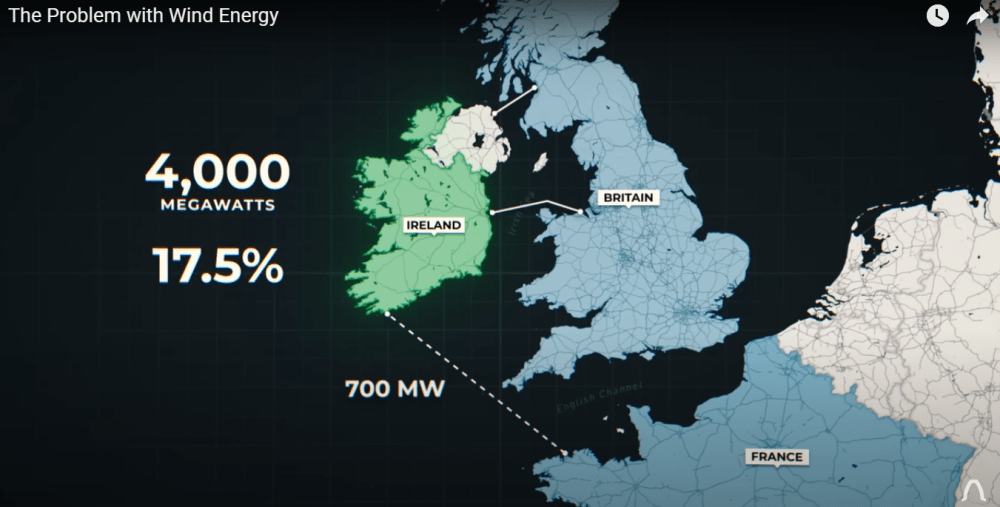

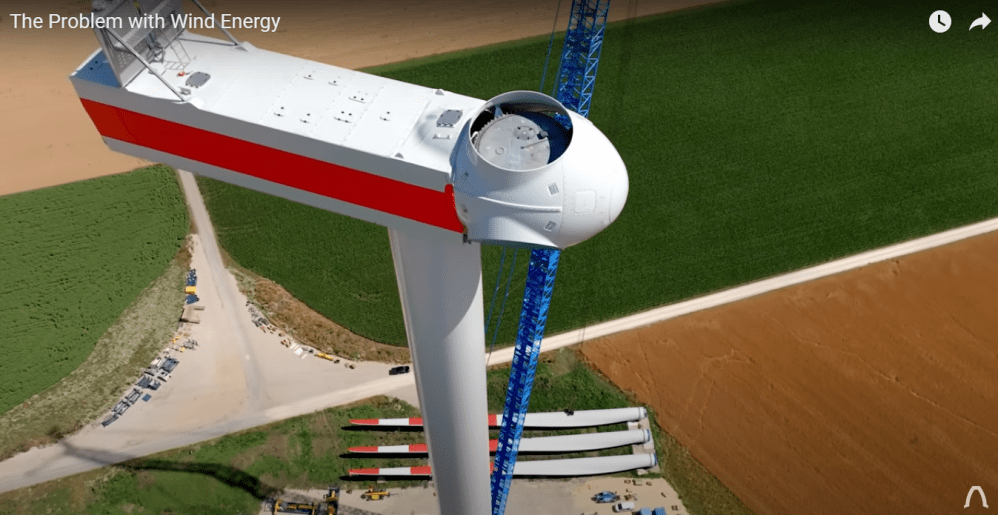

“Almost the same amount as all of the wind and solar turbines in Washington state,” says Myers. “Imagine if I told the environmental community we need to tear down every wind turbine and every solar panel. They would lose their minds. But that’s essentially what they’re advocating by tearing down Snake River dams.”

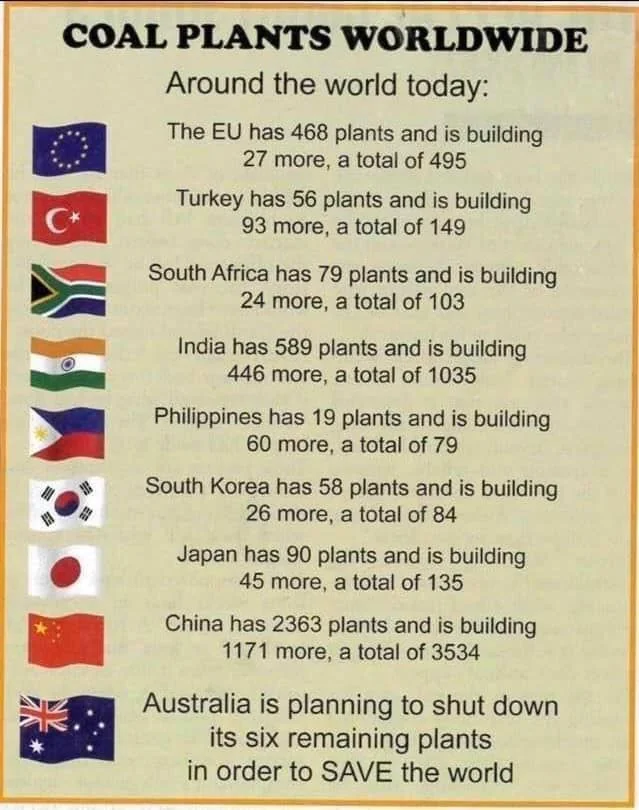

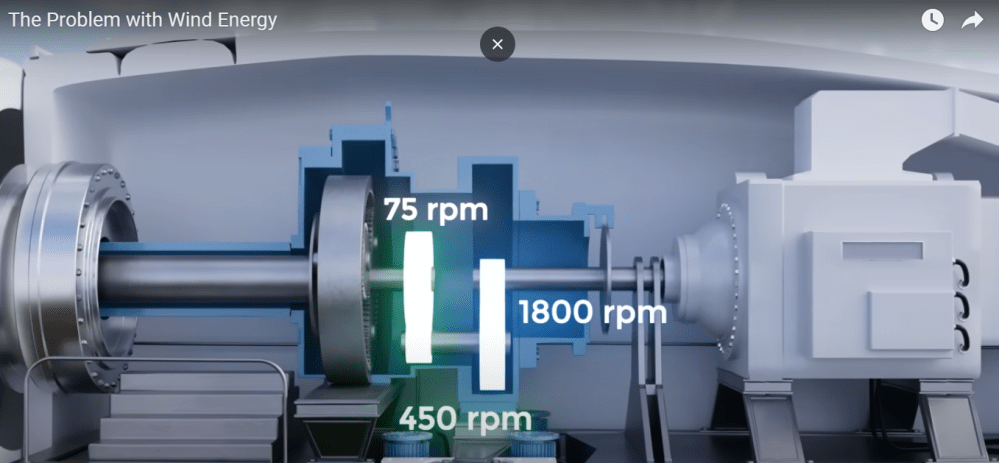

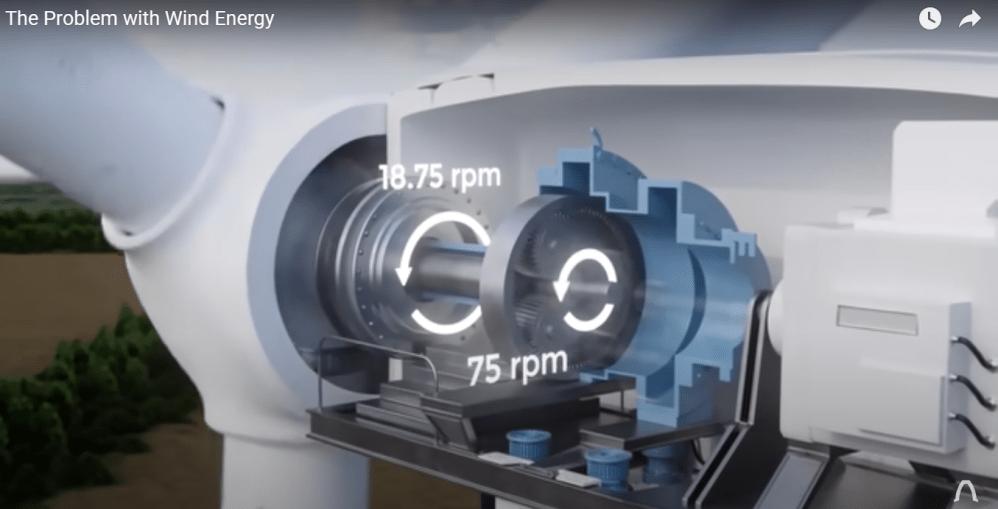

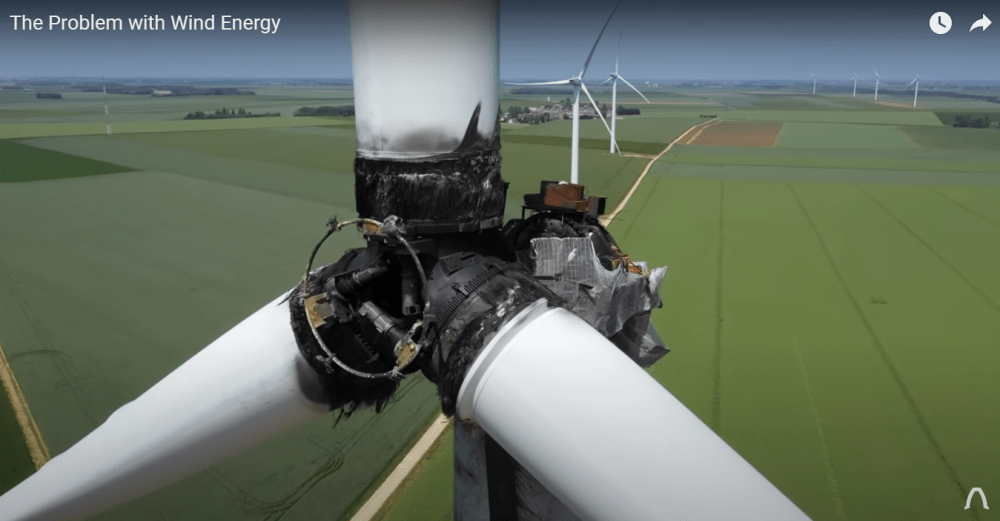

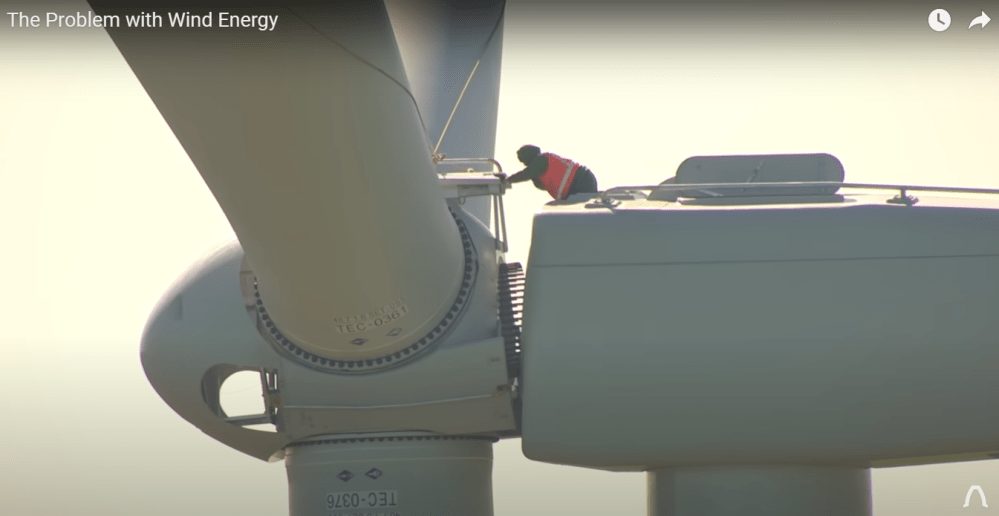

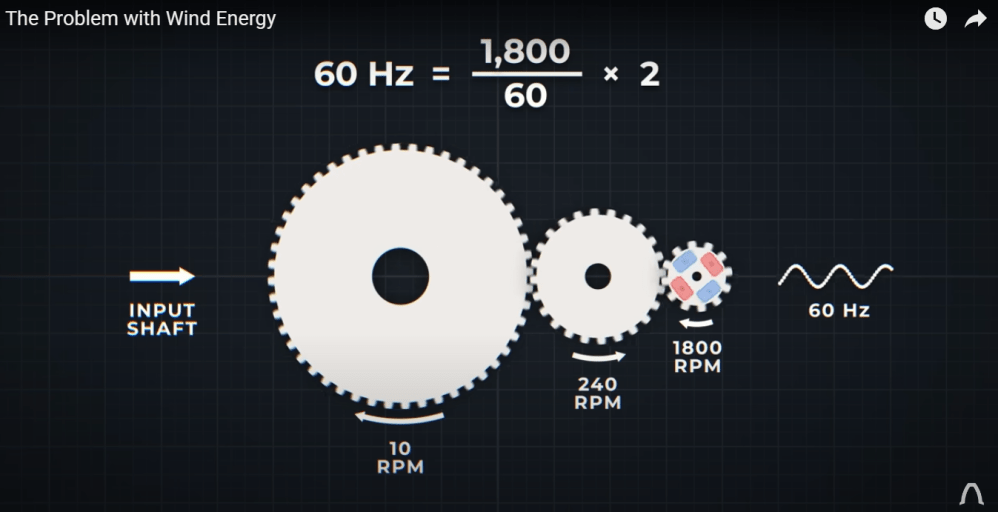

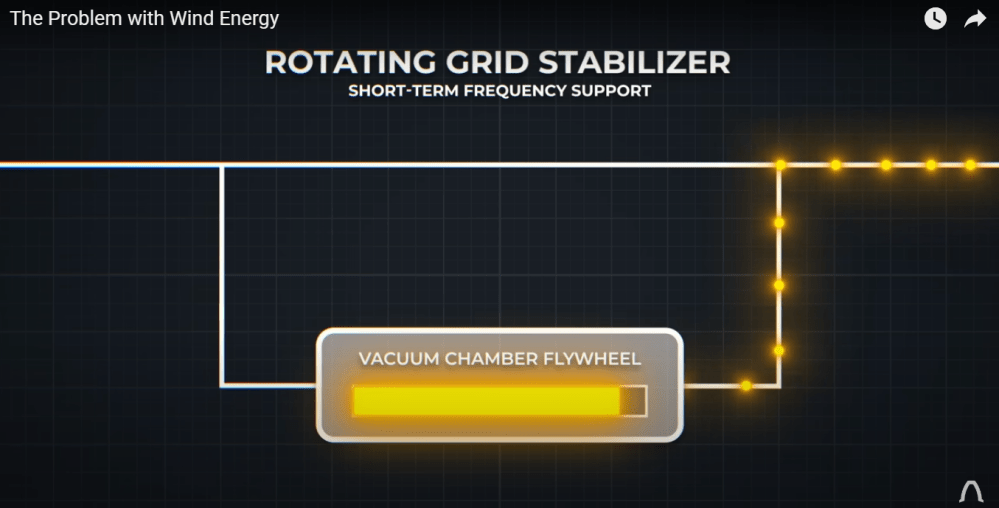

I push back: “They say, ‘Just build more wind turbines.’” “The problem is, several times a year, there’s no wind,” he replies. “You could build 10 times as many wind turbines, but if there’s no wind, there’s no electricity.”

Hydro, on the other hand, “can turn on and off whenever it’s needed. Destroying hydro and replacing it with wind makes absolutely no sense. It will do serious damage to our electrical grid.”

“It’s not their money,” I point out. “Exactly,” he says. “If you want to spend $35 billion on salmon, there’s lots of things we can do that would have a real impact.” Like what?

“(Reduce the population of) seals and sea lions,” he says. “The Washington Academy of Sciences says that unless we reduce the populations, we will not recover salmon.” “People used to hunt sea lions,” I note. “Yeah, that’s why the populations are higher today.”

But environmentalists don’t want people to hunt sea lions or seals. Instead, they push for destruction of dams. “Because it’s sexy and dramatic, it sells,” says Myers. “It’s more about feeling good than environmental results.”

PostScript

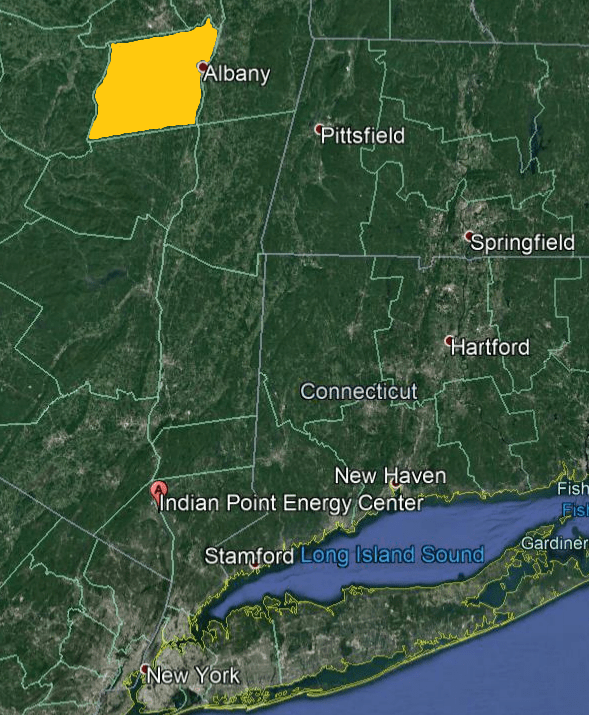

Of course there is a political dimension to this movement. Left coast woke progressives are targeting Lower Snake River dams located in Eastern Washington state. Folks there and in Eastern Oregon would rather be governed by common-sense leaders like those in Idaho.

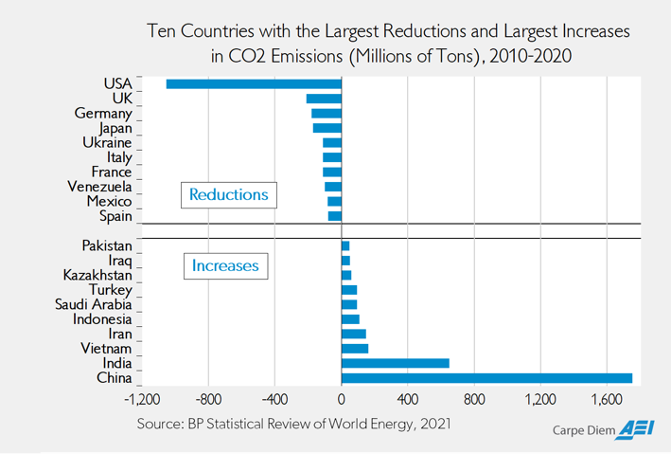

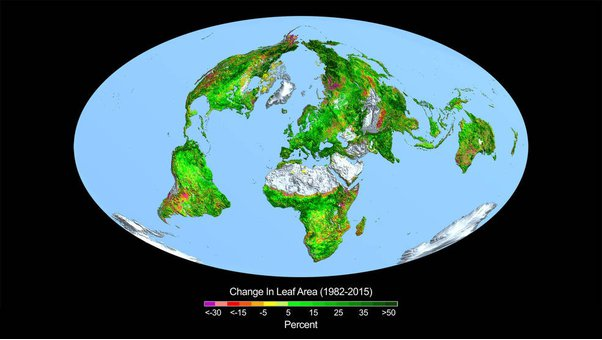

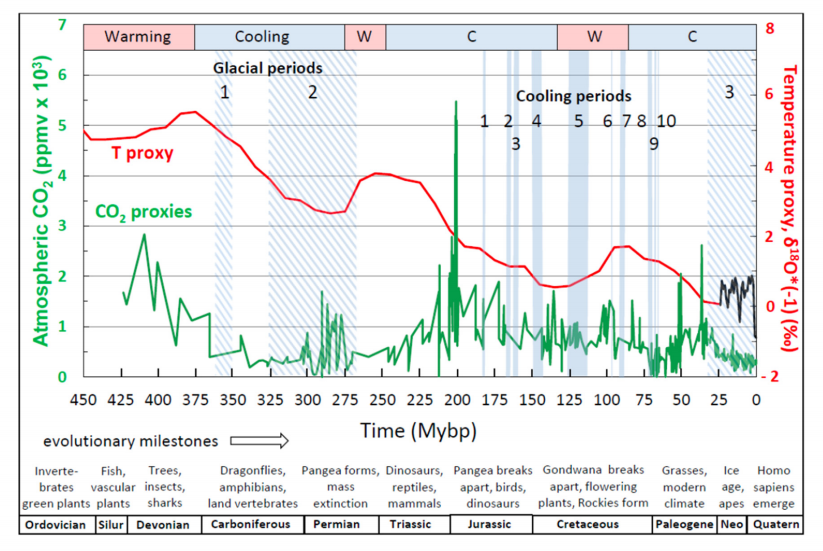

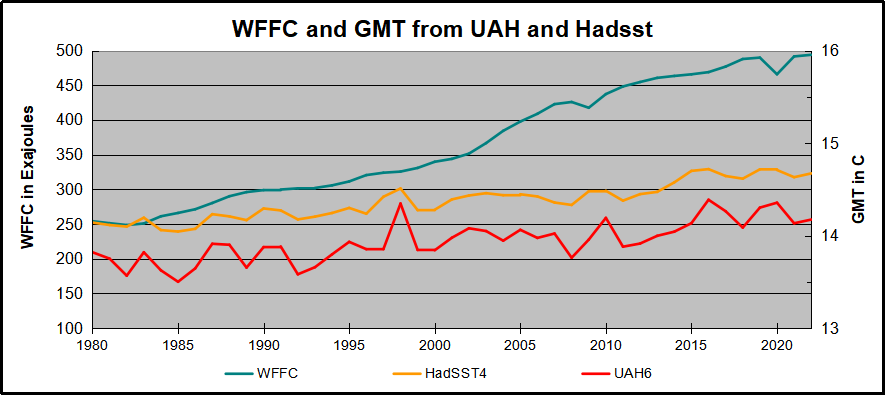

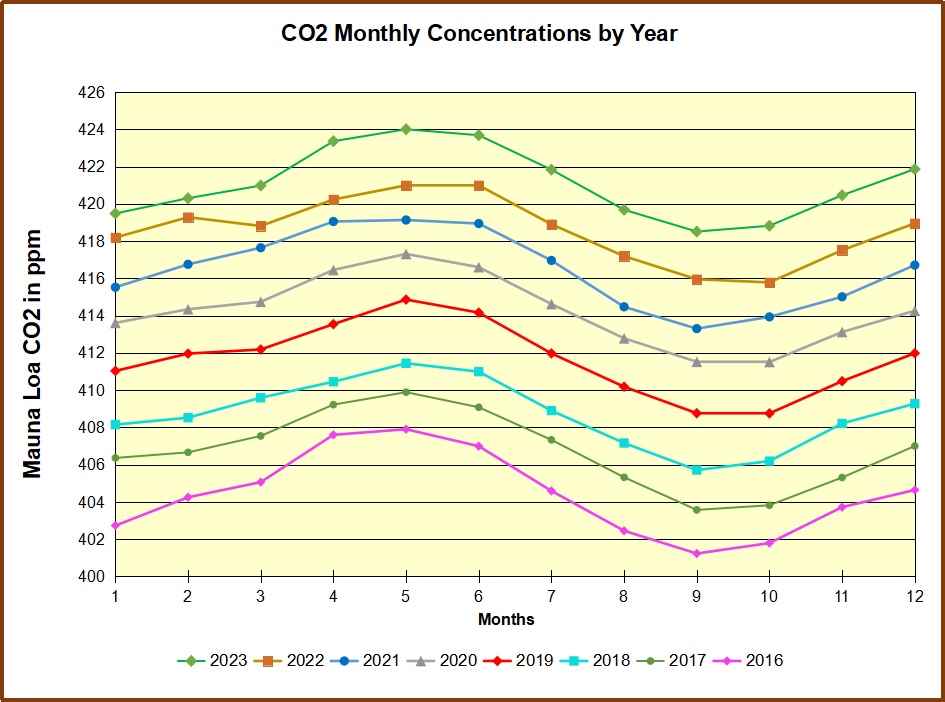

The case against the dams is actually about climatism. The fish are not at risk, as shown by many scientific reports. But climatists do not include hydro in their definition of “renewable.” And they promote fear of methane, claiming dam reservoirs increase methane emissions.

So here’s the political solution. Keep the dams open and the fish running to their spawning grounds. And to appease climatists, ban any transmission of electricity from those dams to Seattle and Western Washington state. Deal?

Background Post