Jennifer Beam Dowd writes at Slate: "The Sturgis Biker Rally Did Not Cause 266,796 Cases of COVID-19." Excerpts in italics with my bolds.

The recent mass gathering in South Dakota for the annual Sturgis Motorcycle Rally seemed like the perfect recipe for what epidemiologists call a “superspreading” event. Beginning Aug. 7, an estimated 460,000 attendees from all over the country descended on the small town of Sturgis for a 10-day rally filled with indoor and outdoor events such as concerts and drag racing.

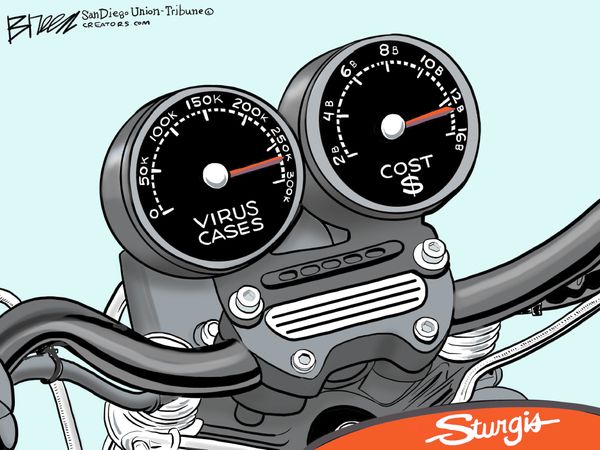

Now a new working paper by economist Dhaval Dave and colleagues is making headlines with their estimate that the Sturgis rally led to a shocking 266,796 new cases in the U.S. over a four-week period, which would account for a staggering 19 percent of newly confirmed cases in the U.S. in that time. They estimate the economic cost of these cases at $12.2 billion, based on previous estimates of the statistical cost of treating a COVID-19 patient.

Modeling infection transmission dynamics is hard, as we have seen from the less-than-stellar performance of many predictive COVID-19 models thus far. (Remember back in April, when the IHME model from the University of Washington predicted zero U.S. deaths in July?) Pandemic spread is difficult both to predict and to explain after the fact, much like trying to explain the direction and intensity of the current wildfires in the West. While some underlying factors do predict spread, there is a high degree of randomness, and small disturbances (like winds) can cause huge variation across time and space. Many outcomes that social scientists typically study, like income, are more stable and not as susceptible to these “butterfly effects” that threaten the validity of certain research designs.

While this approach (comparing case trends before and after the rally in counties that sent many attendees to Sturgis with counties that sent few or none) may sound sensible, it relies on strong assumptions that rarely hold in the real world. For one thing, there are many other differences between counties full of bike rally fans and those with none, and therein lies the challenge of creating a good “counterfactual” for the implied experiment: how do you compare trends in counties that differ on many geographic, social, and economic dimensions? The “parallel trends” assumption holds that, absent the rally, every county would have stayed on a similar trajectory, so that the only difference was the number of attendees each sent to the Sturgis rally. When this assumption is violated, the resulting estimates are not just off by a little; they can be completely wrong.

This type of modeling is risky, and the burden of proof for the believability of its assumptions is very high.
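To make the parallel-trends point concrete, here is a minimal Python sketch of a two-group, two-period difference-in-differences calculation. The function name `did_estimate` and every number in it are hypothetical, chosen only for illustration; the paper's actual county-level regression is not reproduced here. The second scenario shows how a pre-existing difference in trajectories gets reported as a rally effect.

```python
def did_estimate(treated_pre, treated_post, control_pre, control_post):
    """Difference-in-differences: change in the treated group minus change in the control group.

    This equals the causal effect only if, without treatment, both groups
    would have changed by the same amount (the parallel-trends assumption).
    """
    return (treated_post - treated_pre) - (control_post - control_pre)


# Hypothetical case counts per 100,000 residents (illustrative only, not from the paper).
control_pre, control_post = 100, 120  # low-inflow counties: +20 with no rally exposure

# Scenario A: parallel trends hold. High-inflow counties share the +20 trend
# and the rally adds a true effect of 10.
print(did_estimate(100, 130, control_pre, control_post))  # 10: recovers the true effect

# Scenario B: parallel trends fail. High-inflow counties were already on a
# steeper +35 trajectory and the rally adds nothing.
print(did_estimate(100, 135, control_pre, control_post))  # 15: a spurious "rally effect"
```

In scenario B the method attributes the whole gap in underlying trends to the rally, which is the failure mode described above.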

If thinking through the required transmission dynamics doesn’t raise your alarm bells, consider this: The paper’s results show that the significant increase in transmission was only evident after Aug. 26. That makes sense—it would be consistent with a lag time for infections from the beginning of the rally. Nonetheless, the authors state that their estimate of the total number of cases, 266,796, represents “19 percent of the 1.4 million cases of COVID-19 in the United States between August 2nd 2020 and September 2nd.” (Italics mine.) In reality, these extra cases must have occurred in the second half of the month, meaning these estimates would account for a staggering 45 percent of U.S. cases over those two weeks. This simply doesn’t seem plausible.
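The percentages above can be checked with a few lines of arithmetic. This sketch uses only the figures quoted in the post; the 1.4 million total is itself rounded, and the implied two-week total is a back-calculation from the critic's 45 percent figure, not an observed case count.

```python
# Figures quoted in the post.
rally_cases = 266_796
us_cases_month = 1_400_000  # U.S. cases, Aug. 2 to Sept. 2, as quoted from the paper

share_month = rally_cases / us_cases_month
print(f"Share of the month's cases: {share_month:.1%}")  # 19.1%

# If all rally cases fell in the second half of the month and made up 45 percent
# of U.S. cases in that window, the implied two-week U.S. total would be:
implied_two_week_total = rally_cases / 0.45
print(f"Implied two-week U.S. total: {implied_two_week_total:,.0f}")  # 592,880
```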

The 266,796 number also overstates the precision of the paper's estimates, even if the model is taken at face value. The confidence intervals for the “high inflow” counties seem to include zero (meaning the authors can’t say with statistical confidence that the rally caused any difference in infections across counties). No standard errors (measures of the variability around the estimate) are provided for the main regression results, and many key results are not statistically significant at conventional levels. So even if one believes the design and assumptions, the results are very “noisy” and carry caveats that don’t justify broadcasting the highly specific 266,796 figure with confidence, though I imagine that “somewhere between zero and 450,000 infections” would not have been as headline-grabbing.
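Why a confidence interval that includes zero undercuts the headline number can be sketched with a simple normal approximation. Because no standard errors are given for the main results, the `std_error` below is a made-up value used only to show the logic, and `normal_ci` is a hypothetical helper built on Python's standard `statistics.NormalDist`.

```python
from statistics import NormalDist


def normal_ci(estimate, std_error, level=0.95):
    """Two-sided confidence interval under a normal approximation (hypothetical helper)."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    return estimate - z * std_error, estimate + z * std_error


# Hypothetical standard error, invented purely for illustration.
low, high = normal_ci(266_796, std_error=140_000)
print(f"95% CI: {low:,.0f} to {high:,.0f}")
print("Includes zero:", low <= 0 <= high)  # True: "no effect" cannot be ruled out
```

A point estimate can be large while its interval still spans zero; in that case the data are consistent with no rally effect at all.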

The paper also estimates the rise in cases in Meade County, South Dakota, the site of the rally, and reports an increase of between 177 and 195 cases compared with a “synthetic control” of similar counties, an approach similar in spirit to the difference-in-differences model. This represents a 100 to 200 percent increase in cases, which also appears to be a serious overestimate. Looking at the raw case data for Meade County, cumulative cases from Aug. 3 to Sept. 2 increased from 45 to 74, a total increase of only 29 cases (though a 64 percent increase). With a cumulative case count of only 74 in Meade County by Sept. 2, an estimated increase of at least 177 cases, 103 more than the total observed over the whole pandemic, suggests serious problems with the model.
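The Meade County comparison is simple arithmetic on the numbers quoted above; this sketch just makes each step explicit.

```python
# Meade County cumulative confirmed cases, as quoted in the post.
cases_aug3, cases_sept2 = 45, 74
synthetic_low, synthetic_high = 177, 195  # paper's estimated rally-related increase

observed_increase = cases_sept2 - cases_aug3     # 29 new cases over the month
pct_increase = observed_increase / cases_aug3    # about 0.644
excess_over_total = synthetic_low - cases_sept2  # low estimate exceeds all cases to date by 103

print(observed_increase, f"{pct_increase:.0%}", excess_over_total)  # 29 64% 103
```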

Again, the authors employ a method that implicitly compares what happened in Meade County to similar hypothetical “twin” counties. Counties from within South Dakota and bordering states were excluded since they may also have been directly affected by the rally. Counties that shared similar urbanicity rates, population density, and pre-rally COVID-19 cases per capita were considered good candidates for this counterfactual group. Finding valid comparisons is key. Upon inspection, one of the counties weighted heavily as a “control” was in Hawaii—I think we can agree that islands during a pandemic are not likely a good control group for what is happening in the lower 48.
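A synthetic control builds a weighted “twin” from donor counties whose pre-rally case curves, once combined, best match the treated county's curve, and then reads the effect off the post-rally gap. The sketch below is a simplified, brute-force version under stated assumptions: it matches only on pre-period outcomes (the paper also considered urbanicity, population density, and pre-rally cases per capita), it searches a coarse weight grid, and it runs on invented toy data. The helper names are hypothetical. Nothing in the objective checks whether a heavily weighted donor is a sensible comparison, which is how a county in Hawaii can end up carrying a large weight.

```python
from itertools import product


def synthetic_weights(target_pre, donors_pre, steps=20):
    """Brute-force synthetic control weights for a small donor pool (hypothetical helper).

    Tries every non-negative weight vector on a grid of 1/steps that sums
    to 1 and keeps the one with the smallest squared pre-period error.
    """
    n = len(donors_pre)
    best, best_err = None, float("inf")
    for combo in product(range(steps + 1), repeat=n - 1):
        remainder = steps - sum(combo)
        if remainder < 0:
            continue  # these weights would sum to more than 1
        weights = [c / steps for c in combo] + [remainder / steps]
        err = sum(
            (t - sum(w * donor[i] for w, donor in zip(weights, donors_pre))) ** 2
            for i, t in enumerate(target_pre)
        )
        if err < best_err:
            best, best_err = weights, err
    return best


def weighted_series(weights, donors):
    """Combine donor series into one synthetic series using the given weights."""
    return [sum(w * donor[i] for w, donor in zip(weights, donors))
            for i in range(len(donors[0]))]


# Toy data (hypothetical): weekly case counts for a treated county and three donors.
target_pre = [2, 3, 4, 5]
donors_pre = [[1, 2, 3, 4], [3, 4, 5, 6], [10, 10, 10, 10]]
target_post = [9, 11]
donors_post = [[5, 6], [7, 8], [10, 10]]

w = synthetic_weights(target_pre, donors_pre)
synthetic_post = weighted_series(w, donors_post)
gap = [t - s for t, s in zip(target_post, synthetic_post)]
print(w, synthetic_post, gap)  # [0.5, 0.5, 0.0] [6.0, 7.0] [3.0, 4.0]
```

With these toy numbers the search puts all the weight on the first two donors, and the post-period gap of 3 and 4 cases would be read as the treatment effect. Practical implementations typically solve the constrained optimization directly rather than by grid search, but the validity question is the same: the estimate is only as good as the donors it leans on.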

None of this means that the rally was harmless. Common sense tells us that such a large event with close contact was risky and did increase transmission. The rise in Meade County was real and noticeable, albeit on the scale of 29 cases. Given the huge inflow to this specific location, along with increased testing for the event, a bump was not surprising.

Contact tracing reports have identified cases and deaths linked to the event, but only in the hundreds.

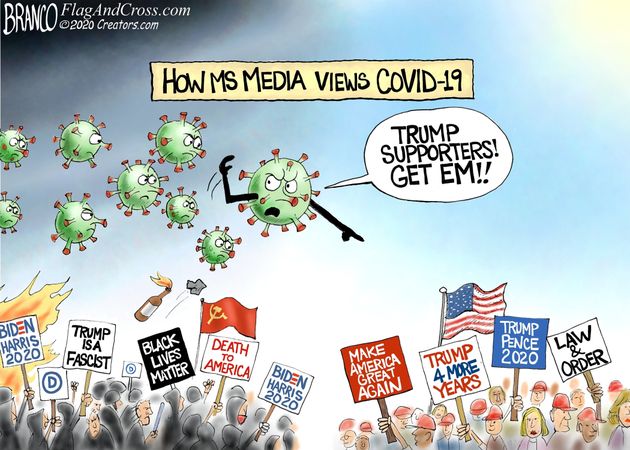

More broadly, while it’s important for us to understand factors driving COVID-19 transmission, the methodological challenges to identifying these effects at the aggregate level are difficult to overcome. Improved contact tracing and surveys at the individual level are the best way to gain insights into transmission dynamics. (At Dear Pandemic, a COVID-19 science communication effort I run with colleagues, we unfortunately spend much of our time explaining and correcting such misleading statistics.) The authors of this study have used the same study design to estimate the effects of other mass gatherings including the BLM protests and Trump’s June Tulsa, Oklahoma, rally. Each paper has given some part of the political spectrum something they might want to hear but has done very little to illuminate the actual risks of COVID-19 transmission at these events.

Exaggerated headlines and cherry-picking of results for “I told you so” media moments can dangerously undermine the long-term integrity of the science—something we can little afford right now.

You note:

“significant increase in transmission was only evident after Aug. 26. That makes sense—it would be consistent with a lag time for infections from the beginning of the rally.”

I would like to point out that if there were a real contagious disease in the population, people would have been in various stages of infection as they converged on Sturgis, and people would have been manifesting symptoms starting on day one. Not a single attendee was reported as sick. There was no virus.


txp, I took that comment by the author to mean it was reasonable for the study to assume a two-week lag before evidence of transmission appeared. The criticism was about the implausible number of cases they estimated. Your point is well taken, since people lacking enough viral load to be sick do not infect others, the exception being if they get sick a few days later.
