These days the media are full of stories about people setting targets to “decarbonize” the energy sources fueling their societies. Some are claiming (and some have failed notoriously) to achieve zero carbon electrification. We should take a deep breath, step back and rationally consider what is being discussed and proposed.

The History of Energy Transitions

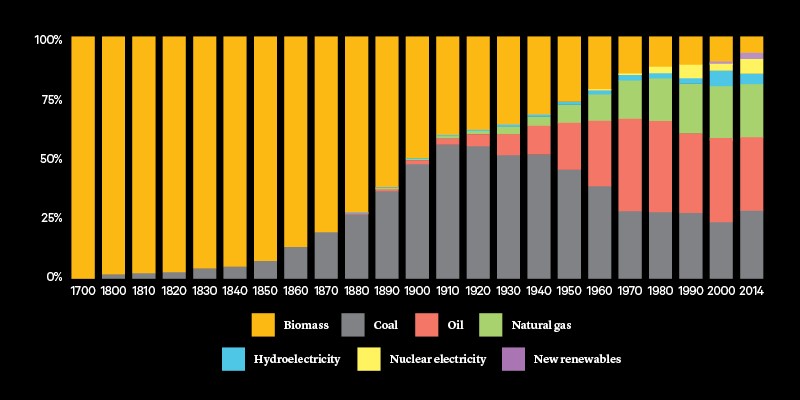

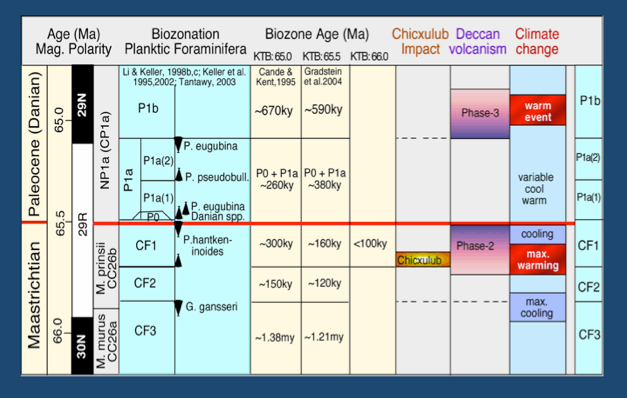

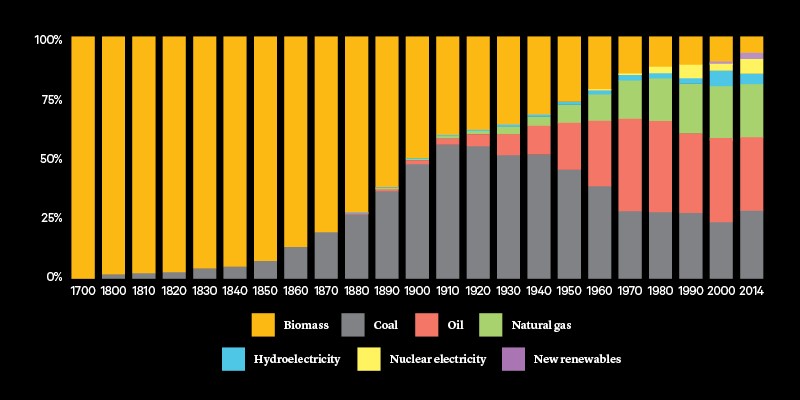

Thanks to Bill Gates we have this helpful graph showing the progress of human civilization resulting from shifts in the mix of energy sources.

Before the 19th century, it is all biomass, especially wood. Some historians think that the Roman Empire collapsed partly because the cost of importing firewood from distant territories exceeded the benefits. More recently, the 1800s saw the rise of coal, the industrial revolution and a remarkable social transformation, along, of course, with problems of mining and urban pollution. The 20th century is marked first by the discovery and use of oil and later by natural gas. Because the chart shows proportions, it shows oil and gas taking on greater importance, but in fact the total amount of energy produced and consumed in the modern world has grown exponentially. So energy from all sources, even biomass, has increased in absolute terms.

The global view also hides the great disparity between the advanced societies, which exploited carbon-based energy to become wealthy and to build large middle classes whose human resources multiply social capital and extend general prosperity, and the societies that have not yet done so. The wealthy societies have also used their wealth to protect their natural environments to a greater extent.

The 21st Century Energy Concern

Largely due to reports of rising temperatures from 1980 to 2000, alarms were sounded about global warming/climate change, along with calls to stop using carbon-based energy. To understand what “decarbonization” actually means, we have two recent resources that explain clearly what is involved and why we should be skeptical and rationally critical.

First, Master Resource describes how the anti-carbon agenda is now embedded in societal structures. Mark Krebs writes “Paris Lives! ‘Deep Decarbonization’ at DOE.” Excerpts in italics with my bolds.

Despite President Trump’s announcement that the U.S. would withdraw from the Paris Agreement, the basis of that agreement, “deep decarbonization” through “beneficial electrification”, is proceeding virtually unabated. This is occurring because it serves the purposes of the electric utility industry and its environmentalist allies, e.g., the Natural Resources Defense Council (NRDC).

According to the Paris Agreement, the fundamental strategy for climate stabilization would be by “deep decarbonization” primarily through “beneficial electrification” powered with “clean energy.” But how are these terms defined exactly?

Deep decarbonization: [4]

The primary strategy of the Paris Agreement for climate stabilization: an 80% reduction in the global use of fossil fuels to “decarbonize” the world’s energy systems by 2050.

Beneficial Electrification: [5]

Replacing consumers’ direct consumption of natural gas, gasoline and other fossil fuels with electricity (on the assumption that electricity generation will be dominated by “clean energy”).

Clean Energy:

Strictly interpreted, it’s just renewables. And more specifically, renewable electric generation. However, many variant definitions exist. For example, DOE includes nuclear, bioenergy and fuel cells as “clean.” And so-called clean coal also appears to qualify via “carbon capture & sequestration” (CCS) as does natural gas, if it is used as a feedstock to make electricity. Energy efficiency (e.g., “nega-watts”) is also deemed “clean energy” by some.

Think about it: transitioning to a global clean energy economy means there must be a transition from something. By process of elimination, about the only energy uses that are not “clean” are direct uses of fossil fuels. In addition to the direct use of natural gas, “not clean energy” also includes gasoline, propane, etc. Regardless, “clean energy” (i.e., electrification) is being put forth as the universal cure without disclosure of side effects. In essence, the “clean energy” future striven for by EERE (DOE’s Office of Energy Efficiency and Renewable Energy) exports environmental impacts to others, at high cost. Such non-climate impacts are ignored.

Whether it’s called regulatory capture, rent seeking or political capitalism, the result is the same: power accrues to the powerful. In addition to receiving taxpayer funding, advocates of “deep decarbonization” have profited greatly from climate change fear mongering for donations, as well as from the deep pockets of Tom Steyer and the like. And now these advocates have officially joined forces with the electric utility industry, as evidenced by the recent pact between NRDC and EEI (the Edison Electric Institute) that includes the pursuit of “efficient electrification of transportation, buildings, and facilities.” [21]

In large measure, EERE’s current activities should be viewed as inappropriate subsidies for deep decarbonization via electrification in contravention of President Trump’s proclamation to withdraw from the Paris Agreement. It is also contrary to President Trump’s Executive Order 13783.

Decarbonists in Denial of History

Against this backdrop of imperatives against fossil fuels, we have “Lessons from technology development for energy and sustainability” by Cambridge Professor Michael J. Kelly (H/T Friends of Science). Excerpts in italics with my bolds.

Abstract: There are lessons from recent history of technology introductions which should not be forgotten when considering alternative energy technologies for carbon dioxide emission reductions.

The growth of the ecological footprint of a human population about to increase from 7B now to 9B in 2050 raises serious concerns about how to live both more efficiently and with less permanent impacts on the finite world. One present focus is the future of our climate, where the level of concern has prompted actions across the world in mitigation of the emissions of CO2. An examination of successful and failed introductions of technology over the last 200 years generates several lessons that should be kept in mind as we proceed to 80% decarbonize the world economy by 2050. I will argue that all the actions taken together until now to reduce our emissions of carbon dioxide will not achieve a serious reduction, and in some cases, they will actually make matters worse. In practice, the scale and the different specific engineering challenges of the decarbonization project are without precedent in human history. This means that any new technology introductions need to be able to meet the huge implied capabilities. An altogether more sophisticated public debate is urgently needed on appropriate actions that (i) considers the full range of threats to humanity, and (ii) weighs more carefully both the upsides and downsides of taking any action, and of not taking that action.

Key Points

Only fossil fuels and nuclear fuels have the ability to power megacities in 2050, when over half of the then 9B people will live in them.

As the more severe predictions of climate change over the last 25 years are simply not happening, it makes no sense to deploy the more costly options for renewable energy.

Abandoned infrastructure projects (such as derelict wind and solar farms in the Mojave desert) remain, exposing their progenitors to mockery.

In this review, I want to concentrate on the measures taken to reduce the global emissions of carbon dioxide, and how the lessons from recent history of technology introductions can inform the decarbonization project. I want to review the last 20 years in particular and see what this portends for the next 40 years which will take us beyond 2050, which is the pivotal date in the public discourse. A Royal Commission into Environmental Pollution in 2000 advocated a 60% reduction of carbon dioxide emissions for the UK by 2050. 14 The date was fixed by the response to the enquiry as to when energy from nuclear fusion might supply 10% of the world’s energy needs. The answer was not before 2050, and we will need to get there without it. The revision from 60% to 80% reduction came from concern that developed countries should make allowances for developing countries using fossil fuel to escape poverty, i.e., they can take the same route as developed countries did to their relative affluence.

We have had over 20 years since the first Earth Summit in Rio de Janeiro in 1992, where 1990 emissions of carbon dioxide were agreed upon as the benchmark for reductions. Before discussing specific technologies, I want to establish the scale of the challenge in engineering, technology, and project delivery terms: this does include economics, societal attitudes, and the public discourse. I also discuss some engineering fundamentals. I will then summarize the many lessons of technology introductions, the preparation for other global challenges, and finally discuss a realistic way forward.

Scale

It is important to note the scale of the perceived problem. The entire history of modern civilization that started with the first industrial revolution has been enabled by the burning of fossil fuels. Our mobility, our health and lifestyles, our diet and its variety, our education system, particularly at the higher level, and our high culture would be quite impossible without fossil fuels, which have provided over 90% of the energy consumed on the earth since 1800. Today, geothermal, hydro- and nuclear power, together with the historic biofuels of wood and straw, account for about 15% of our energy use. 18 Even though it is 40 years since the first oil shocks kick-started the modern renewable energy developments (wind, solar, and cultivated biomass), we still get rather less than 1% of our world energy from these sources. Indeed the rate at which fossil fuels are growing is seven times that at which the low carbon energies are growing, as the ratio of fossil fuel energy used to total energy used has remained unchanged since 1990 at 85%. 19 The call to decarbonize the global economy by 80% by 2050 can now only be described as glib in my opinion, as the underlying analysis shows it is only possible if we wish to see large parts of the population die from starvation, destitution or violence in the absence of enough low-carbon energy to sustain society.

A further insight into the scale of present-day energy consumption is as follows. In Europe today, we use about 6–7 times as much energy per person per day as was used in 1800, and there are seven times as many people on earth now as then. 18 The energy of 1800 was expended on heating and lighting one room in a house, on producing hot water used in that same room, and on the purchase of local produce and manufactures. A breakdown of today’s energy usage in the UK and Europe 20 shows that this energy use persists today, but with lighting and central heating of whole buildings. In addition, Europeans today use as much energy per person per day on private motoring as they used in total in 1800. They use an equal amount on mobility through public transport: trains, ships, and aeroplanes. Three times the personal consumption of 1800 is used in the manufacture and logistics of things we consume or use, such as food or manufactured goods.
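The per-person breakdown above can be checked with a little arithmetic. The Python sketch below expresses each stated component in units of the total per-person use of 1800. As an assumption of this illustration, not a figure from the source, it infers the household share (heating, lighting and hot water) by subtracting the stated components from the 6–7× total.

```python
# Per-person daily energy use in Europe today, expressed in units of the
# TOTAL per-person daily use in 1800 (so 1800 = 1.0). The three component
# figures are the ones stated in the text.
COMPONENTS_TODAY = {
    "private motoring": 1.0,           # as much as the whole of 1800 use
    "public transport": 1.0,           # "an equal amount": trains, ships, aeroplanes
    "manufacture and logistics": 3.0,  # three times the 1800 personal total
}


def implied_household_use(total_multiple):
    """Household heating, lighting and hot water, inferred by subtraction.

    This residual is an illustrative inference, not a number given in the
    source: it assumes the listed components plus household use make up
    the whole 6-7x total.
    """
    return total_multiple - sum(COMPONENTS_TODAY.values())


for total in (6.0, 7.0):
    print(f"total {total:.0f}x -> household about {implied_household_use(total):.0f}x")
```

On these figures the household share, which now heats and lights whole buildings rather than one room, comes out at roughly one to two times the entire per-person budget of 1800.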

Over the next 20 years, the World Bank estimates that the middle class will rise from 3B to 5B, on the basis of which BP estimates a further 40% increase in global energy demand, still to be met mainly by fossil fuels. The graph in Fig. 1(a) is on too coarse a scale to show it, but the total installed renewable energy capacity as of today is equal to the combined capacity of the nuclear power plants shut down in Japan and scheduled to close in Germany, which makes the challenge of carbon-free energy impossible to meet.
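To put BP’s projected 40% rise in demand over 20 years on an annual footing, one can compute the constant compound growth rate it implies. This is a minimal Python sketch that assumes smooth compound growth, an idealization the source does not claim; real demand grows unevenly.

```python
def implied_annual_growth(total_increase, years):
    """Constant annual rate g such that (1 + g) ** years == 1 + total_increase.

    Assumes smooth compound growth, an idealization for illustration only.
    """
    if years <= 0:
        raise ValueError("years must be positive")
    return (1.0 + total_increase) ** (1.0 / years) - 1.0


# BP's estimate as quoted in the text: +40% global energy demand over 20 years.
rate = implied_annual_growth(0.40, 20)
print(f"implied growth: {rate:.2%} per year")
```

The result is roughly 1.7% per year, which sounds modest but compounds to the 40% total on top of an energy system already about 85% fossil-fuelled.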

Energy return on investment (EROI)

The debate over decarbonization has focussed on technical feasibility and economics. There is one emerging measure that comes closely back to the engineering and the thermodynamics of energy production. The energy return on (energy) investment is the useful energy produced by a particular power plant divided by the energy needed to build, operate, maintain, and decommission the plant. The concept owes its origin to animal ecology: a cheetah must get more energy from consuming its prey than it expends on catching it; otherwise it will die. If the animal is also to breed and nurture the next generation, then the ratio of energy obtained to energy expended has to be higher, depending on the energy spent on these other activities. Weißbach et al. 23 have analysed the EROI for a number of forms of energy production, and their principal conclusion is that nuclear, hydro-, and gas- and coal-fired power stations have an EROI much greater than that of wind, solar photovoltaic (PV), concentrated solar power in a desert, or cultivated biomass: see Fig. 2. In human terms, with an EROI of 1 we can mine fuel and look at it: we have no energy left over. To get a society that can feed itself and provide a basic educational system, we need the EROI of our base-load fuel to be in excess of 5, and for a society with international travel and high culture we need an EROI greater than 10. The new renewable energies do not reach this last level when the extra energy costs of overcoming intermittency are added in. In energy terms, the current generation of renewable energy technologies alone will not enable a civilized modern society to continue!
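The EROI definition and the thresholds quoted above can be expressed as a short Python sketch. The ratio follows the text’s definition. The optional intermittency term (extra energy spent on storage or back-up, simply added to the investment) and the plant figures in the usage lines are hypothetical assumptions of this illustration, not data from Weißbach et al.

```python
def eroi(useful_output, build, operate, maintain, decommission,
         intermittency_overhead=0.0):
    """Energy return on (energy) investment.

    Useful energy produced over the plant's life divided by the energy needed
    to build, operate, maintain and decommission it. The optional
    intermittency_overhead (energy for storage or back-up) is a simplifying
    assumption: it is just added to the energy invested. All arguments must
    use the same energy unit.
    """
    invested = build + operate + maintain + decommission + intermittency_overhead
    if invested <= 0:
        raise ValueError("energy invested must be positive")
    return useful_output / invested


def societal_level(ratio):
    """Map an EROI to the rough thresholds quoted in the text (1, 5 and 10)."""
    if ratio <= 1:
        return "no surplus: we can mine the fuel and look at it"
    if ratio <= 5:
        return "some surplus, but not enough to feed society and educate it"
    if ratio <= 10:
        return "enough to feed society and provide basic education"
    return "enough for international travel and high culture"


# Hypothetical plant, arbitrary energy units (illustrative numbers only).
base = eroi(1000, build=60, operate=20, maintain=10, decommission=10)
buffered = eroi(1000, 60, 20, 10, 10, intermittency_overhead=100)
print(base, societal_level(base))          # 10.0, basic-education level
print(buffered, societal_level(buffered))  # 5.0, below that level
```

In this made-up case, charging the energy cost of intermittency to the plant halves the ratio and drops it a band, which is the shape of the argument the text makes about renewables; the measured EROI values themselves are in Weißbach et al., not here.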

Successful new technologies improve the lot of mankind

I have already referred to the use of Watt’s steam engine as a source of energy to improve harvesting, greatly aiding agricultural productivity. Notice too that the windmills of Europe stopped turning: the new source of energy was compact, movable, reliable, available when needed, and relatively low-maintenance. This differential has widened ever since, and modern windmills do not greatly close the gap in practical utility or cost. Later in the 19th century, electricity from steam turbines became available to lighten the darkness, power an increasing range of machinery, and increase the productivity of mankind to the extent seen today when one contrasts an industrial city with a remote off-grid rural community. It is this energy that has underpinned the ability to improve sanitation, transport goods, and allow modern communications and advanced healthcare. During the 20th century, jet engines greatly reduced the time taken to travel between two distant places, and semiconductor technologies eliminated that time through virtual presence anywhere, anytime. Genetic engineering technologies have greatly sped up plant breeding, and the recent green revolution means that the world’s larger population is now better fed than ever before. The remaining areas of starvation are universally associated with war and/or bad governance interfering with supply chains.

R&D in new technologies is a good use of public money

Really new technologies often span several existing sectors of private industry, which would benefit or lose out if the new technology were introduced: coachmen in the age of buses and trains, carrier pigeons in the age of the telegraph. It is difficult to imagine electronics rising to its current pervasive state if governments around the world had not supported relevant R&D in the early stages. Today the global R&D budget exceeds $1T, and the public purse contributes much of that. 33 In many advanced countries, there is significant public support of private R&D in the perceived overall public interest, an interest that is not the particular focus of any one company in the private sector. The analysis of the origin of Apple’s technologies is an exemplary case of the private capture of public investment. 34

In the last 40 years, public-good R&D has continued to be undertaken in many countries on new energy technologies, giving rise to the first generation of renewables. The support extends well down the development channel as well as covering background research. This is because the private risk of initial small-scale deployment to test the effectiveness of a new technology is often too high for a single company or consortium to bear. The USA has the most effective ecosystem of innovation in the world, eclipsed only for a brief period in the 1980s by Japan.

Premature roll-out of immature/uneconomic technologies is a recipe for failure

The virtuous role of government funding in R&D is to be contrasted with the litany of recent failures of subsidies supporting the premature roll-out of technologies that are uneconomic and/or immature.

At its prime, the Carrizo Plain plant (S. California) was by far the largest photovoltaic array in the world, with 100,000 1′ × 4′ photovoltaic panels generating 5.2 megawatts at its peak. The plant was originally constructed by ARCO in 1983 and was dismantled in the late 1990s. The used panels are still being resold throughout the world.

In the late 1980s, large-scale wind and solar farms were installed in the Mojave desert. One can see the square kilometers of green industrial dereliction by googling the phrases ‘abandoned wind farm’ and ‘abandoned solar farm’, respectively. The useful energy generated by these farms was insufficient to pay the interest on capital and to maintain production. The companies have gone bankrupt, and there is no one to decommission the infrastructure and return the sites to their pristine condition. The remains are there to be mocked as an infrastructure project gone wrong, and they will remain for decades, a modern version of the hubris of Ozymandias or of the builders of the Tower of Babel. It is important to note that some (but not all 36) second- and third-generation wind and solar farms in the Mojave desert have fared and are faring better, 37 but the lesson remains that premature roll-out of unready technology is unwise.

The primary problem is the use of public money, i.e., subsidies, to encourage the roll-out. Subsidies have a plethora of unintended consequences in the energy infrastructure sector. During the economic crisis of 2008/9 many of the subsidies were reduced or withdrawn in the USA, and many small companies went bankrupt. This has continued with subsidy reductions in the UK, Germany, Spain and elsewhere, with further bankruptcies in the alternative energy sector. 38 Indeed, RENIXX, an index of the stock value of alternative energy companies, lost 80% of its value between 2008 and 2013, although it has recovered a little of that fall more recently. It is certainly not the place for pension fund investments, although if the market were mature and stable, a 40-year programme to renew the global energy infrastructure would be just the place for pension funds. 39 The reason so far for these failures is that the technologies are uneconomic over their lifecycles and immature in terms of the energy return on their investment (see the section “Energy return on investment (EROI)” above). In China, public subsidies continue, with solar panels being sold at about a 30% loss on the cost of production. 40 That is a political strategy at work rather than an industrial strategy. In democracies, there is unlikely to be a multiparty, multigovernment consensus lasting for the multidecadal timescales implied by major infrastructure change.

There is an unintended and unwanted social consequence of the roll-out of these new technologies. There is ample evidence in the UK of increasing fuel poverty (i.e., households spending over 10% of disposable income keeping warm in winter) in the regions of wind farm deployment, where higher electricity bills are needed to cover the rent paid to (usually already rich) landowners. This is a direct reversal of the process whereby cheap energy over the last century has lifted a significant fraction of the world’s poor out of poverty. 41 Renewable energy supplements are viewed as socially divisive.

Technology breakthroughs are not pre-programmable

When public commentators such as Thomas L Friedman enter the debate about energy technologies, they urge more research to produce a breakthrough energy technology, in his case, a ‘plentiful supply of clean green cheap electrons’. 43 It is salutary to realize that all but two of the energy technologies used today have counterparts in biblical times, the only newcomers being nuclear energy and solar photovoltaics. The delivery of coal, gas, wind, water, and solar energy may be quite different today, but the underlying principles of operation have not changed. Since nuclear fusion was first demonstrated, there has been a 60-year effort to tame it as a source of electrical energy, but so far without success. One can ask the experts whether they might have made more progress with more money, but the challenges have remained profound. Even if there were a breakthrough tomorrow in the basic processes, it would still take of order 40 years (rather than 20, in my opinion) to complete the further engineering and technology work and deploy fusion reactors able to provide (say) 10% of the world’s electricity. We must get to 2050 without it.

Finance is limited, so actions at scale must be prioritized

The sum involved in renewing the energy infrastructure in the UK is about £200B over the next decade. 46 A large element of this cost is to make good the lack of infrastructure investment over the 20 years since a privatized energy market was introduced. In addition, the large-scale modification of the grid to cope with multiple renewable energy inputs has to be included. There remains a dispute in the public domain as to where these costs lie. The grid as we know it in major industrial countries has evolved over 100 years on the basis of relatively few large sources of energy connected to the grid, which circulates power to substations from which it is transmitted to individual end-users in a broadcast mode. With multiple small and independent sources of energy from wind and solar installations, the grid topology has to change to cope with this very different quality of energy. The conventional suppliers say that they should not have to cover these extra costs, which should instead be booked against the renewable energies in the overall balance sheet of costs. A similar book-keeping problem arises with the costs of back-up for intermittent renewable energies. The combined cycle gas turbine generators that have delivered base-load electricity to the grid in Germany are now being asked to act in back-up mode, with frequent acceleration and deceleration of the turbines, a duty for which they were not designed and which shortens their in-service lifetime. 47 The cost analyses of future energy are bedevilled by the assignment of these consequential costs. In practice, as consumers, we buy the energy provided by electricity, blind to the particular way it is produced.

The scale of the costs of these energy bills is such that one cannot make mistakes in infrastructure investment decisions. A wrong investment is a missed opportunity on a large scale.

Finally, it is as well to remember that there are only ever two sources of payment, the consumer and the taxpayer, and the only issue at stake between them is the directness with which costs are recovered.

The way forward

It is surely time to review the current direction of the decarbonization project which can be assumed to start in about 1990, the reference point from which carbon dioxide emission reductions are measured. No serious inroads have been made into the lion’s share of energy that is fossil fuel based. Some moves represent total madness. The closure of all but one of the aluminium smelters that used gas-fired electricity in the UK (because of rising electricity costs from the green tariffs that are over and above any global background fossil fuel energy costs) reduces our nation’s carbon dioxide emissions. 62 However, the aluminium is now imported from China where it is made with more primitive coal-based sources of energy, making the global problem of emissions worse! While the UK prides itself in reducing indigenous carbon dioxide emissions by 20% since 1990, the attribution of carbon emissions by end use shows a 20% increase over the same period. 63

It is also clear that we must de-risk all energy infrastructure projects over the next two decades. While the level of uncertainty remains high, the ‘insurance policy’ justification for urgent large-scale intervention is untenable: we do not pay premiums if paying them would bankrupt us. Certain things we do not insure against, such as a potential future mega-tsunami, 64 or a supervolcano, 65 or indeed a meteor strike, even though there have been over 20 of these since 2000 with the local power of the Hiroshima bomb! 66 Using a significant fraction of global GDP to possibly capture the benefits of a possibly less troublesome future climate leaves more urgent actions undone.

Two important points remain. The first is that there is no alternative to business as usual carrying on, with one caveat expressed in the following paragraph. Since energy use has a cost, it is normal business practice to minimize energy use, by increasing energy efficiency (see especially the recent improvement in automobile performance), 67 using less resource material and more effective recycling. These drivers have become more intense in recent years, but they were always there for a business trying to remain competitive.

The second is that, over the next two decades, the single place where the greatest impact on carbon dioxide emissions can be achieved is in the area of personal behaviour. Its potential dwarfs that of new technology interventions. Within the EU over the last 40 years there has been a notable change in public attitudes and behaviour in such diverse arenas as drinking and driving, smoking in confined public spaces, and driving without a seatbelt. If society were to regard the profligate consumption of any materials and resources, including any forms of fuel and electricity, as deeply antisocial, it has been estimated that we could live something like our present standard of living on half the energy we consume today in the developed world. 68 This would mean fewer miles travelled, fewer material possessions, shorter supply chains, and less use of the internet. While there is no public appetite to follow this path, the short-term technology fix is no panacea.

Conclusions

Over the last 200 years, fossil fuels have provided the route out of grinding poverty for many people in the world (but still less than half of all people), and Fig. 1 shows that this trend is certain to continue for at least the next 20 years, based on the technologies of scale that are available today. A rapid decarbonization is simply impossible over the next 20 years unless the trend of a growing number of people succeeding in improving their lot is stalled by rich and middle-class people downgrading their own standard of living. The current backlash against subsidies for renewable energy systems in the UK, EU and USA is a sign that all is not well with current renewable energy systems in meeting the aspirations of humanity.

Finally, humanity is owed a serious investigation of how we have gone so far with the decarbonization project without a serious challenge in terms of engineering reality. Have the engineers been supine and lacking in courage to challenge the orthodoxy? Or have their warnings been too gentle and dismissed or not heard? Science and politicians can take too much comfort from undoubted engineering successes over the last 200 years. When the sums at stake are on the scale of 1–10% of the world’s GDP, this is a serious business.

See also: Climateers Tilting at Windmills Updated

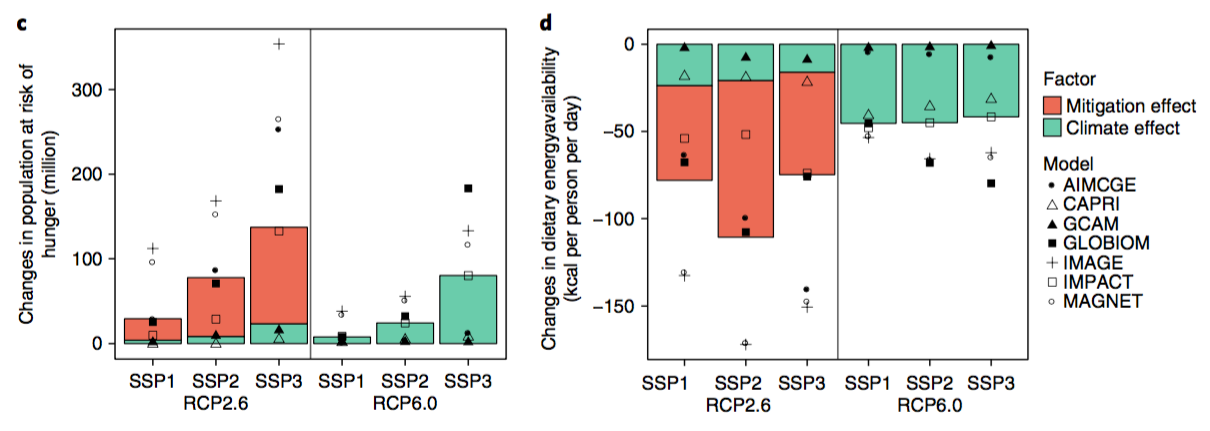

Figure 12: Figure 9 with Y-scale expanded to 100% and thermal generation included, illustrating the magnitude of the problem the G20 countries still face in decarbonizing their energy sectors.

H/T GWPF BENEFITS OF GLOBAL WARMING: RECORD HARVESTS REPORTED IN NUMEROUS COUNTRIES
