Why the Left Coast is Still Burning

Update September 13, 2020: This reprint of a post from two years ago shows that nothing has changed, except for the worse.

It is often said that truth is the first casualty in the fog of war. That is especially true of the war against fossil fuels and smoke from wildfires. The forests are burning in California, Oregon and Washington, all of them steeped in liberal, progressive and post-modern ideology. There are human reasons that fires are out of control in those places, and CO2 emissions are not among them. As we shall see, Zinke is right and Brown is wrong. Here are some truths the media are not telling you in their drive to blame global warming/climate change. Text below is excerpted from sources linked at the end.

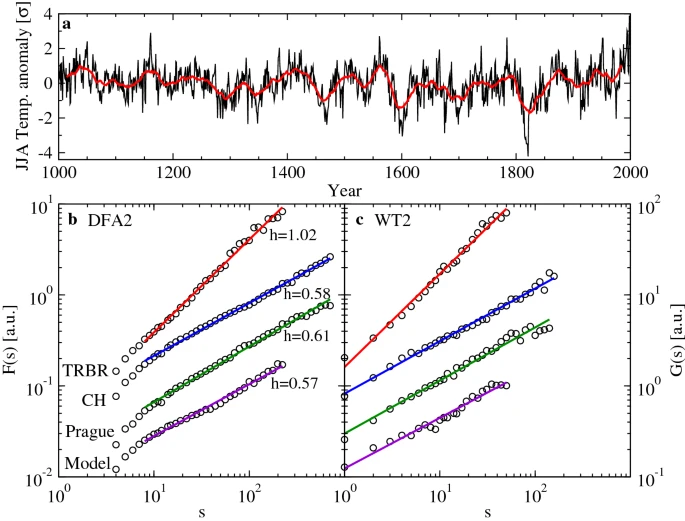

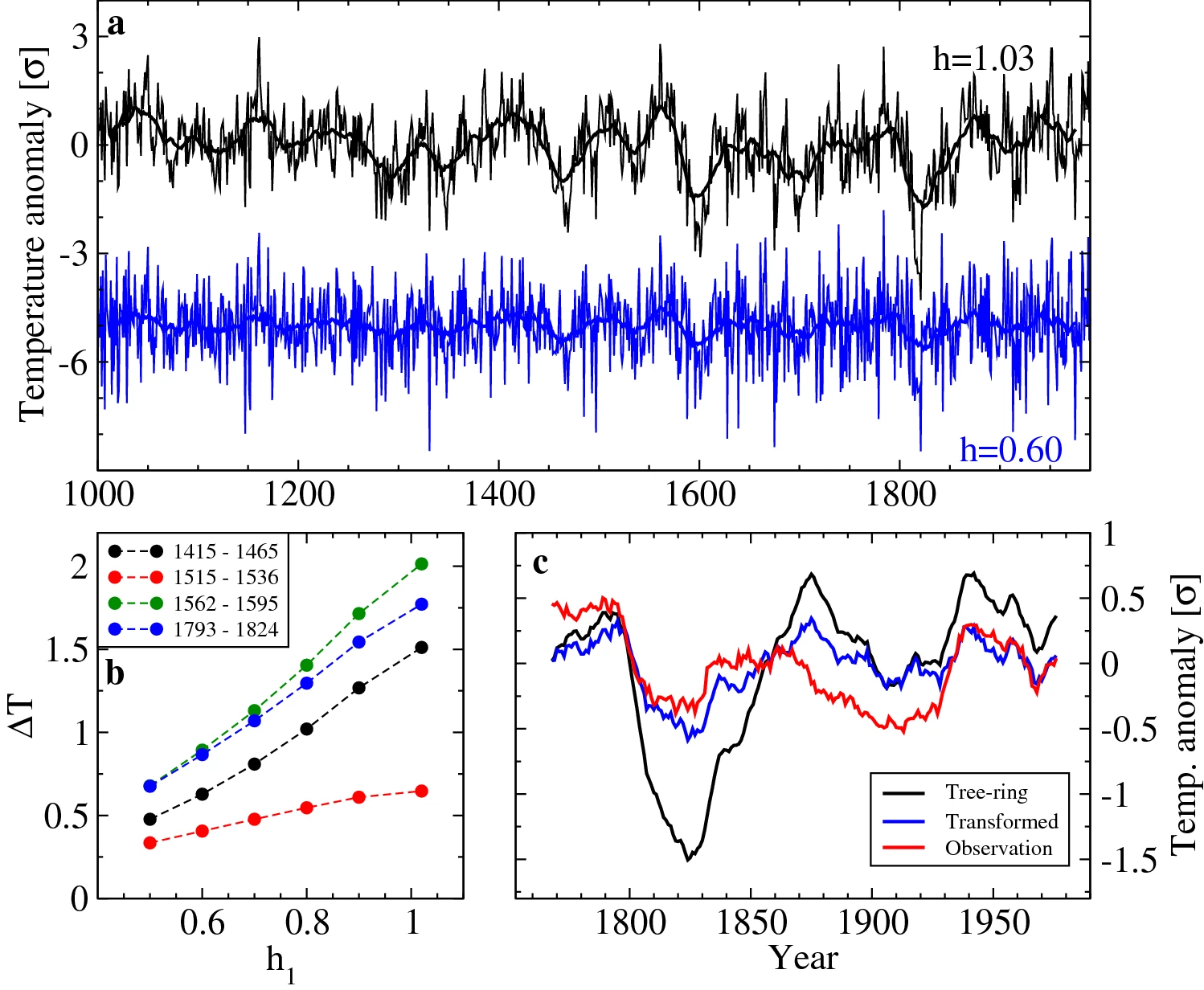

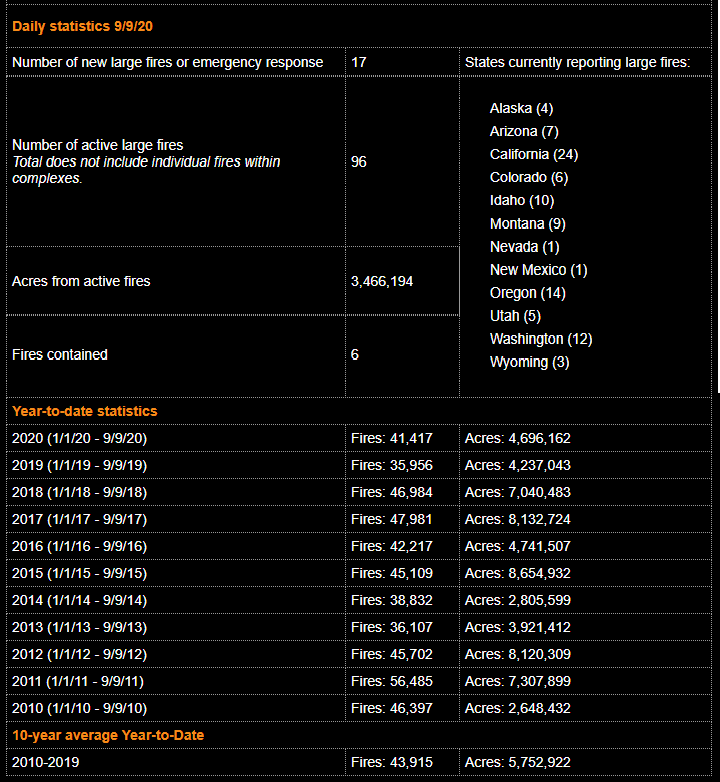

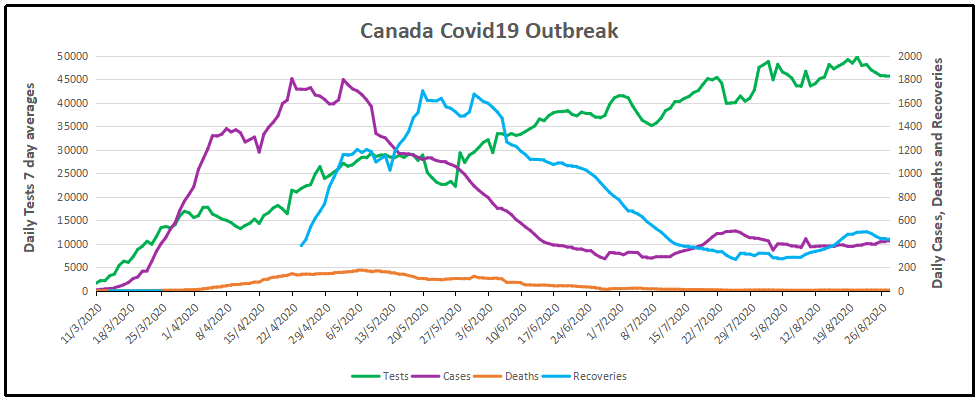

1. The World and the US are not burning.

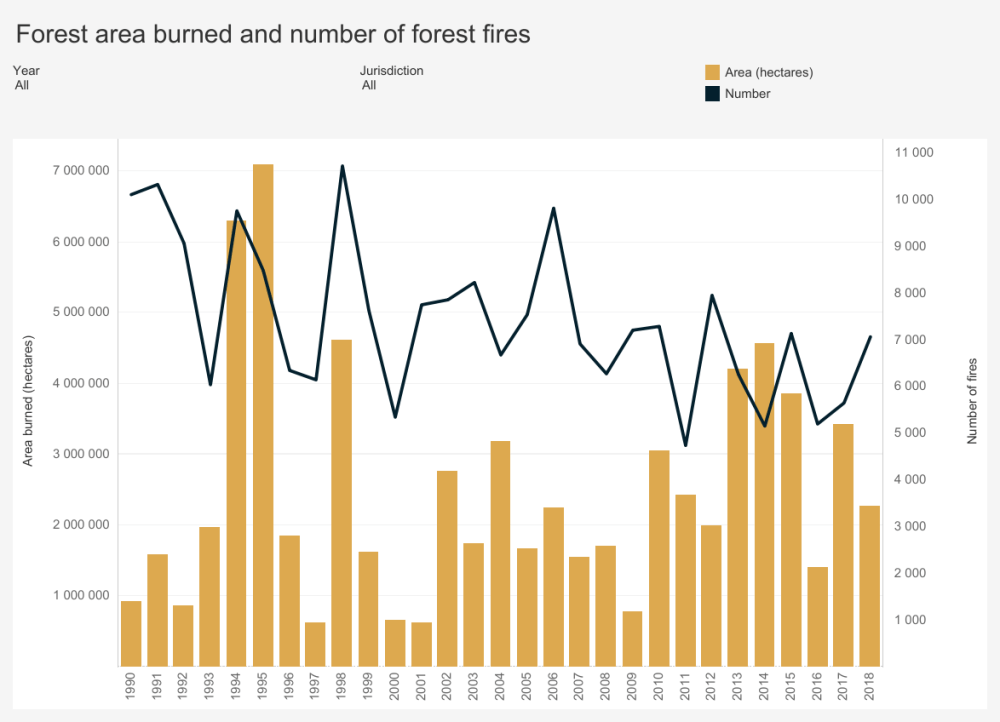

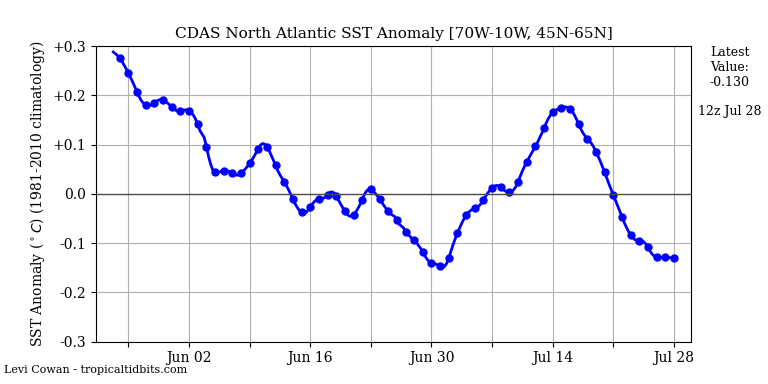

The geographic extent of this summer’s forest fires won’t come close to the aggregate record for the U.S. Far from it. Yes, there are some terrible fires now burning in California, Oregon, and elsewhere, and the total burnt area this summer in the U.S. is likely to exceed the 2017 total. But as the chart above shows, the burnt area in 2017 was less than 20% of the record set way back in 1930. The same is true of the global burnt area, which has declined over many decades.

In fact, this 2006 paper reported the following:

“Analysis of charcoal records in sediments [31] and isotope-ratio records in ice cores [32] suggest that global biomass burning during the past century has been lower than at any time in the past 2000 years. Although the magnitude of the actual differences between pre-industrial and current biomass burning rates may not be as pronounced as suggested by those studies [33], modelling approaches agree with a general decrease of global fire activity at least in past centuries [34]. In spite of this, fire is often quoted as an increasing issue around the globe [11,26–29].”

People have a tendency to exaggerate the significance of current events. Perhaps the youthful can be forgiven for thinking hot summers are a new phenomenon. Incredibly, more “seasoned” folks are often subject to the same fallacies. The fires in California have so impressed climate alarmists that many of them truly believe global warming is the cause of forest fires in recent years, including the confused bureaucrats at Cal Fire, the state’s firefighting agency. Of course, the fires have given fresh fuel to self-interested climate activists and pressure groups, an opportunity for greater exaggeration of an ongoing scare story.

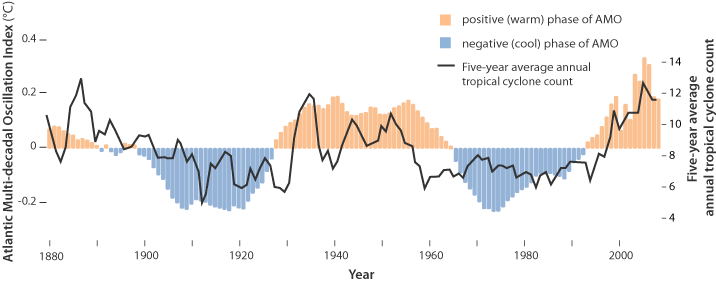

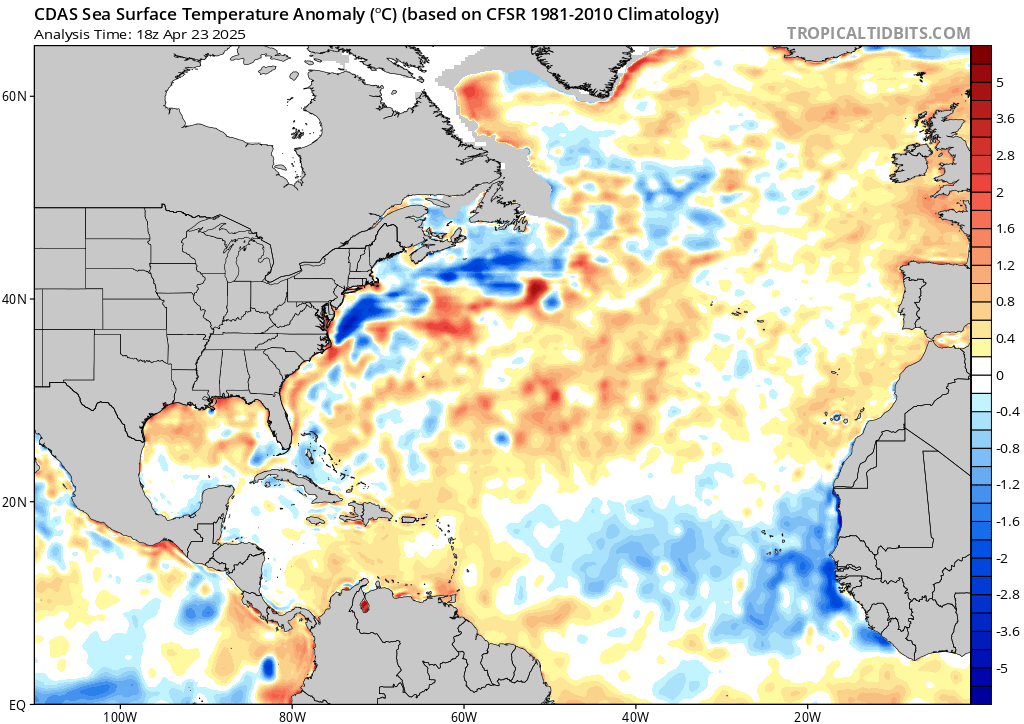

This year, however, and not for the first time, a high-pressure system has been parked over the West, bringing southern winds up the coast along with warmer waters from the south, keeping things warm and dry inland. It’s just weather, though a few arsonists and careless individuals always seem to contribute to the conflagrations. Beyond all that, the impact of a warmer climate on the tendency for biomass to burn is considered ambiguous for realistic climate scenarios.

2. Public forests are no longer managed due to litigation.

According to a 2014 white paper titled ‘Twenty Years of Forest Service Land Management Litigation’, by Amanda M.A. Miner, Robert W. Malmsheimer, and Denise M. Keele: “This study provides a comprehensive analysis of USDA Forest Service litigation from 1989 to 2008. Using a census and improved analyses, we document the final outcome of the 1125 land management cases filed in federal court. The Forest Service won 53.8% of these cases, lost 23.3%, and settled 22.9%. It won 64.0% of the 669 cases decided by a judge based on cases’ merits. The agency was more likely to lose and settle cases during the last six years; the number of cases initiated during this time varied greatly. The Pacific Northwest region along with the Ninth Circuit Court of Appeals had the most frequent occurrence of cases. Litigants generally challenged vegetative management (e.g. logging) projects, most often by alleging violations of the National Environmental Policy Act and the National Forest Management Act. The results document the continued influence of the legal system on national forest management and describe the complexity of this litigation.”
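To give a feel for the scale behind those percentages, here is a minimal Python sketch that converts them into approximate case counts. The percentages and totals are the ones quoted above; the rounded counts are derived here for illustration and are not figures taken from the paper, and the variable names are hypothetical.

```python
# Percentages and totals as quoted from the abstract above.
total_cases = 1125
outcome_share = {"won": 0.538, "lost": 0.233, "settled": 0.229}

# Approximate counts implied by the percentages (derived, not from the paper).
approx_counts = {k: round(total_cases * v) for k, v in outcome_share.items()}

# Cases decided by a judge on the merits, and the agency's quoted win rate there.
merits_cases = 669
approx_merits_wins = round(merits_cases * 0.640)

for outcome, count in approx_counts.items():
    print(f"{outcome}: about {count} cases")
print(f"won on the merits: about {approx_merits_wins} of {merits_cases}")
```

On these numbers, roughly 520 of the 1125 cases ended in a loss or a settlement for the agency.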

There is abundant evidence that when a forest project proposes vegetative management as a pretext for a logging operation, salvage or otherwise, litigation is likely to ensue. In addition to NEPA, the USFS uses the Property Clause to address any potential removal of ‘forest products’. Meanwhile, the USFS currently spends more than 50% of its total budget on wildfire suppression alone, about $1.8 billion annually, while there is scant spending for wildfire prevention.

3. Mega fires are the unnatural result of fire suppression.

And what of the “mega-fires” burning in the West, like the huge Mendocino Complex Fire and last year’s Thomas Fire? Unfortunately, many decades of fire suppression measures — prohibitions on logging, grazing, and controlled burns — have left the forests with too much dead wood and debris, especially on public lands. From the last link:

“Oregon, like much of the western U.S., was ravaged by massive wildfires in the 1930s during the Dust Bowl drought. Megafires were largely contained due to logging and policies to actively manage forests, but there’s been an increasing trend since the 1980s of larger fires.

Active management of the forests and logging kept fires at bay for decades, but that largely ended in the 1980s over concerns about old growth trees and the northern spotted owl. Lawsuits from environmental groups hamstrung logging and government planners cut back on thinning trees and road maintenance.

[Bob] Zybach [a forester] said Native Americans used controlled burns to manage the landscape in Oregon, Washington and northern California for thousands of years. Tribes would burn up to 1 million acres a year on the west coast to prime the land for hunting and grazing, Zybach’s research has shown.

‘The Indians had lots of big fires, but they were controlled,’ Zybach said. ‘It’s the lack of Indian burning, the lack of grazing’ and other active management techniques that caused fires to become more destructive in the 19th and early 20th centuries before logging operations and forest management techniques got fires under control in the mid-20th Century.”

4. Bad federal forest administration started in the 1990s.

Bob Zybach feels like a broken record. Decades ago he warned government officials that allowing Oregon’s forests to grow unchecked by proper management would result in catastrophic wildfires.

While some want to blame global warming for the uptick in catastrophic wildfires, Zybach said a change in forest management policies is the main reason Americans are seeing a return to more intense fires, particularly in the Pacific Northwest and California where millions of acres of protected forests stand.

“We knew exactly what would happen if we just walked away,” Zybach, an experienced forester with a PhD in environmental science, told The Daily Caller News Foundation.

Zybach spent two decades as a reforestation contractor before heading to graduate school in the 1990s. Then the Clinton administration in 1994 introduced its plan to protect old growth trees and spotted owls by strictly limiting logging. Less logging also meant government foresters weren’t doing as much active management of forests — thinnings, prescribed burns and other activities to reduce wildfire risk.

Zybach told Evergreen magazine that year the Clinton administration’s plan for “naturally functioning ecosystems” free of human interference ignored history and would fuel “wildfires reminiscent of the Tillamook burn, the 1910 fires and the Yellowstone fire.”

Between 1952 and 1987, western Oregon saw only one major fire above 10,000 acres. The region’s relatively fire-free streak ended with the Silver Complex Fire of 1987 that burned more than 100,000 acres in the Kalmiopsis Wilderness area, torching rare plants and trees the federal government set aside to protect from human activities. The area has burned several more times since the 1980s.

“Mostly fuels were removed through logging, active management — which they stopped — and grazing,” Zybach said in an interview. “You take away logging, grazing and maintenance, and you get firebombs.”

Now, Oregonians are dealing with 13 wildfires engulfing 185,000 acres. California is battling nine fires scorching more than 577,000 acres, mostly in the northern forested parts of the state managed by federal agencies.

The Mendocino Complex Fire quickly spread to become the largest wildfire in California since the 1930s, engulfing more than 283,000 acres. The previous wildfire record was set by 2017’s Thomas Fire that scorched 281,893 acres in Southern California.

While bad fires still happen on state and private lands, most of the massive blazes happen on or around lands managed by the U.S. Forest Service and other federal agencies, Zybach said. Poor management has turned western forests into “slow-motion time bombs,” he said.

A feller buncher removing small trees that act as fuel ladders and transmit fire into the forest canopy.

5. True environmentalism is not nature love, but nature management.

While wildfires do happen across the country, poor management by western states has served to turn entire regions into tinderboxes. That is the course California and Oregon have taken by letting nature play out so close to civilization.

Many in heartland America and along the Eastern Seaboard often see logging and firelines if they travel to a rural area. This is part and parcel of life outside of the city, where everyone knows that because of a few minor eyesores their houses and communities are safer from the primal fury of wildfires.

In other words, leaving the forests to “nature” and protecting the endangered Spotted Owl created denser forests (300-400 trees per acre rather than 50-80), with more fuel from the 129 million diseased and dead trees, and this fuel feeds more intense and destructive fires. Yet California spends more than ten times as much money on electric vehicle subsidies ($335 million) as on reducing fuel in a mere 60,000 of 33 million acres of forests ($30 million).
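As a rough check on the spending comparison above, the arithmetic can be sketched in a few lines of Python. The dollar and acreage figures are the ones quoted in the text; the variable names and the per-acre cost are illustrative derivations, not figures from the sources.

```python
# Figures as quoted in the text above; variable names are illustrative.
ev_subsidies_usd = 335e6      # electric vehicle subsidies
fuel_reduction_usd = 30e6     # spending on forest fuel reduction
treated_acres = 60_000        # acres receiving fuel reduction
forest_acres = 33e6           # total forest acres cited

spending_ratio = ev_subsidies_usd / fuel_reduction_usd
treated_share = treated_acres / forest_acres
cost_per_treated_acre = fuel_reduction_usd / treated_acres

print(f"EV subsidies vs. fuel reduction: {spending_ratio:.1f}x")
print(f"Share of forest acres treated: {treated_share:.2%}")
print(f"Fuel reduction cost per treated acre: ${cost_per_treated_acre:,.0f}")
```

On these numbers the ratio works out to about 11 to 1, and the treated acreage comes to under 0.2% of the total.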

Rancher Ross Frank worries that funding to fight fires in Western communities like Chumstick, Wash., has crowded out important land management work. Rowan Moore Gerety/Northwest Public Radio

Once again, global warming “science” is a camouflage for political ideology and gratifying myths about nature and human interactions with it. On the one hand, progressives seek “crises” that justify more government regulation and intrusion that limit citizen autonomy and increase government power. On the other, well-nourished moderns protected by technology from nature’s cruel indifference to all life can afford to indulge myths that give them psychic gratification at little cost to their daily lives.

As usual, bad cultural ideas lie behind these policies and attitudes. Most important is the modern fantasy that before civilization human beings lived in harmony and balance with nature. The rise of cities and agriculture began the rupture with the environment, “disenchanting” nature and reducing it to mere resources to be exploited for profit. In the early 19th century, the growth of science that led to the industrial revolution inspired the Romantic movement to contrast industrialism’s “Satanic mills” and the “shades of the prison-house,” with a superior natural world and its “beauteous forms.” In an increasingly secular age, nature now became the Garden of Eden, and technology and science the signs of the fall that has banished us from the paradise enjoyed by humanity before civilization.

The untouched nature glorified by romantic environmentalism, then, is not our home. Ever since the cave men, humans have altered nature to make it more conducive to human survival and flourishing. After the retreat of the ice sheets changed the environment and animal species on which people had depended for food, humans in at least four different regions of the world independently invented agriculture to better manage the food supply. Nor did the American Indians, for example, live “lightly on the land” in a pristine “forest primeval.” They used fire to shape their environment for their own benefit. They burned forests to clear land for cultivation, to create pathways to control the migration of bison and other game, and to promote the growth of trees more useful for them.

Remaining trees and vegetation on the forest floor are more vigorous after removal of small trees for fuels reduction.

And today we continue to improve cultivation techniques and foods to make them more reliable, abundant, and nutritious, not to mention more various and safe. We have been so successful at managing our food supply that today one person out of ten provides food that used to require nine out of ten, obesity has become the plague of poverty, and famines result from political dysfunction rather than nature.

That’s why untouched nature, the wild forests filled with predators, has not been our home. The cultivated nature improved by our creative minds has. True environmentalism is not nature love, but nature management: applying skill and technique to make nature more useful for humans, at the same time conserving resources so that those who come after us will be able to survive. Managing resources and exploiting them for our benefit without destroying them is how we should approach the natural world. We should not squander resources or degrade them, not because of nature, but because when we do so, we are endangering the well-being of ourselves and future generations.

Conclusion

The annual burnt area from wildfires has declined over the past ninety years both in the U.S. and globally. Even this year’s wildfires are unlikely to come close to the average burn extent of the 1930s. The large wildfires this year are due to a combination of decades of poor forest management along with a weather pattern that has trapped warm, dry air over the West. The contention that global warming has played a causal role in the pattern is balderdash, but that explanation apparently sounds plausible to the uninformed, and it is typical of the propaganda put forward by climate change interests.

Sources:

https://www.frontpagemag.com/fpm/271044/junk-science-and-leftist-folklore-have-set-bruce-thornton

https://sacredcowchips.net/tag/bob-zybach/

https://www.horsetalk.co.nz/2017/10/13/ecological-imbalance-wildfires-us-rangelands/

http://dailycaller.com/2018/08/08/mismanagement-forests-time-bombs/

Footnote: So how do you want your forest fires, some small ones now or mega fires later?

The paper is