Exxon Shareholders Reject Activists May 25

Update May 26 below

May 25, 2016: A shareholder proposition from global warming alarmists was soundly defeated today at the ExxonMobil Annual Meeting. Activists took heart that 38% of shares were voted in favor, a larger share than previous such proposals received. It appears that much of that support came from the Norwegian sovereign wealth fund, which is a story in its own right.

The world’s largest sovereign wealth fund announced Tuesday that it would back shareholder resolutions requiring Chevron and ExxonMobil to report on how climate change could threaten assets during extreme weather events or put revenues at risk due to government efforts to transition from fossil fuels to renewable sources.

The company that manages Norway’s $872 billion fund said the boards of directors for the oil giants should better anticipate those risks — as well as any upsides — and report on them to shareholders. (here)

Anyone visiting Norway (as I did last year) will recognize from the prices of everything and the obvious signs of conspicuous consumption that modern Norway is a Petro-state. I don’t have Tesla sales statistics handy, but just walking around Oslo, you can clearly see more Teslas per capita than anywhere outside of Hollywood. (Huge subsidies and free recharging help.)

Now the Norwegians deserve credit for putting their enormous profits from North Sea oil into a fund for future generations. Don’t see that in Saudi Arabia or Iran, or most other Petro-states. But their acceptance of CO2 warming dogma is as jarring as the Rockefeller Foundation funding anti-petroleum activists. Why all this guilt over energy resources?

Other major investors promoting the action included: The Church Commissioners for England, Trustee of New York State Common Retirement Fund, Amundi, AXA Investment Management, BNP Paribas, CalPERS, and Legal & General Investment Management.

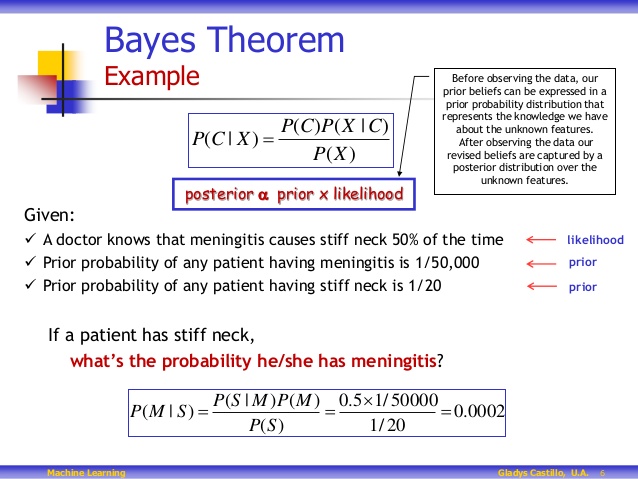

The proposition itself is based upon a flimsy set of suppositions, as explained here: https://rclutz.wordpress.com/2016/05/01/behind-the-alarmist-scene/

Update May 26

Some sources give more insight into the activism employed:

From CNBC

Earlier this month, a letter signed by 1,000 professors from over 40 global universities, including Oxford and Ivy League colleges like Harvard, was sent by Positive+Investment — a campaign group launched by Cambridge students — to Exxon and Chevron’s top 20 shareholders urging they pass the resolutions.

But institutional shareholders including Norway’s $872 billion sovereign wealth fund, the Church of England, and the U.S.’s largest state pension fund are already throwing their weight behind the climate cause.

Norges Bank Investment Management (NBIM) publicly disclosed that it plans to vote in favor of climate impact assessment reports for both Chevron and Exxon, telling reporters earlier this month that it would relentlessly push the companies to be more open about their climate change strategies, even if the proposals didn’t pass at this year’s AGM.

According to its 2015 holdings report, NBIM holds a 0.85 percent stake in Chevron worth $1.45 billion, and a 0.78 percent stake in Exxon worth $2.54 billion.

And the push will continue, according to the Washington Examiner:

“The recommendation by Exxon’s board to outright reject every single climate resolution from shareholders sends an incontestable signal to investors: it’s due time to divest from Exxon’s deception,” said May Boeve, executive director of the group 350.org, a leading proponent of the Keep it in the Ground campaign and movement for pension funds, schools and others to divest from investments in fossil fuels. Many scientists blame the greenhouse gases emitted from the burning of fossil fuels, such as crude oil and coal, for man-made climate change.

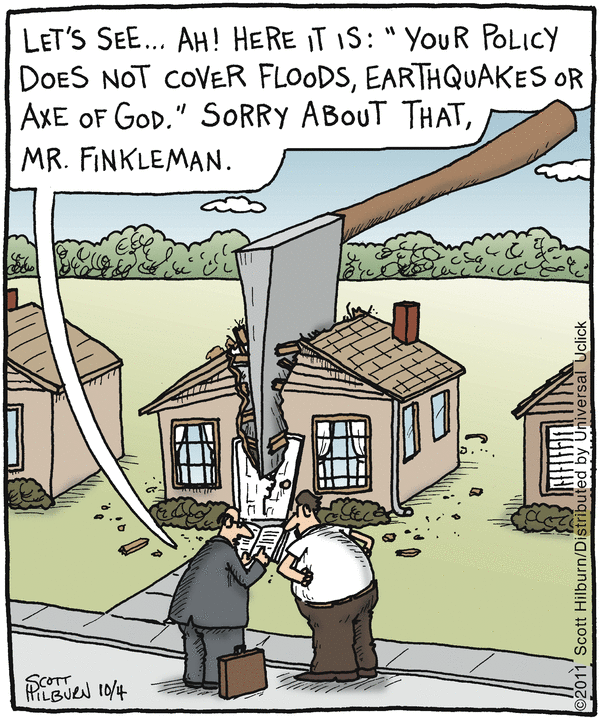

ExxonMobil has been targeted because it has not given an inch to demands from alarmists. But other energy companies are also under attack. Shell shareholders overwhelmingly voted against considering a proposition to convert the company into a renewables business.

Attempts to appease bullies seldom stop them from making more and bigger demands. Those companies now talking “Green” in order to be politically correct on climate change won’t be left alone to conduct their businesses. ExxonMobil knows this already, and has the subpoenas to prove it.