Blaming Hurricanes on Global Warming Denies the Facts

James Piereson writes at the New Criterion, "An overblown hypothesis." Excerpts in italics with my bolds.

We are well into hurricane season with a dangerous storm lurking off the coast of Florida and now poised to make a run up the east coast of the United States. As happens every year at this time, the appearance of hurricanes provokes speculation about the role of climate change in the formation of these destructive storms.

Climate change theorists assert that warming ocean temperatures are increasing the number and strength of hurricanes that form and make landfall in the United States. As David Leonhardt writes this week in the New York Times, “The frequency of severe hurricanes in the Atlantic Ocean has roughly doubled over the last two decades, and climate change appears to be the reason.” He cites some statistics to support this conclusion, though his review of the facts is far from thorough.

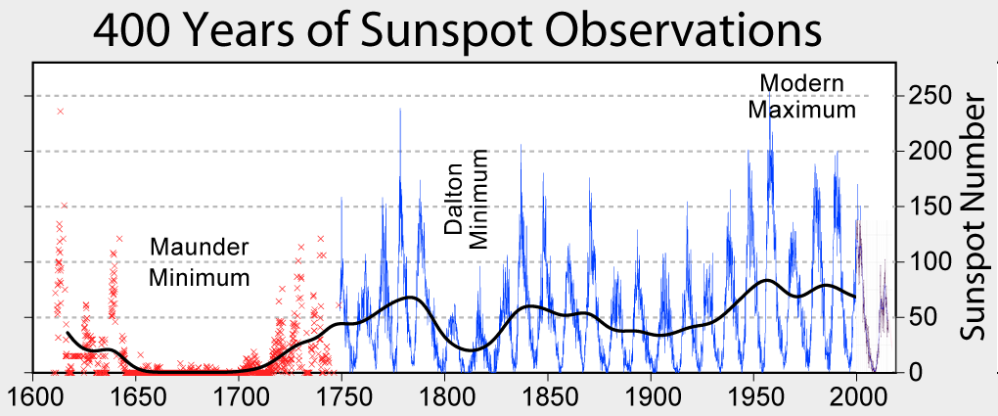

As he notes, the underlying science holds that hurricanes develop in warm ocean waters in late summer, so that over time rising ocean temperatures will generate rising numbers of hurricanes, and stronger ones as well. According to scientists, average ocean temperatures have increased by about one degree Fahrenheit over the past one hundred to a hundred and fifty years, a finding that provides a foundation for the “hurricane hypothesis.” Thus, we hear the refrain that global warming is causing more storms with higher wind speeds, and that these storms last longer, are more destructive, and make landfall more often than in the past.

It is a plausible hypothesis and, unlike many claims in this area, is capable of being tested against the facts. The evidence for it turns out to be quite thin—at least in relation to the certainty with which it is usually expressed.

Looking at the historical data, one does not find a startling increase in hurricane activity in recent decades, and only modest evidence to suggest that hurricanes in the Atlantic basin are increasing either in number or severity.

The National Hurricane Center, a division of the National Weather Service, has compiled reliable information on hurricanes going back to the middle of the nineteenth century, though the information the NHC collects has grown much more reliable in recent decades with the development of satellite imagery and ever more sensitive instruments for measuring the strength and wind speeds of hurricanes. There is no shortage of information to test the claims about increasing hurricane activity.

1. Are hurricanes in the Atlantic Ocean increasing in frequency with the passage of time?

The modern era of hurricane tracking and measurement got underway about 1950. From 1950 through 2018, the Hurricane Research Division (HRD) tells us that on average 6.3 named hurricanes formed per year, with a high of fifteen storms in 2005 (the year of Katrina) and a low of two in 1982 and 2013. A named hurricane is one strong enough to be classified in Categories 1 through 5 on the Saffir-Simpson Hurricane Scale. Again, these are storms that formed in the Atlantic, not all of which made landfall. Broken down decade by decade, the averages look like this:

1950-59: 6.9 per year

1960-69: 6.1 per year

1970-79: 5.0 per year

1980-89: 5.2 per year

1990-99: 6.4 per year

2000-09: 7.4 per year

2010-18: 7.0 per year

Conclusion: There has been a modest increase in the number of hurricanes formed per year since 2000, but these rates are not significantly higher than the long-term average and are very close to the rates experienced in the 1950s.
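As a rough consistency check, the decade figures above can be combined into a year-weighted average and compared with the 6.3 per year the HRD reports. The short Python sketch below is illustrative only: it assumes each decade's rate is exactly the rounded figure listed (so results are approximate), that 2010-18 spans nine years, and the names `PERIODS` and `weighted_rate` are hypothetical.

```python
# Atlantic hurricanes formed per year, by period, as listed above (1950-2018).
# Assumption: the rounded decade averages are treated as exact, so results are approximate.
PERIODS = [
    ("1950-59", 10, 6.9),
    ("1960-69", 10, 6.1),
    ("1970-79", 10, 5.0),
    ("1980-89", 10, 5.2),
    ("1990-99", 10, 6.4),
    ("2000-09", 10, 7.4),
    ("2010-18", 9, 7.0),  # only nine years in this period
]


def weighted_rate(periods):
    """Average storms per year, weighting each period by its number of years."""
    total_storms = sum(years * rate for _, years, rate in periods)
    total_years = sum(years for _, years, _ in periods)
    return total_storms / total_years


long_term = weighted_rate(PERIODS)        # 1950-2018
since_2000 = weighted_rate(PERIODS[-2:])  # 2000-2018
fifties = weighted_rate(PERIODS[:1])      # 1950-59

print(f"1950-2018: {long_term:.1f} per year")   # 1950-2018: 6.3 per year
print(f"2000-2018: {since_2000:.1f} per year")  # 2000-2018: 7.2 per year
print(f"1950-1959: {fifties:.1f} per year")     # 1950-1959: 6.9 per year
```

The weighted long-term figure lands on the reported 6.3 per year, and the post-2000 rate of roughly 7.2 sits about one storm per year above that mean and only a few tenths above the 1950s.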

2. Are more hurricanes making landfall in the United States with the passage of time?

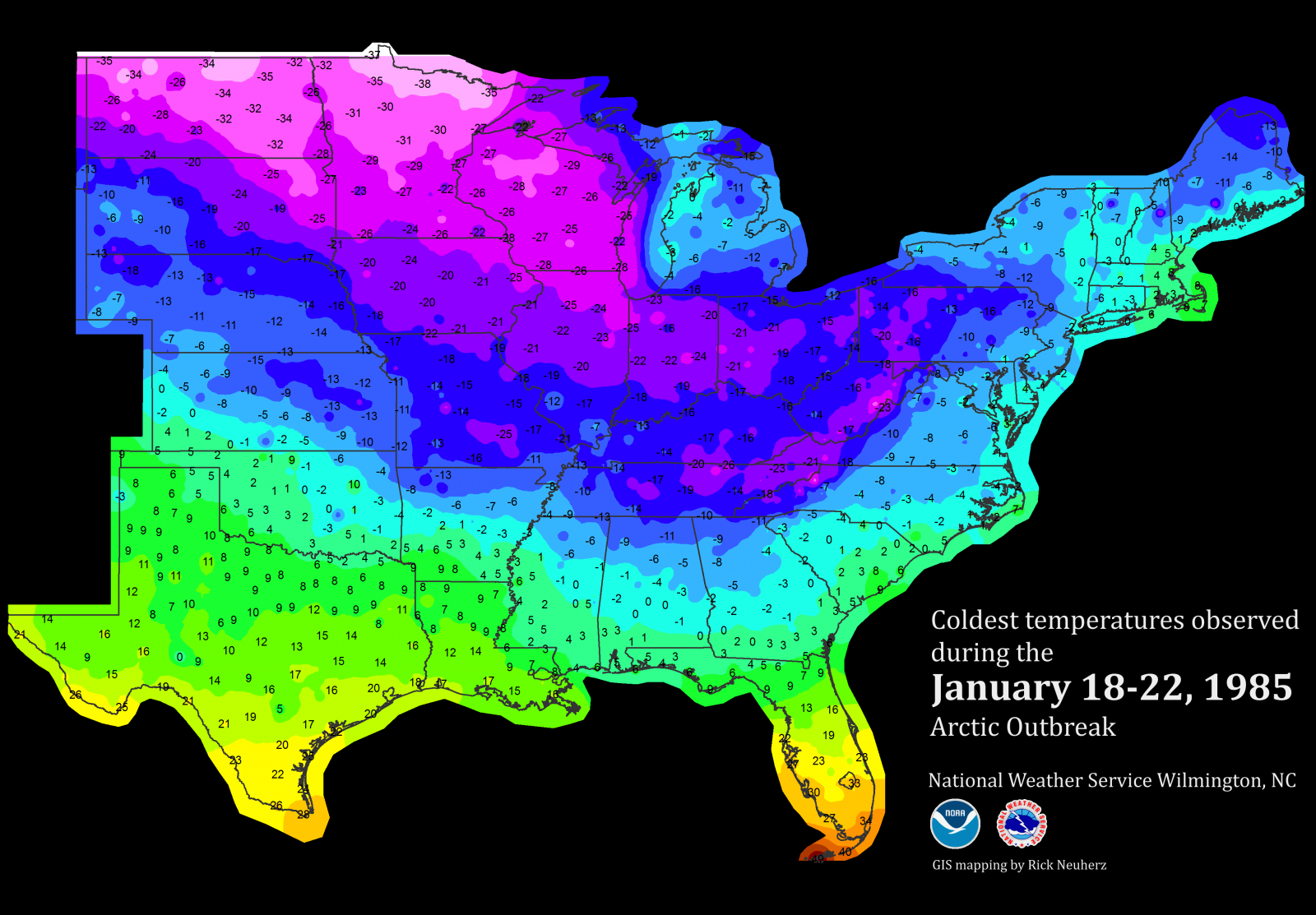

The HRD maintains an accurate list of hurricanes making landfall in the United States from 1851 through 2018. Over this period of nearly one hundred seventy years, between one and two hurricanes made landfall in the United States per year on average. The busiest years for hurricanes since 1950 were 1985, 2004, and 2005, with six named storms making landfall in each of these years. The busiest decade in the record was the 1940s, when twenty-six hurricanes made landfall; more recently, the busiest decade was 2000 to 2009, when nineteen hurricanes made landfall.

Average U.S. hurricane landfalls per decade, 1950-2018: 15

Average U.S. hurricane landfalls per decade, 1990-2018: 15

Average U.S. hurricane landfalls per decade, 1950-1989: 15

Conclusion: There has been no long-term increase in the number of named hurricanes making landfall in the United States.

3. Are Atlantic hurricanes growing more powerful with the passage of time?

Over the period since 1851, just four Category 5 hurricanes (the most powerful of all storms) have made landfall in the United States: the Labor Day hurricane that hit the Florida Keys in 1935; Hurricane Camille, which hit the Gulf Coast in 1969; Hurricane Andrew, which hit south Florida in 1992; and Hurricane Michael, which hit Florida and Georgia in 2018. These events appear unrelated to changes in ocean temperatures.

The two most destructive storms to hit the United States occurred in 1900 (Galveston) and 1926 (Miami), long before the era of rising ocean temperatures.

From 1950 to 2018, an average of 2.7 major hurricanes formed per year in the Atlantic basin, with highs of eight major hurricanes in 1950 and seven in 2005. In several years of the period, most recently 1994 and 2013, no major hurricanes formed in the Atlantic. (The HRD defines a major hurricane as a storm classified as Category 3, 4, or 5 on the Saffir-Simpson Scale.)

A total of twelve Category 4 and 5 hurricanes have made landfall in the United States since 1950, following no particular historical pattern or trend.

Conclusion: There has been a slight increase in the frequency of powerful hurricanes since 1990, but mostly in relation to the numbers of such storms from 1970 to 1989, a quiet period for hurricane formation. The frequency of powerful hurricanes from 2000 to 2018 (3.3 per year) mirrors the rates experienced from 1950 to 1969 (also 3.3 per year). Moreover, there is no pattern or trend in the frequency of Category 4 and 5 hurricanes making landfall over the 1950-2018 period.

How, then, in view of these data, should we assess the claims that Atlantic hurricanes are increasing in numbers and strength in recent decades in response to rising atmospheric and ocean temperatures, and are also making landfall at increasing rates?

There has been a modest increase in the frequency of Atlantic hurricanes in recent decades along with a slight increase in their strength, but no increase in the number of hurricanes making landfall in the United States and no increase since 1950 in the number of the most powerful hurricanes (Category 4 and 5 storms) to hit the U.S. mainland. Moreover, any trend that we find in the frequency and strength of hurricanes in the past few decades is mostly washed out when we compare those rates to the ones experienced in the 1950s and 1960s. This suggests that hurricane frequency and strength, though perhaps increasing of late, are only loosely related to recent measured increases in Atlantic Ocean temperatures.