A placard outside the UN headquarters in New York City, November 2011

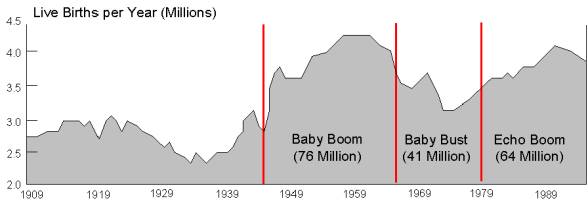

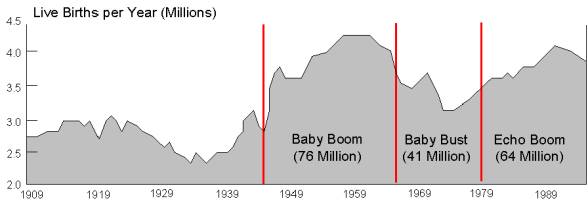

Some years ago I read the book Boom, Bust and Echo. It described how planners for public institutions like schools and hospitals often fail to anticipate demographic shifts. The authors described how in North America, the baby Boom after WWII overcrowded schools, and governments struggled to build and staff more facilities. Just as they were catching up came the sexual revolution and the drop in fertility rates, resulting in a population Bust in children entering the education system. Now the problem was overcapacity: schools had to be closed and teachers retired, which is not easy when communities are sentimentally attached to them. Then, as the downsizing took hold, came the Echo. Baby boomers began having children, and even at a lower birth rate, that meant a larger cohort of students arriving at a diminished system.

The story is similar to what is happening today with world population. Zachary Karabell writes in Foreign Affairs The Population Bust: Demographic Decline and the End of Capitalism as We Know It. Excerpts in italics with my bolds.

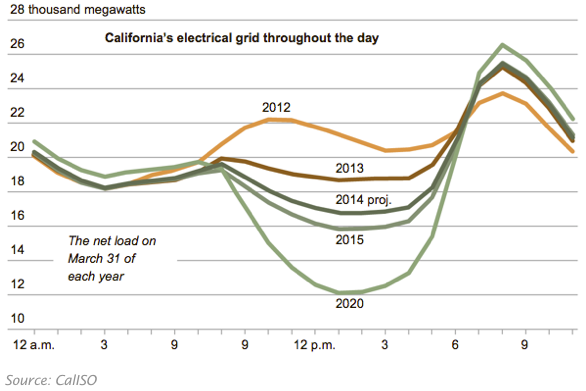

For most of human history, the world’s population grew so slowly that for most people alive, it would have felt static. Between the year 1 and 1700, the human population went from about 200 million to about 600 million; by 1800, it had barely hit one billion. Then, the population exploded, first in the United Kingdom and the United States, next in much of the rest of Europe, and eventually in Asia. By the late 1920s, it had hit two billion. It reached three billion around 1960 and then four billion around 1975. It has nearly doubled since then. There are now some 7.6 billion people living on the planet.

Just as much of the world has come to see rapid population growth as normal and expected, the trends are shifting again, this time into reverse. Most parts of the world are witnessing sharp and sudden contractions in either birthrates or absolute population. The only thing preventing the population in many countries from shrinking more quickly is that death rates are also falling, because people everywhere are living longer. These oscillations are not easy for any society to manage. “Rapid population acceleration and deceleration send shockwaves around the world wherever they occur and have shaped history in ways that are rarely appreciated,” the demographer Paul Morland writes in The Human Tide, his new history of demographics. Morland does not quite believe that “demography is destiny,” as the old adage mistakenly attributed to the French philosopher Auguste Comte would have it. Nor do Darrell Bricker and John Ibbitson, the authors of Empty Planet, a new book on the rapidly shifting demographics of the twenty-first century. But demographics are clearly part of destiny. If their role first in the rise of the West and now in the rise of the rest has been underappreciated, the potential consequences of plateauing and then shrinking populations in the decades ahead are almost wholly ignored.

The mismatch between expectations of a rapidly growing global population (and all the attendant effects on climate, capitalism, and geopolitics) and the reality of both slowing growth rates and absolute contraction is so great that it will pose a considerable threat in the decades ahead. Governments worldwide have evolved to meet the challenge of managing more people, not fewer and not older. Capitalism as a system is particularly vulnerable to a world of less population expansion; a significant portion of the economic growth that has driven capitalism over the past several centuries may have been simply a derivative of more people and younger people consuming more stuff. If the world ahead has fewer people, will there be any real economic growth? We are not only unprepared to answer that question; we are not even starting to ask it.

BOMB OR BUST?

At the heart of The Human Tide and Empty Planet, as well as demography in general, is the odd yet compelling work of the eighteenth-century British scholar Thomas Malthus. Malthus’ 1798 Essay on the Principle of Population argued that growing numbers of people were a looming threat to social and political stability. He was convinced that humans were destined to produce more people than the world could feed, dooming most of society to suffer from food scarcity while the very rich made sure their needs were met. In Malthus’ dire view, that would lead to starvation, privation, and war, which would eventually lead to population contraction, and then the depressing cycle would begin again.

Yet just as Malthus reached his conclusions, the world changed. Increased crop yields, improvements in sanitation, and accelerated urbanization led not to an endless cycle of impoverishment and contraction but to an explosion of global population in the nineteenth century. Morland provides a rigorous and detailed account of how, in the nineteenth century, global population reached its breakout from millennia of prior human history, during which the population had been stagnant, contracting, or inching forward. He starts with the observation that the population begins to grow rapidly when infant mortality declines. Eventually, fertility falls in response to lower infant mortality—but there is a considerable lag, which explains why societies in the modern world can experience such sharp and extreme surges in population. In other words, while infant mortality is high, women tend to give birth to many children, expecting at least some of them to die before reaching maturity. When infant mortality begins to drop, it takes several generations before fertility does, too. So a woman who gives birth to six children suddenly has six children who survive to adulthood instead of, say, three. Her daughters might also have six children each before the next generation of women adjusts, deciding to have smaller families.
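The lag described above can be put in rough numbers. The following is a minimal sketch, not a demographic model: it assumes half of each generation are women, that every woman has the stated number of surviving children, and that family size eventually settles at two (a hypothetical figure chosen only so the population stops growing). The function name `project_generations` is made up for illustration.

```python
def project_generations(start, surviving_children_per_woman):
    """Return a population index for each successive generation.

    Each generation is the previous one scaled by (surviving children
    per woman) / 2, assuming half of every generation are women.
    """
    sizes = [start]
    for children in surviving_children_per_woman:
        sizes.append(sizes[-1] * children / 2)
    return sizes


# Infant mortality drops, but two generations of women still have six
# children each before family size adjusts to two (assumed figure).
lagged = project_generations(100, [6, 6, 2])

# Hypothetical comparison: family size adjusts immediately, with no lag.
no_lag = project_generations(100, [2, 2, 2])

print(lagged)
print(no_lag)
```

Under these assumptions, a two-generation lag alone multiplies the population ninefold before it levels off, which is the kind of surge the passage describes.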

The burgeoning of global population in the past two centuries followed almost precisely the patterns of industrialization, modernization, and, crucially, urbanization. It started in the United Kingdom at the end of the eighteenth century (hence the concerns of Malthus), before spreading to the United States and then France and Germany. The trend next hit Japan, India, and China and made its way to Latin America. It finally arrived in sub-Saharan Africa, which has seen its population surge thanks to improvements in medicine and sanitation but has not yet enjoyed the full fruits of industrialization and a rapidly growing middle class.

With the population explosion came a new wave of Malthusian fears, epitomized by the 1968 book The Population Bomb, by Paul Ehrlich, a biologist at Stanford University. Ehrlich argued that plummeting death rates had created an untenable situation of too many people who could not be fed or housed. “The battle to feed all of humanity is over,” he wrote. “In the 1970’s the world will undergo famines—hundreds of millions of people are going to starve to death in spite of any crash programs embarked on now.”

Ehrlich’s prophecy, of course, proved wrong, for reasons that Bricker and Ibbitson elegantly chart in Empty Planet. The green revolution, a series of innovations in agriculture that began in the early twentieth century, accelerated such that crop yields expanded to meet humankind’s needs. Moreover, governments around the world managed to remediate the worst effects of pollution and environmental degradation, at least in terms of daily living standards in multiple megacities, such as Beijing, Cairo, Mexico City, and New Delhi. These cities face acute challenges related to depleted water tables and industrial pollution, but there has been no crisis akin to what was anticipated.

Yet visions of dystopic population bombs remain deeply entrenched, including at the center of global population calculations: in the forecasts routinely issued by the United Nations. Today, the UN predicts that global population will reach nearly ten billion by 2050. Judging from the evidence presented in Morland’s and Bricker and Ibbitson’s books, it seems likely that this estimate is too high, perhaps substantially. It’s not that anyone is purposely inflating the numbers. Governmental and international statistical agencies do not turn on a dime; they use formulas and assumptions that took years to formalize and will take years to alter. Until very recently, the population assumptions built into most models accurately reflected what was happening. But the sudden ebb of both birthrates and absolute population growth has happened too quickly for the models to adjust in real time. As Bricker and Ibbitson explain,

“The UN is employing a faulty model based on assumptions that worked in the past but that may not apply in the future.”

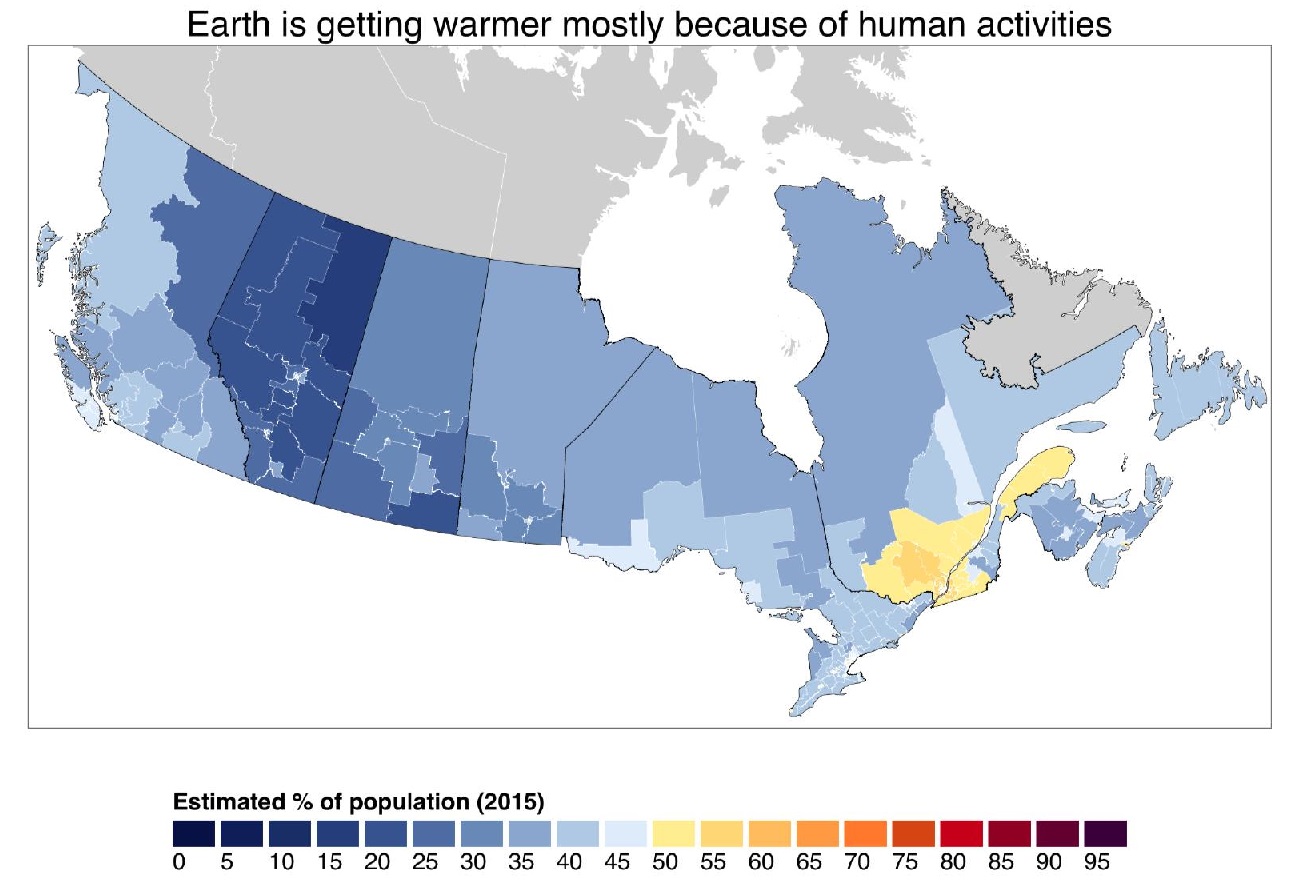

Population expectations aren’t merely of academic interest; they are a key element in how most societies and analysts think about the future of war and conflict. More acutely, they drive fears about climate change and environmental stability—especially as an emerging middle class numbering in the billions demands electricity, food, and all the other accoutrements of modern life and therefore produces more emissions and places greater strain on farms with nutrient-depleted soil and evaporating aquifers. Combined with warming-induced droughts, storms, and shifting weather patterns, these trends would appear to line up for some truly bad times ahead.

Except, argue Bricker and Ibbitson, those numbers and all the doomsday scenarios associated with them are likely wrong. As they write,

“We do not face the challenge of a population bomb but a population bust—a relentless, generation-after-generation culling of the human herd.”

Already, the signs of the coming bust are clear, at least according to the data that Bricker and Ibbitson marshal. Almost every country in Europe now has a fertility rate below the 2.1 births per woman that is needed to maintain a static population. The UN notes that in some European countries, the birthrate has increased in the past decade. But that has merely pushed the overall European birthrate up from 1.5 to 1.6, which means that the population of Europe will still grow older in the coming decades and contract as new births fail to compensate for deaths. That trend is well under way in Japan, whose population has already crested, and in Russia, where the same trends, plus high mortality rates for men, have led to a decline in the population.
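As a rough back-of-the-envelope check on those figures, the ratio of a fertility rate to the 2.1 replacement level approximates how large each generation is relative to its parents. This is a sketch under strong assumptions: it ignores migration, changes in mortality, and the age structure of the existing population, all of which slow or speed the actual decline. The function names are illustrative only.

```python
REPLACEMENT_TFR = 2.1  # births per woman needed for a static population


def generation_ratio(tfr, replacement=REPLACEMENT_TFR):
    """Approximate size of one generation relative to its parents' generation."""
    return tfr / replacement


def relative_size_after(tfr, generations):
    """Approximate size of a birth cohort after n generations at a constant rate."""
    return generation_ratio(tfr) ** generations


# Europe's overall rate of 1.6, as cited in the text.
print(round(generation_ratio(1.6), 2))
print(round(relative_size_after(1.6, 3), 2))
```

At 1.6 births per woman, each generation comes out at roughly three-quarters the size of the one before it, so the modest rise from 1.5 to 1.6 slows the contraction without reversing it.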

What is striking is that the population bust is going global almost as quickly as the population boom did in the twentieth century. Fertility rates in China and India, which together account for nearly 40 percent of the world’s people, are now at or below replacement levels. So, too, are fertility rates in other populous countries, such as Brazil, Malaysia, Mexico, and Thailand. Sub-Saharan Africa remains an outlier in terms of demographics, as do some countries in the Middle East and South Asia, such as Pakistan, but in those places, as well, it is only a matter of time before they catch up, given that more women are becoming educated, more children are surviving their early years, and more people are moving to cities.

Both books note that the demographic collapse could be a bright spot for climate change. Given that carbon emissions are a direct result of more people needing and demanding more stuff—from food and water to cars and entertainment—then it would follow that fewer people would need and demand less. What’s more, larger proportions of the planet will be aging, and the experiences of Japan and the United States are showing that people consume less as they age. A smaller, older population spells some relief from the immense environmental strain of so many people living on one finite globe.

That is the plus side of the demographic deflation. Whether the concomitant greening of the world will happen quickly enough to offset the worst-case climate scenarios is an open question—although current trends suggest that if humanity can get through the next 20 to 30 years without irreversibly damaging the ecosystem, the second half of the twenty-first century might be considerably brighter than most now assume.

The downside is that a sudden population contraction will place substantial strain on the global economic system.

Capitalism is, essentially, a system that maximizes more—more output, more goods, and more services. That makes sense, given that it evolved coincidentally with a population surge. The success of capitalism in providing more to more people is undeniable, as are its evident defects in providing every individual with enough. If global population stops expanding and then contracts, capitalism—a system implicitly predicated on ever-burgeoning numbers of people—will likely not be able to thrive in its current form. An aging population will consume more of certain goods, such as health care, but on the whole aging and then decreasing populations will consume less. So much of consumption occurs early in life, as people have children and buy homes, cars, and white goods. That is true not just in the more affluent parts of the world but also in any country that is seeing a middle-class surge.

But what happens when these trends halt or reverse? Think about the future cost of capital and assumptions of inflation. No capitalist economic system operates on the presumption that there will be zero or negative growth. No one deploys investment capital or loans expecting less tomorrow than today. But in a world of graying and shrinking populations, that is the most likely scenario, as Japan’s aging, graying, and shrinking absolute population now demonstrates. A world of zero to negative population growth is likely to be a world of zero to negative economic growth, because fewer and older people consume less. There is nothing inherently problematic about that, except for the fact that it will completely upend existing financial and economic systems. The future world may be one of enough food and abundant material goods relative to the population; it may also be one in which capitalism at best frays and at worst breaks down completely.

The global financial system is already exceedingly fragile, as evidenced by the 2008 financial crisis. A world with negative economic growth, industrial capacity in excess of what is needed, and trillions of dollars expecting returns when none is forthcoming could spell a series of financial crises. It could even spell the death of capitalism as we know it. As growth grinds to a halt, people may well start demanding a new and different economic system. Add in the effects of automation and artificial intelligence, which are already making millions of jobs redundant, and the result is likely a future in which capitalism is increasingly passé.

If population contraction were acknowledged as the most likely future, one could imagine policies that might preserve and even invigorate the basic contours of capitalism by setting much lower expectations of future returns and focusing society on reducing costs (which technology is already doing) rather than maximizing output.

But those policies would likely be met in the short term by furious opposition from business interests, policymakers, and governments, all of whom would claim that such attitudes are defeatist and could spell an end not just to growth but to prosperity and high standards of living, too. In the absence of such policies, the danger of the coming shift will be compounded by a complete failure to plan for it.

Different countries will reach the breaking point at different times. Right now, the demographic deflation is happening in rich societies that are able to bear the costs of slower or negative growth using the accumulated store of wealth that has been built up over generations. Some societies, such as the United States and Canada, are able to temporarily offset declining population with immigration, although soon, there won’t be enough immigrants left. As for the billions of people in the developing world, the hope is that they become rich before they become old. The alternative is not likely to be pretty: without sufficient per capita affluence, it will be extremely difficult for developing countries to support aging populations.

So the demographic future could end up being a glass half full, by ameliorating the worst effects of climate change and resource depletion, or a glass half empty, by ending capitalism as we know it. Either way, the reversal of population trends is a paradigm shift of the first order and one that is almost completely unrecognized. We are vaguely prepared for a world of more people; we are utterly unprepared for a world of fewer. That is our future, and we are heading there fast.

See also Control Population, Control the Climate. Not.

There is also research to support this hypothesis. When a documentary about a fringe topic, like UFOs, includes the claim that, “This is true,” that actually triggers more skepticism. It encourages the audience to think, “Wait a minute, is this true?” Meanwhile, including a scientist who says, “This is not true,” may actually increase belief, because the audience is impressed that the subject is being taken seriously by a scientist, regardless of their ultimate conclusion. But the extent of such backfire effects remains controversial in psychological research; it appears to be very context-dependent.
