Peter Stallinga provides a thorough analysis explaining why models based purely on radiative heat exchanges fail without incorporating other thermodynamic processes. This post is a synopsis of the structure of his position without the extensive mathematical expressions of the relationships discussed. The full text of his paper can be accessed through the title link below.

Comprehensive Analytical Study of the Greenhouse Effect of the Atmosphere

By Peter Stallinga. Atmospheric and Climate Sciences, 2020 H/T NoTricksZone. Excerpts in italics with my bolds

Introduction

Climate change is an important societal issue. Large effort in society is spent on addressing it. For adequate measures, it is important that the phenomenon of climate change is well understood, especially the effect of adding carbon dioxide to the atmosphere. In this work, a theoretical fully analytical study is presented of the so-called greenhouse effect of carbon dioxide. The effect of this gas in the atmosphere itself was already determined as being of little importance based on empirical analysis. In the current work, the effect is studied both phenomenologically and analytically.

In a new approach, the atmosphere is solved by taking both radiative as well as thermodynamic processes into account. The model fully fits the empirical data and an analytical equation is given for the atmospheric behavior. Upper limits are found for the greenhouse effect ranging from zero to a couple of mK per ppm CO2. (1 mK is 1/1000 of a kelvin.) It is shown that it cannot explain the observed correlation of carbon dioxide and surface temperature. This correlation, however, is readily explained by Henry’s Law (outgassing of oceans), with other phenomena insignificant.

Finally, while the greenhouse effect can thus, in a rudimentary way, explain the behavior of the atmosphere of Earth, it fails to describe other atmospheres such as that of Mars. Moreover, looking at three cities in Spain, it is found that radiation balances alone cannot explain the temperature of these cities. In addition, three data sets with different time scales (60 years, 600 thousand years, and 650 million years) show markedly different behavior, something that is inexplicable in the framework of the greenhouse theory.

The Greenhouse Effect

The greenhouse effect is phenomenologically introduced and compared to the alternative explanation for the data, namely Henry’s Law. The observed correlations between temperature and CO2 are presented; these are the data we are going to attempt to explain.

Henry’s Law

The correlation between temperature and [CO2] is readily explained by another phenomenon, called Henry’s Law: the capacity of liquids to hold gases in solution depends on temperature. When oceans heat up, the capacity decreases and the oceans thus release CO2 (and other gases) into the atmosphere. When we quantitatively analyze this phenomenon, we see that it perfectly fits the observations, without the need of any feedback [1]. We thus now have an alternative hypothesis for the explanation of the observations presented by Al Gore.

The greenhouse effect can be as good as rejected and Henry’s Law stays firmly standing. We concluded that the effect of anthropogenic CO2 on the climate is negligible and the effect of the ocean temperature on atmospheric [CO2] is exactly, both sign and magnitude, equal to that as expected on basis of Henry’s Law [1].

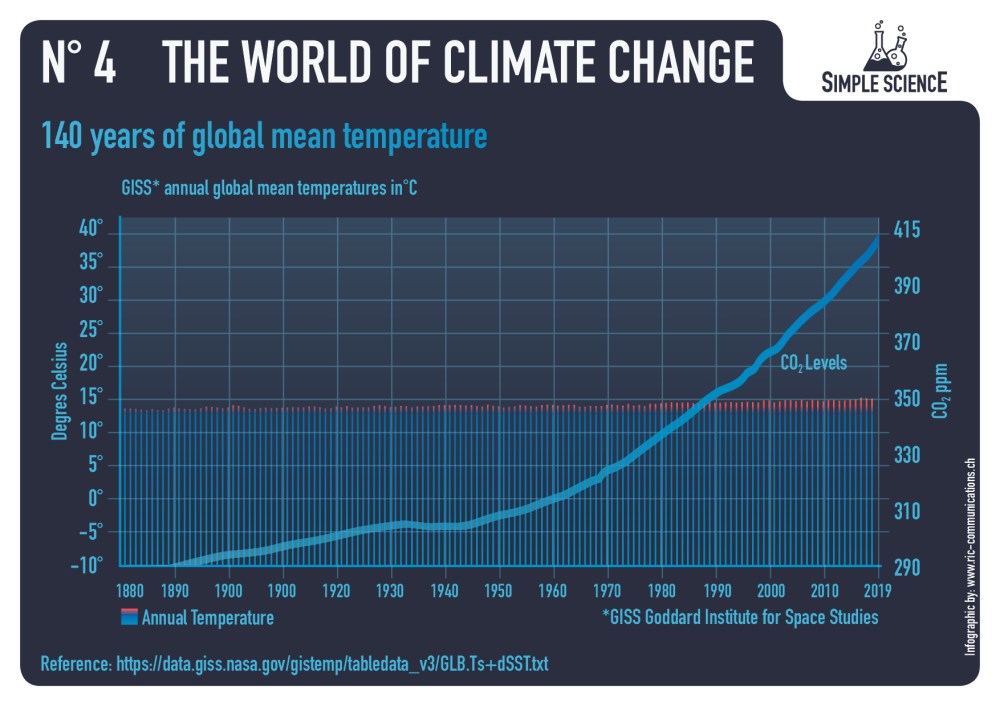

Contemporary Correlation

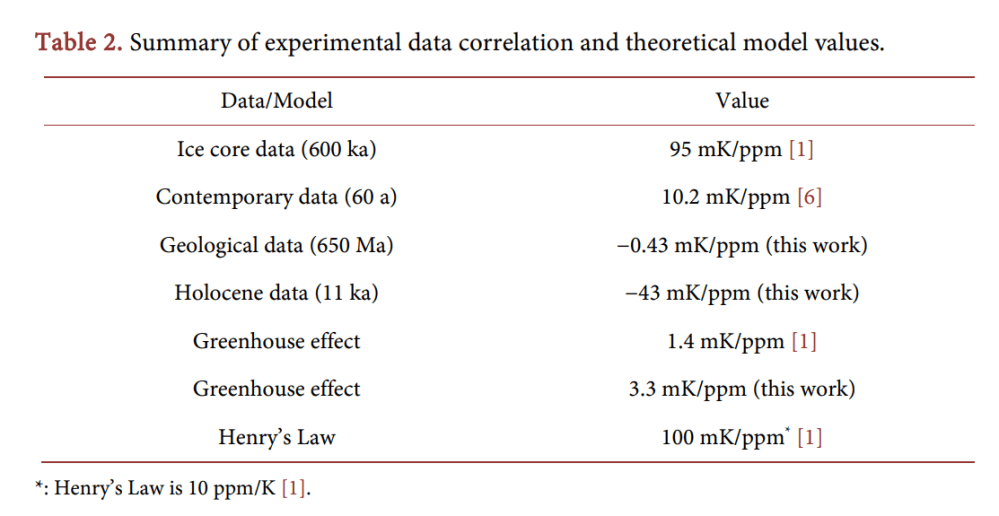

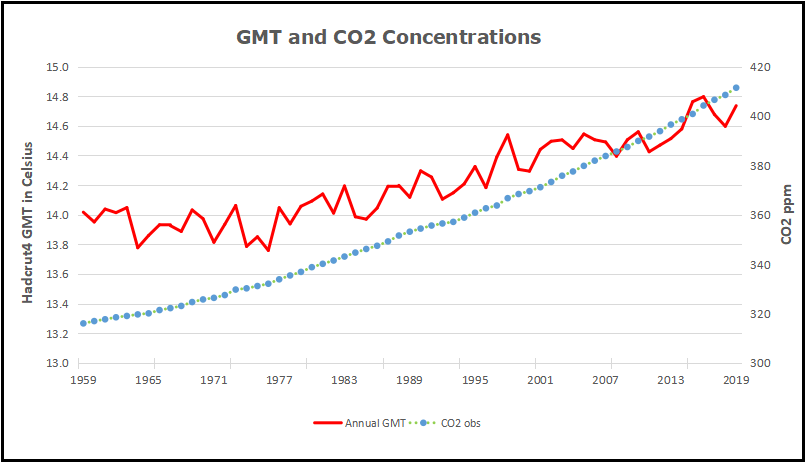

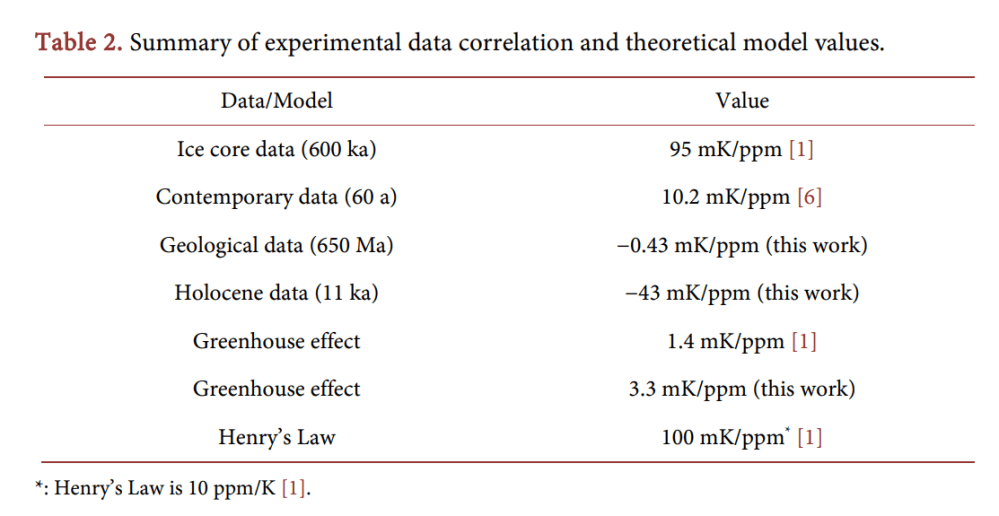

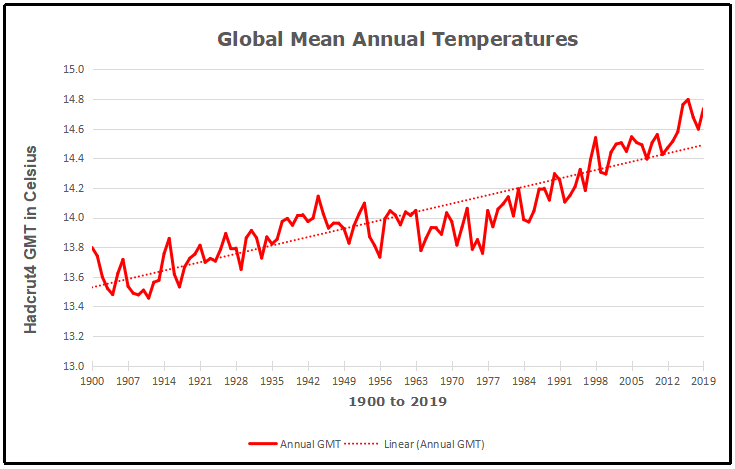

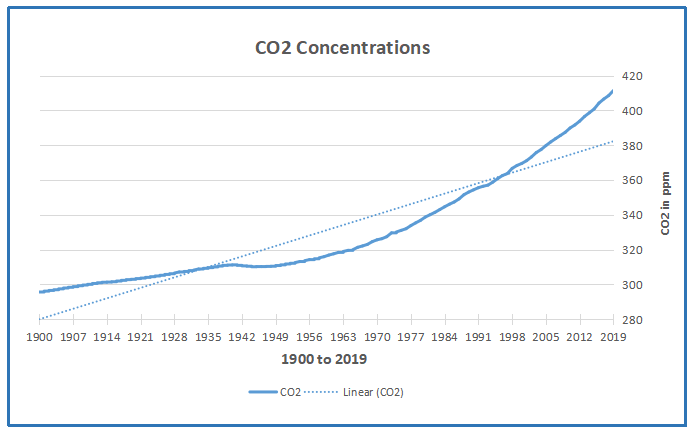

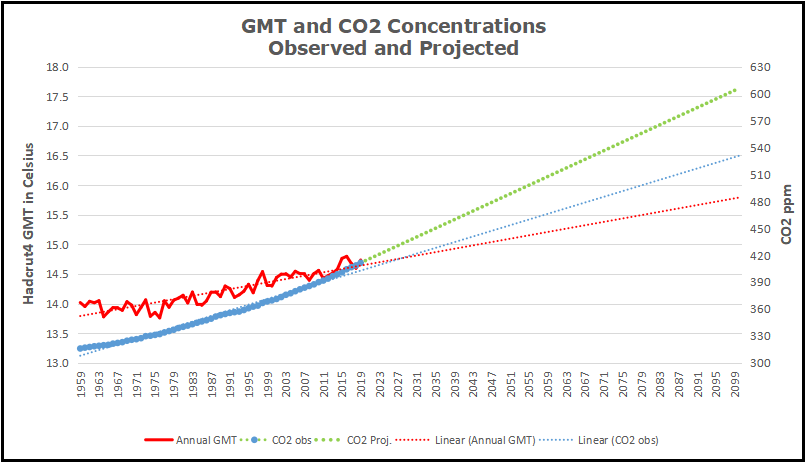

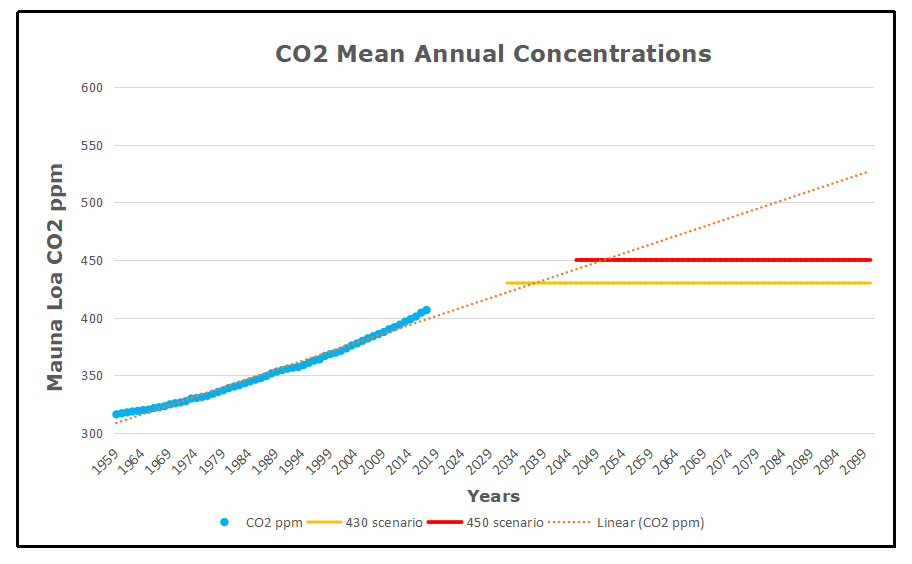

Correlations are best shown in correlation plots; instead of both shown as a time series, they are better shown as one vs. the other; if they are correlated, a straight line should result. Figure 2(b) shows a correlation plot of the same data as used for Figure 2(a) (but no averaging). We see that there is an apparent correlation between the two datasets and we can fit a line to them to find the coefficient. The value is 10.2 mK/ppm. (See Table 2 for a summary of all data sets and models described here).

This experimental value of 10.2 mK/ppm is highly interesting. It is neither close to the value expected for the greenhouse effect (1.4 mK/ppm), nor close to the value of Henry’s Law (100 mK/ppm). Even stranger, it is also not close to the value of the 600-ka ice core data (95 mK/ppm).

A missing response might be explained in a relaxation model. After all, induced changes take time to materialize; the system needs time to settle to the new equilibrium value. However, too big responses compared to the model are not possible. A more likely cause for the divergence between model and data is that the correlation is merely coincidental [2]. In a Henry’s-Law (HL) analysis, the CO2 has no effect on the temperature, but a concurrent temperature rise is merely a coincidence. That is, because the [CO2] rise in contemporary data is possibly of anthropogenic origin and not (much) caused by the temperature rise. In the HL framework, the ca. 0.8 degree temperature rise has contributed a meager 8 ppm to the CO2 in the atmosphere. The rest might be coming from anthropogenic sources, or from nature itself.

If, on the other hand, we want to attribute the temperature rise to CO2, we must build in a delay, since most of the effect of the alleged greenhouse effect has apparently not yet occurred. Using the value of 95 mK/ppm (Table 2), the 80 ppm of Figure 2(b) should have produced (will produce?) a staggering 7.6 degree temperature rise. A meager 0.8 degrees is observed after 60 years (13 mK/a, Figure 2). From these data we can deduce a virtual relaxation time τ of about half a millennium. We can thus expect a temperature rise of some tenths of a degree per decade in the coming centuries.
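The half-millennium figure can be reproduced with a minimal sketch. It assumes a first-order (single-exponential) approach to equilibrium after a step change in [CO2]; that functional form and the function name `relaxation_time` are illustrative assumptions, not necessarily the method used in the paper.

```python
import math

def relaxation_time(observed_rise, equilibrium_rise, elapsed_years):
    """Relaxation time tau (years) for first-order relaxation after a step.

    Assumes T(t) = T_eq * (1 - exp(-t / tau)); this form is an
    illustrative assumption, not taken from the paper.
    """
    fraction = observed_rise / equilibrium_rise
    return -elapsed_years / math.log(1.0 - fraction)

# Values quoted in the text
sensitivity_k_per_ppm = 0.095   # 95 mK/ppm (ice-core value, Table 2)
co2_rise_ppm = 80.0             # rise shown in Figure 2(b)
observed_rise_k = 0.8           # warming observed over 60 years

equilibrium_rise_k = sensitivity_k_per_ppm * co2_rise_ppm
tau_years = relaxation_time(observed_rise_k, equilibrium_rise_k, 60.0)

print(f"equilibrium rise: {equilibrium_rise_k:.1f} K")
print(f"relaxation time:  {tau_years:.0f} years")
```

Under this assumption the relaxation time comes out between 500 and 600 years, consistent with the "about half a millennium" quoted above.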

This causes a problem. Having set out to explain things in known physical laws, we have no idea what physical process might be the origin of this relaxation. The radiation balance of the atmosphere has a relaxation time of about a month and a half, as evidenced by the 30-day delay between shortest/longest day and coldest/warmest day [1]. No millennium-scale relaxation mechanisms are easily identifiable in the atmosphere, where the GHE resides. We can even exclude that the oceans act as thermal sinks for the heat generated in the atmosphere, because then the effect in the atmosphere would initially be even larger than theoretically predicted, in an effect called overshoot. We thus conclude that, upon scrutiny, the (alleged) greenhouse effect also has to be rejected as a hypothesis to explain contemporary data.

Can the data be explained by Henry’s Law, instead? The observed correlation is 10 mK/ppm, or conversely 100 ppm/K. That is a factor 10 too big for Henry’s Law, and relaxation processes can only make the effect smaller. We thus exclude Henry’s Law as an explanation for the contemporary steady [CO2] rise in the atmosphere; it is not caused by the steady rise in temperature.

A Radiative Greenhouse Model

A “classic” greenhouse model is presented where energy transfer is uniquely by radiation. First with the atmosphere as single body, then with the atmosphere as an infinite set of identical layers, and finally with a multi-layer model in which the atmosphere is in thermodynamic equilibrium, so that layers get thinner and colder upwards.

Absorption in the Atmosphere

An intrinsic assumption we make here is that all incoming energy comes from radiation from the Sun. Heat coming from the Earth itself, from below the crust, is too small (about 50 mW/m2; estimation from the authors) to be significant. Moreover, all heat must be dissipated to the universe by radiation only. Things such as evaporation of hot molecules from the top of the atmosphere are too insignificant. This is a rather trivial assumption and will therefore not be further justified. Perhaps more questionable is the assumption that the atmosphere is a well mixed chamber, meaning that all gases occur in the same ratios everywhere. The theoretical greenhouse effect is governed by optical absorption and emission processes in the atmosphere. As such, the Beer-Lambert rule of absorption plays an important role.

The greenhouse effect is often erroneously presented as being caused by the fact that $\sigma_x$ (and thus also α, τ and absorbance) depends on the wavelength: that visible sunlight can reach the surface while infrared terrestrial light cannot easily escape through the atmosphere. This schematic presentation is wrong, however, as we will discuss. It does not matter how and where the solar radiation is absorbed or terrestrial IR radiation is absorbed and reemitted in the atmosphere, whether sunlight reaches the surface or not, etc. The only thing that matters is the amount of radiation received by Earth (that is, the amount that is not reflected directly back into space) and the amount of IR radiation emitted.

Since the absorption coefficient depends on capture cross-sections as well as concentrations, pumping CO2 in the atmosphere might increase heat absorption in the atmosphere and might thus heat it up. However, at first sight, the effect is probably minimal, because nearly all infrared light is already absorbed; at the top of the atmosphere, according to the Beer-Lambert Equation (Equation (2)): $J(h) \approx 0$. We thus do not expect much effect from adding CO2 to the atmosphere as long as there are other channels open for emission. Imagine: if 99% of the light possibly absorbed is already absorbed (say, 350 W/m2), doubling CO2 in the atmosphere will not double the absorption (to 700 W/m2), but just add something close to 1% to it (3.5 W/m2), as Equation (2) tells us. We call this the “forcing” of the atmosphere.
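The 1% figure follows directly from the exponential form of the Beer-Lambert law. The sketch below assumes a single absorbing band with uniform optical depth, so that doubling the absorber doubles the optical depth; the variable and function names are illustrative, not from the paper.

```python
import math

def absorbed_fraction(optical_depth):
    """Beer-Lambert: fraction of light absorbed along a path of given optical depth."""
    return 1.0 - math.exp(-optical_depth)

absorbed_now = 0.99                          # 99% already absorbed (from the text)
tau_now = -math.log(1.0 - absorbed_now)      # optical depth that gives 99% absorption
absorbed_doubled = absorbed_fraction(2.0 * tau_now)  # doubling the absorber doubles tau

power_absorbed_now = 350.0                           # W/m2, example value from the text
power_available = power_absorbed_now / absorbed_now  # total power that could be absorbed
extra_power = power_available * (absorbed_doubled - absorbed_now)

print(f"absorbed after doubling: {absorbed_doubled:.4f}")
print(f"extra absorbed power:    {extra_power:.2f} W/m2")
```

Doubling the absorber squares the transmission (0.01 becomes 0.0001), so the absorbed share moves from 99% to 99.99% and the extra absorbed power is about 3.5 W/m2 rather than 350 W/m2.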

We must remark at this moment that 254 K is the temperature of Earth as seen from outer space, irrespective of any greenhouse or other effect. If we, from outer space, point a radiometer at the planet, it will have a temperature signature of 254.0 K. (If we also include the visible light that is reflected ($aS$), then the total radiation power is equal to that of a black sphere with temperature $T = \sqrt[4]{S/\sigma} = 278.3$ K.) The greenhouse effect does not change the apparent temperature of the planet as seen from outer space, but only that of a hidden layer (e.g., the solid surface). Radiation into space effectively comes from the atmosphere at an altitude where the temperature is 254.0 K, which is in the troposphere at about 6 km height.

In this analysis we assume that radiation balances are determining the atmosphere. The temperature can then be found from the radiation intensity by the inverse function of Stefan-Boltzmann. With the emission $\alpha (U + D)\,\mathrm{d}z$ linearly depending on height, this results in a fourth-root curve of height, with T = 288 K at the surface (z = 0) and about 210 K (−60 °C) at the top of the atmosphere. This is obviously incorrect. In the extreme, in this model attributing all greenhouse effect to CO2, doubling c = [CO2] will double α and that will result in a temperature of $T_0 = 313.2$ K, or in other words, a sensitivity of $\Delta T_0 / \Delta[\mathrm{CO_2}] = 25.1\ \mathrm{K} / 350\ \mathrm{ppm} = 71.7\ \mathrm{mK/ppm}$.

A Non-Uniform Atmosphere

In the analysis above, it was assumed that the atmosphere was a homogeneous well-mixed closed box of height h with constant properties everywhere: pressure, concentrations, absorption constants, etc. (Except for a radiation gradient of Figure 4(b)). A real atmosphere, on the other hand, has no upper boundary and is contained by gravity on one side, making it ever thinner with height. We can calculate the properties of such a system, starting with the ideal gas law.

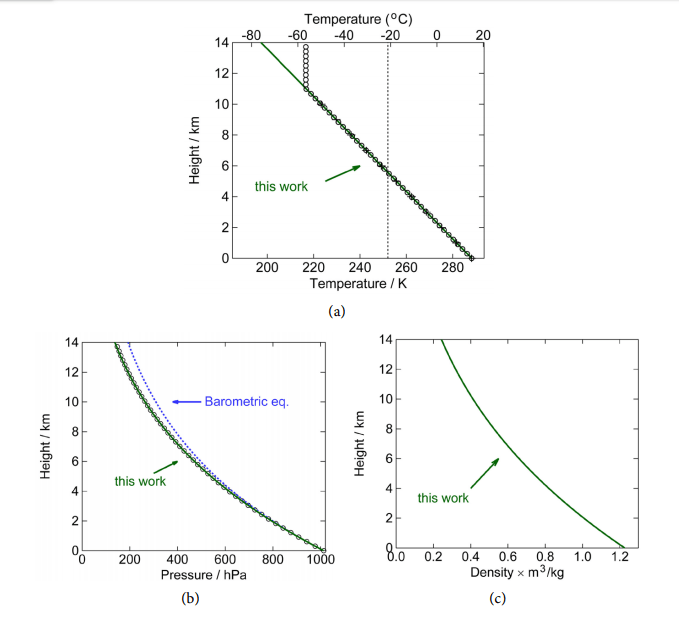

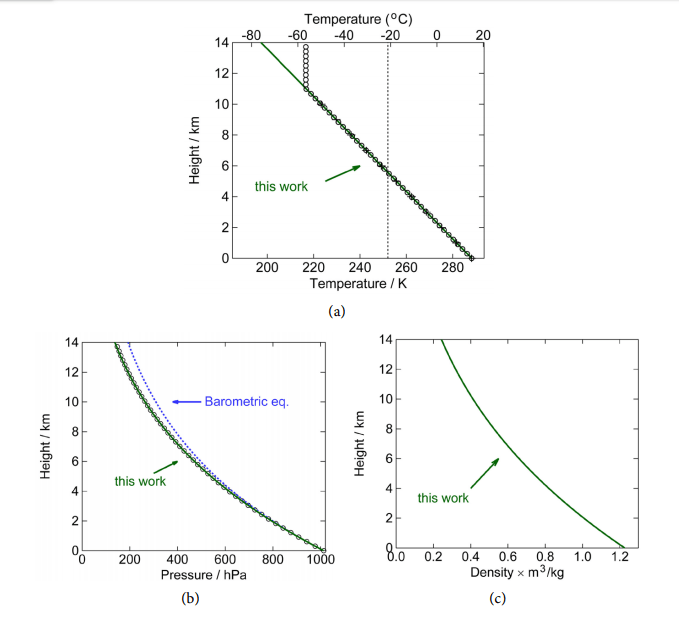

Figure 6. Adiabatic, static atmosphere without heat sources or sinks. (a) Temperature as a function of height; the slope of this linear curve is called the lapse rate and is −6.49 K/km. The black open circles and “+” signs are US standard atmosphere (Refs. [8] and [14], respectively). The green line is Equation (36). The vertical line at 254 K is where the outward radiation apparently is coming from; (b) Pressure as a function of height. The black dots are from Ref. [8]. The blue dashed line is the classical barometric equation, Equation (25), and the green solid line is Equation (38); (c) Density as a function of height, according to Equation (39). (Parameters used: $m = 4.82 \times 10^{-26}$ kg, $c_p = 1.51$ kJ/(kg·K), $T_0 = 15$ °C, $P_0 = 1013.2$ hPa, $g = 9.81$ m/s².) The curves here are indistinguishable from the empirical data; the atmosphere is apparently in thermodynamic equilibrium.

We have to make the very important observation that these curves and equations are independent of any absorption of radiation in the atmosphere, for instance the greenhouse effect discussed earlier (Figure 4(b)). The lapse rate is not caused by radiation, but is a thermodynamic property of the atmosphere. That is, as long as there is thermodynamic equilibrium in the atmosphere, the curves are as given by the above three formulas, which are determined only by the surface temperature $T_0$ and total air weight $P_0$, the physical properties $m$ and $c_p$ of the gas, and the gravitational acceleration $g$. It might, of course, be the case that the atmosphere is not in equilibrium. In that case, mass and heat can be transported through convection, diffusion, conduction and radiation. The latter two transport only heat (and no mass).
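To make the dependence on just these five parameters concrete, here is a minimal sketch of an adiabatic ideal-gas atmosphere. It uses the textbook relations dT/dz = −g/c_p and P = P0 (T/T0)^(m c_p / k); these are assumed to correspond to the paper's equations, which are not reproduced in this synopsis, and the function names are illustrative.

```python
G = 9.81                 # m/s^2, gravitational acceleration (Figure 6 caption)
CP = 1510.0              # J/(kg K), specific heat used in the paper
T0 = 288.15              # K, surface temperature (15 degrees C)
P0 = 101320.0            # Pa, surface pressure (1013.2 hPa)
M_MOLECULE = 4.82e-26    # kg, mean molecular mass of air
K_B = 1.380649e-23       # J/K, Boltzmann constant

def lapse_rate():
    """Adiabatic lapse rate dT/dz in K/m (negative: colder with height)."""
    return -G / CP

def temperature(z):
    """Temperature in K at height z (m), linear in z."""
    return T0 + lapse_rate() * z

def pressure(z):
    """Pressure in Pa at height z (m): P0 * (T/T0)**eta with eta = m*cp/k."""
    eta = M_MOLECULE * CP / K_B
    return P0 * (temperature(z) / T0) ** eta

print(f"lapse rate: {lapse_rate() * 1000:.2f} K/km")
for z_km in (0, 2, 5, 8):
    z = z_km * 1000.0
    print(f"z = {z_km} km: T = {temperature(z):.1f} K, P = {pressure(z) / 100:.0f} hPa")
```

No absorption coefficient or CO2 concentration appears anywhere in the sketch, which is the point the paragraph above makes about the lapse rate.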

These results are phenomenologically equal to the case of the closed-box Beer-Lambert model. It has the same linear radiation forcing behavior of D(0), depending linearly on total amount of absorber in the atmosphere. For instance, doubling the total atmosphere with all constituents in it doubles $P_0$ and that doubles the downward radiation (Equation (45)). Moreover, the dependence of temperature on total amount of [CO2] is likewise of the same behavior. If CO2 is the only gas contributing to the greenhouse effect in the atmosphere, the above number would imply a climate sensitivity of ∆T/∆[CO2] = (25.1 K)/(350 ppm) = 72 mK/ppm. Now, estimates are given that CO2 contributes about 3.62% of the greenhouse effect [18]. Substituting $\sigma_x' = 1.0362\,\sigma_x$ gives a temperature of 289.2 K, and thus a climate sensitivity of s = ∆T/∆[CO2] = (1.0 K)/(350 ppm) = 2.9 mK/ppm.

Failure of the Model

Now, as mentioned before, we have to conclude that this entire idea of an analysis is wrong, and is only performed here to show what values we might get on basis of the (faulty) analysis. Because of before-mentioned heat creep and thermodynamic equilibrium in general, it does not matter where and how the planet is heated (where radiation is absorbed, etc.); what matters is only the total amount of power, $(1-a)S$, absorbed in the Earth system and $U(h)$ emitted by it. In equilibrium they are equal.

For ease of calculation we put it all at the surface, but it makes no difference whatsoever. Thus, as a consequence, and even more important to observe, radiation coming from the surface, $U(0)$, or anywhere else from the atmosphere itself should not be taken into account, since it does not add anything to the total energy input. It is merely a way of redistributing internal heat, just like transport by convection, evaporation, etc., the final distribution of which is given by the equations given here based on ideal gas laws.

The idea that gases in the atmosphere work as some sort of “mirror” to reflect heat back to the surface is incorrect, because a very efficient and functional “mirror” already exists: Because the atmosphere is in thermodynamic equilibrium (as evidenced by the perfect fit of thermodynamic-equilibrium equations to empirical reality, see Figure 6) adding a heat flux F to the system from the top of the atmosphere to the bottom (see Figure 8)—for instance an absorption of IR and reemission downwards—will be fully counteracted by an equal flux F from the bottom of the atmosphere to the top. The net effect will always be zero!

Adding internal radiation to the radiation balance only, and ignoring the annulling other effects, would be allowing an atmosphere to boot-strap itself, heating itself somehow. No object can heat itself to higher temperatures, when it is already in thermal equilibrium. The only radiation that matters is the one coming from the Sun, and it does not matter where and how it enters into the heat balance of the atmosphere. Heat creep (convection, evaporation and radiation) will redistribute the heat to result in the distribution given here (Figure 6); According to Sorokhtin 66.56% by convection, 24.90% by condensation and 8.54% by radiation [19].

An added process (by multiple absorption-emission) might, at best, speed up the equilibration. There is nothing CO2 would add to the current heat balance in the atmosphere, if the outward radiation no longer comes from the surface of the planet, but from a layer high up in the atmosphere. As long as the radiation does not come from the surface, making the layer blacker (more emissive) will radiate—“mirror”—more heat downwards, which is irrelevant (since it will only speed up the rate of thermalization), but also more heat upwards (F’ in Figure 8), cooling down that layer and thermodynamically the surface layer and the entire planet with it! Opening a radiative channel to the cold universe will rather cool an object.

Thermodynamic-Radiative Atmospheric Model

A thermodynamic-radiative model is presented in which each part of the atmosphere is in thermodynamic equilibrium and, moreover, exchanges heat not only by radiation, but also by other ways, such as convection, etc.

This brings us to the final model that will be presented here. It is based on combining the thermodynamic and radiative analyses given above. Considering the fact that the atmosphere is in thermodynamic equilibrium, we must assume that absorbed radiation does get assimilated by the heat bath, just like in classic Beer-Lambert theory. Radiation absorbed is distributed instantaneously all over the atmosphere and the surface. The surface emits with $\sigma T_0^4$; a part λ passes directly to the universe unhindered, and 1 − λ is absorbed. The atmosphere emits too, with total emissivity equal to ε. Since, for radiation properties, it does not matter where the absorbing/emitting mass resides (z), but only how much radiation it receives and what temperature it has, we start by describing the atmosphere ρ and T not as a function of height z, but as a function of temperature T.

The total energy in the atmosphere can easily be calculated. Since in thermodynamic equilibrium all air packages have the same specific energy given by $c_p T + gz$, independent of z, all mass must have specific energy (per kg) equal to that at z = 0, namely $c_p T_0$. The total thermodynamic energy of the atmosphere is then $E_\mathrm{total} = c_p T_0 M$. The atmosphere tries to shed energy by radiation, and receives energy from the surface. The surface also tries to shed energy, either by radiation into the universe, or by transfer to the atmosphere somehow (conduction, etc.). This is an intricate interplay of energy transfer.

We go back to the Beer-Lambert analysis. Once again, this is justified by the assumption that internal absorption is simply recycled back into the system. The heat is rapidly distributed to maintain thermodynamic equilibrium. Radiation leaving the surface, $\sigma T_0^4$, is partly absorbed by the atmosphere and partly transmitted. The absorbed energy goes back to the heat bath $c_p T_0 M$ and is redistributed therein. It does not heat up this bath (that would be counting the solar radiation twice), but reabsorbed energy is heat that was not allowed to escape the system and is thus blocked from cooling it.

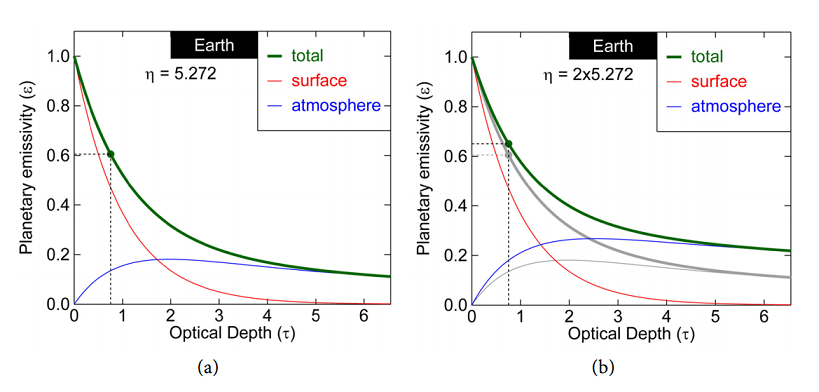

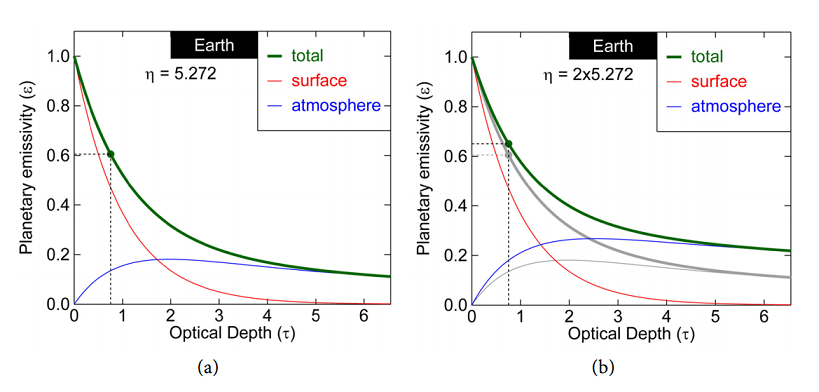

We can now make a plot of the total radiation coming out of the atmosphere as a function of the total optical depth of the atmosphere ($\tau = \sigma_x M / m$). Combining Equations (52) and (55), Figure 9 shows this fraction, which is the planetary emissivity, as a function of optical depth $\tau = \sigma_x M / m$ and thermodynamic molecular heat capacity $\eta = m c_p / k$.

Figure 9. Left: Earth planetary emissivity, the fraction of the radiation emitted by the surface that is escaping from the top of the atmosphere. 1 is like a black body, 0 is white. The red curve is the contribution from the surface and the blue curve from the atmosphere. The sum is shown by the green curve. Right: The effect of augmenting the specific heat $c_p$ of air is an increment of the planetary emissivity, and thus cooling of the surface, if $\eta = m c_p / k$ increases, but also a heating up of the atmosphere from where now more radiation originates. (Gray lines are the situation of the left figure, colored lines are for a doubling of $c_p$.)

The effect of the atmosphere can be found by knowing the thermodynamic parameter and the optical parameter, more precisely the molecular heat capacity, $\eta = m c_p / k$, and optical depth, $\tau = \sigma_x M / m$. The latter is complicated, since it is not simply a matter of a linear function of M. We have to know the complete spectrum. But before we continue, it has to be pointed out that the emissivity equation (Equation (56)) is a monotonically decreasing function of τ for any value of η. That means that increasing the optical depth of the atmosphere will always reduce the emissivity and increase the surface temperature, in contrast to earlier thoughts we had. Reabsorbed radiation goes back to the heat bath and gets a second chance to be radiated out to the universe, maybe through another channel: emitted at a wavelength for which the atmosphere is more transparent, or maybe from a place higher up in the atmosphere.

Comparing to Reality

Section 5 discusses some test cases of the model. They show mixed success.

Mars

The relevant parameters of the NASA Mars Fact Sheet [22] are given in Table 4. Because most of the atmosphere consists of CO2 (sic), the average molecular mass of molecules is close to the value of CO2 (44.01 g/mol); the fact sheet gives 43.34 g/mol [23]. Therefore, the atmosphere has 3.955 kmol/m2 of molecules. 95.32% of that is CO2, that is 3.770 kmol/m2. That is much more than above any point on Earth. Yet, the effect of all that CO2 is unmeasurable. The black body (atmosphereless) temperature is $T_{\mathrm{bb},♂} = 209.8$ K, which can be calculated on basis of the solar irradiance $W_♂ = 586.2$ W/m² and the albedo of Mars ($a_♂ = 0.250$): $\sigma T_{\mathrm{bb},♂}^4 = (1 - a_♂)\, W_♂ / 4$. The real temperature of the Mars surface is just this 210 K, making the measured greenhouse effect within the measurement error.
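The Mars numbers above can be checked in a few lines. The sketch uses only the values quoted in the paragraph plus the CODATA Stefan-Boltzmann constant; the function name `blackbody_temperature` is illustrative.

```python
SIGMA = 5.670374e-8   # W/(m^2 K^4), Stefan-Boltzmann constant

def blackbody_temperature(irradiance, albedo):
    """Equilibrium temperature of a sphere from sigma * T^4 = (1 - a) * W / 4."""
    return ((1.0 - albedo) * irradiance / (4.0 * SIGMA)) ** 0.25

# Values quoted in the text (NASA Mars Fact Sheet)
t_bb_mars = blackbody_temperature(irradiance=586.2, albedo=0.250)

column_total = 3.955     # kmol/m^2, all molecules above a point on Mars
co2_fraction = 0.9532    # share of CO2
column_co2 = column_total * co2_fraction

print(f"Mars black body temperature: {t_bb_mars:.1f} K")
print(f"Mars CO2 column:             {column_co2:.3f} kmol/m^2")
```

The factor 1/4 is the ratio between the cross-section that intercepts sunlight (a disc) and the surface that radiates (a sphere).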

Of course, what is important is not so much how much CO2 is absorbing, but how much is the atmosphere in its entirety absorbing. Better to say, how much it is letting through. Even with CO2 fully saturated, nearly all radiated heat easily escapes the atmosphere. A tiny unmeasurable effect remains. Now, doubling it will have no effect. Imagine 1% of the spectrum is covered, in which part 90% of the radiation is absorbed. Thus 99.1% of all radiation escapes. Doubling this constituent will make the absorption in that 1% part only go to 99%; still 99.01% of all radiation escapes. In this particular case of Mars, CO2 has little effect in whatever quantity it is in the atmosphere.
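The arithmetic of this thought experiment is easy to reproduce. The sketch assumes a single absorbing band covering a fixed share of the emission spectrum, with Beer-Lambert absorption inside the band and full transparency outside it; the function names are illustrative.

```python
def escaping_fraction(band_share, band_absorption):
    """Fraction of surface radiation escaping when only one band absorbs."""
    return 1.0 - band_share * band_absorption

def absorption_after_doubling(absorption):
    """Beer-Lambert: doubling the absorber squares the transmission."""
    transmission = 1.0 - absorption
    return 1.0 - transmission ** 2

band_share = 0.01        # 1% of the spectrum is covered (from the text)
band_absorption = 0.90   # 90% absorbed inside that band (from the text)

before = escaping_fraction(band_share, band_absorption)
doubled_absorption = absorption_after_doubling(band_absorption)
after = escaping_fraction(band_share, doubled_absorption)

print(f"escaping before doubling: {before:.4f}")
print(f"absorption in band after doubling: {doubled_absorption:.2f}")
print(f"escaping after doubling:  {after:.4f}")
```

The escaping share moves only in the fourth decimal place, from 99.1% to 99.01%, because the band was a small part of the spectrum to begin with.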

Earth

Ångström, in his classical work, wrote “[…] it is clear, first, that no more than about 16 percent of earth’s radiation can be absorbed by atmospheric carbon dioxide, and secondly, that the total absorption is very little dependent on the changes in the atmospheric carbon dioxide content, as long as it is not smaller than 0.2 of the existing value” [5].

This basically states that there is no further contribution to the greenhouse effect from CO2 for concentrations above about 60 ppm. It is another way of saying that a radiation window that is closed cannot further contribute to the greenhouse effect. That is, as long as there are other windows still open. This is not true, however, because absorption lines have tails that can never saturate; absorption can be much larger. A HITRAN simulation of only CO2 absorption (with concentrations as found in the Earth atmosphere) results in absorption of 17.4% (see Figure 11; direct emission 82.6%), close to the value estimated by Ångström. Yet, as we have seen, for Mars, with 30 times more CO2, this absorption is 26.4%. It shows the complexity of the subject. This manuscript is not about numerical simulations, but about analytical understanding of the greenhouse effect. We will now make an empirical estimation here in the framework of our analytical model.

The real emissivity of Earth (surface plus atmosphere) can easily be determined on basis of empirical data, according to Equation (57), where we defined the emissivity through the ratio of radiation coming out to the radiation emitted by the surface. Taking the thermodynamic parameters ($m$, $c_p$) as constant and as used before (Table 3), from the equations we find that this value occurs for an optical depth of τ = 0.754 (see the green dot of Figure 9). We can even see that 77.7% (0.470) of the radiation going into space comes from the surface and 22.3% (0.135) from the atmosphere. This contrasts with the notion mentioned earlier that the radiation comes from 6 km altitude (most still comes from the surface), and also contrasts with the value of 28.5% direct emission found earlier for a radiation-only atmosphere. Yet, we also see that if the opacity of the atmosphere increases, more radiation, also in absolute terms, comes from the atmosphere. This is the cooling effect of the atmosphere described earlier.
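As a rough consistency check on these numbers, the sketch below assumes that the emissivity of Equation (57) is the ratio of the Stefan-Boltzmann fluxes at the effective temperature (254 K) and the surface temperature (288 K) quoted earlier. That reading of the definition, and the variable names, are assumptions made for illustration.

```python
t_effective = 254.0   # K, temperature of Earth as seen from space (from the text)
t_surface = 288.0     # K, surface temperature (from the text)

# Ratio of outgoing flux to surface-emitted flux; sigma cancels out
emissivity = (t_effective / t_surface) ** 4

surface_part = 0.470      # contribution emitted directly by the surface
atmosphere_part = 0.135   # contribution emitted by the atmosphere
total = surface_part + atmosphere_part

surface_share = surface_part / total
atmosphere_share = atmosphere_part / total

print(f"emissivity from temperatures: {emissivity:.3f}")
print(f"sum of the two contributions: {total:.3f}")
print(f"surface share:    {surface_share:.1%}")
print(f"atmosphere share: {atmosphere_share:.1%}")
```

Under that assumption the temperature ratio and the sum of the two quoted contributions agree to three decimals, and the shares match the 77.7% and 22.3% in the text.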

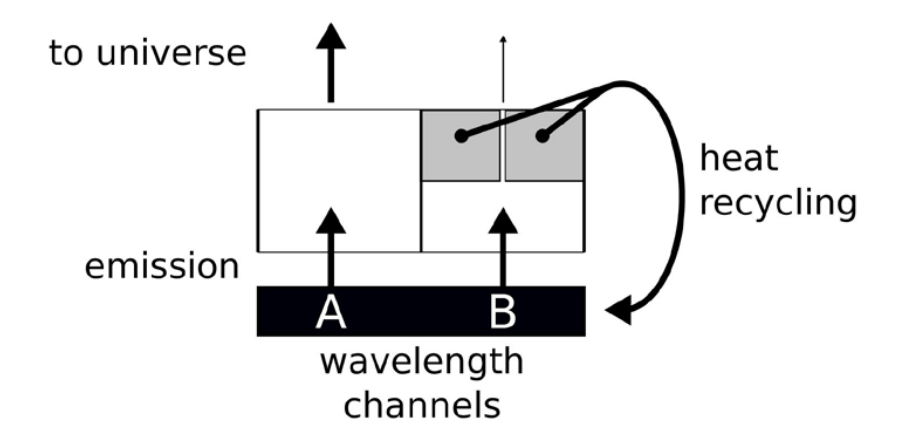

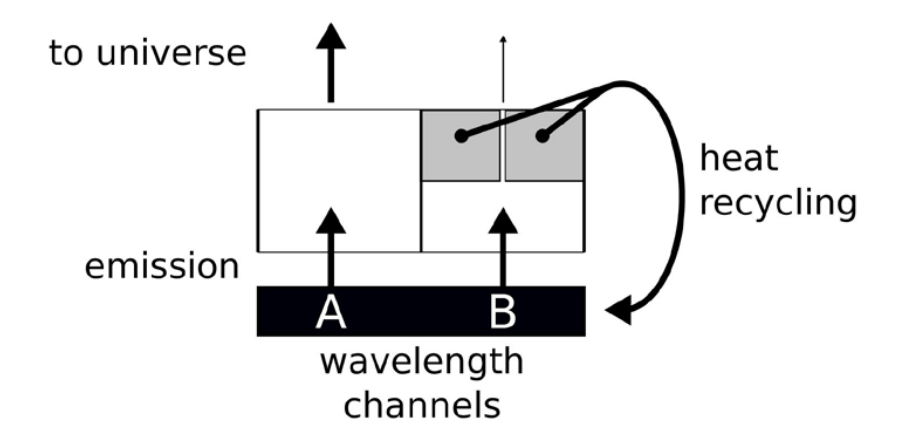

Figure 13. Schematic picture of what happens at Earth. Two channels: one (A) is fully open and one (B) is nearly closed. Closing B further has little-to-no effect.

However, the situation is much closer to situation (f) of Figure 10. That is, a part of the spectrum emission is fully open, and the part of CO2 is as good as closed (shown black in the figure). This situation is depicted in Figure 13, with an open channel A and a closed channel B. Now, further closing the channel (B) that is already as good as closed has little-to-no effect, as long as a significant part of the rest of the spectrum is open.

Note that this is not envisaged in a radiation balance analysis.

In that model, once a heat package has “decided” to opt for a certain wavelength, the only way to make it out of the atmosphere is by multiple emission-absorption events, or crawling its way back to the surface. As such, adding CO2 to a closed channel still has a lot of impact. In the current thermodynamic-radiative model, absorbed radiation is given back to the heat bath that is the surface plus the atmosphere ($c_p T_0 M$), from where it can have a second chance of escaping into the universe, by the same or by a different channel. To say it in another way, the best-case climate sensitivity of CO2 is zero. The optical length of CO2 in the atmosphere is about 25 m. That is, 25 meters up in the air the radiation emitted by the surface in the spectrum of CO2 is already attenuated by a factor e. In this 25-m layer resides only a 1/1773 part of the atmosphere (and CO2). The total transmission of the entire atmosphere is thus $\exp(-1773)$, which any calculator shows as zero. Doubling the CO2 in the atmosphere will have no measurable effect: $\exp(-3546) \approx 0$. Heat had no chance of escaping to the universe through this channel, and now even less so. As long as there is a sliver of the emission spectrum for which the atmosphere is transparent, the effect of doubling agents such as CO2 that have a spectrum that is close to saturation is close to nil. This is the lower limit of the effect.
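The "any calculator shows zero" remark can be tried directly. The sketch takes the 1773 attenuation lengths from the text at face value and evaluates the transmission in double precision, where the result underflows to exactly zero; the base-10 exponent is computed separately to show the actual order of magnitude.

```python
import math

attenuation_lengths = 1773   # number of e-folding layers in the column (from the text)

transmission = math.exp(-attenuation_lengths)
transmission_doubled = math.exp(-2 * attenuation_lengths)

# The true value is far below the smallest positive double, so express it as a power of ten
log10_transmission = -attenuation_lengths / math.log(10)
log10_transmission_doubled = 2 * log10_transmission

print(f"exp(-1773) in double precision: {transmission}")
print(f"exp(-3546) in double precision: {transmission_doubled}")
print(f"exp(-1773) is about 10^{log10_transmission:.0f}")
print(f"exp(-3546) is about 10^{log10_transmission_doubled:.0f}")
```

Both transmissions are indistinguishable from zero, so in this picture doubling the absorber changes nothing measurable in the saturated band.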

Sevilla-Córdoba-Granada

In this thermodynamic analysis, the temperature at a point on the planet is, for a certain radiative input $(1-a)S$, mainly determined by the altitude z. The radiative greenhouse effect states it mainly depends on the total amount of carbon dioxide floating above the point. To test these hypotheses, we can look at cities with the same or similar radiative solar input, at the same latitude on the planet, but at different altitudes. Without doing an exhaustive study, we take as example three neighboring cities in the south of Spain, namely Sevilla, Córdoba and Granada, each at a different elevation (Table 5).

The question now is, why is Granada not much warmer in 2019 than Sevilla was in 1951? It is actually still colder. This seriously undermines the idea that carbon dioxide is determining the temperature on our planet. Figure 14 plots the temperature of these cities versus the carbon content above them. The linear regression quality parameter is $R^2 = 0.21$, meaning that temperature is not well correlated with [CO2].

Venus

When we look at reality, the greenhouse effect on Venus is enormous. Comparing the real temperature 737 K with the blackbody temperature based on the solar radiance and albedo of only 226.6 K, we determine that the emissivity of Venus is very small: 0.00894. For these values we see that the radiation no longer comes from the surface at all, but from the atmosphere instead, which is very opaque with an optical depth τ of about 81 (see Figure 12). Venus connects to the universe at a high altitude in the atmosphere. The heat finds its way to the surface by thermodynamic means, resulting in a high surface temperature.
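The Venus emissivity follows from the two temperatures quoted above, assuming the emissivity is the ratio of the black body flux to the flux emitted by the surface, so that the Stefan-Boltzmann constant cancels. The function name is illustrative.

```python
def planetary_emissivity(t_blackbody, t_surface):
    """Ratio of outgoing flux to surface-emitted flux: (T_bb / T_surface)^4."""
    return (t_blackbody / t_surface) ** 4

t_bb_venus = 226.6        # K, black body temperature (from the text)
t_surface_venus = 737.0   # K, real surface temperature (from the text)

eps_venus = planetary_emissivity(t_bb_venus, t_surface_venus)

# Equivalent statement: how many times hotter the surface is than the black body value
temperature_ratio = eps_venus ** -0.25

print(f"Venus emissivity: {eps_venus:.5f}")
print(f"surface / black body temperature: {temperature_ratio:.2f}")
```

Because the temperature enters to the fourth power, a surface a little over three times hotter than the black body value corresponds to an emissivity below one percent.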

Geological Time Scales

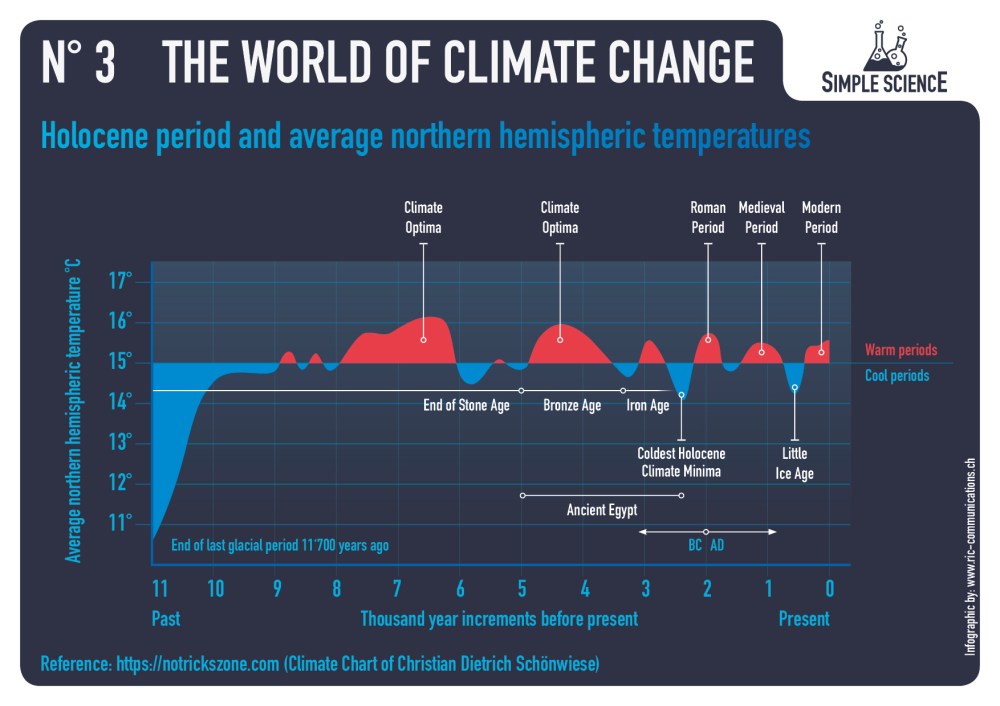

Figure 16. (a) (Holocene) ten-thousand-year time scale data of temperature (blue curve) and [CO2] (purple curve) of Greenland; (b) A correlation curve shows an absence of correlation between the two on this time scale. Plots made with the help of WebPlotDigitizer 4.2 [29] from a plot based on data of GISP 2 (temperature) and EPICA C (CO2), found at Climate4You [31].

Another experiment nature throws at us is the geological time scale, from times long before humans appeared on this planet. Figure 15 shows these data and it is obvious that in this time scale there is no correlation between carbon-dioxide concentrations in the air and surface temperatures. In fact, a fitting to the data results in a correlation of dT/d[CO2] = -0.43 mK/ppm, which is probably accidental, because we do not know any theory that might explain an inverse correlation between the two quantities. A similar non-correlation we see in the Holocene data of Greenland, see Figure 16. Here a (pseudo)correlation of dT/d[CO2] = -0.43 mK/ppm is found, two orders of magnitude more than in the paleontological data of Figure 15. These results undermine the hypothesis that only CO2 is climate forcing, something that some climatologists claim.

Other Effects: Feedback, Delay, Water

Section 6 augments the model by including feedback and secondary effects, such as water. It also tries to establish relaxation times of the system.

Delay

We might think that the atmosphere did not have time yet to reach the new equilibrium. The observed effects are then always less than the one calculated. For the greenhouse effect, however, we need to explain that the signal is larger than theory predicts. Considering the fact that our calculations may be wrong, we can, still, make an estimation about how long it takes to reach the equilibrium, and turn the observed short-term contemporary 10.2 mK/ppm into the observed long-term 95 mK/ppm. The specific heat capacity of air is $c_p = 1.51$ kJ/(K·kg). The pressure is 1013.2 hPa, so the mass density is $M = P_0 / g = 10.3 \times 10^3$ kg/m². We found a radiative forcing of $w = 8.6$ mW/m² per ppm (Equation (61)), and a temperature effect of ∆T/∆[CO2] = 3.3 mK/ppm (Equation (60)). The characteristic temperature adjustment time of just the atmosphere alone is then 46 days. That is about a month and a half, a value very similar to the one we found empirically in the phase shift of yearly-periodic solar radiation and temperature data, namely about 1.2 months [32], and to a simple relaxation analysis of daily temperature variations, which gives 23 days [1]. We can thus exclude any substantial delay effects in the greenhouse effect [CO2] → T on the time scales of the contemporary and ice-core-drilling datasets, 60 a and 600 ka, respectively. On the other hand, we can expect long delays between T and [CO2] in the framework of Henry’s Law. Imagine the atmosphere warms up for some reason (maybe solar activity). This warmed up air must then heat up the relevant layer of the ocean and expose this layer to the surface where the surplus CO2 can outgas.

Water Effect

Water has several effects. First, it will change the specific heat of the air ($c_p$) and thus the lapse rate of the atmosphere, Equation (36). This will slightly lower the temperature at the surface. As a secondary effect, it will dramatically increase the absorption cross section for infrared, and this will change the ground temperature $T_0$. The feedback effect of water on outgoing radiation is beyond the Barkhausen criterion and thus, any addition of water to the atmosphere, however tiny, would result in a run-away scenario. However, this effect is fully canceled by the albedo effect, resulting in a net negative effect of water on the temperature.
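The run-away remark above rests on a general property of feedback loops, which a few lines of code can illustrate. This is a minimal sketch with hypothetical loop-gain values that are not taken from the paper: each pass through the loop adds the initial change plus a fraction (the loop gain) of the previous response. Below a loop gain of 1 the response settles at initial / (1 - gain); at or above 1 it grows without bound.

```python
def feedback_response(initial_change, loop_gain, steps=200):
    """Accumulated response after `steps` passes through a feedback loop.

    Each pass computes: response = initial_change + loop_gain * response.
    For |loop_gain| < 1 this approaches initial_change / (1 - loop_gain).
    """
    response = 0.0
    for _ in range(steps):
        response = initial_change + loop_gain * response
    return response


# Hypothetical loop gains chosen only to show the two regimes.
stable = feedback_response(1.0, 0.5)    # settles near 1 / (1 - 0.5) = 2
runaway = feedback_response(1.0, 1.1)   # grows with every additional pass

print(f"loop gain 0.5 -> response {stable:.3f}")
print(f"loop gain 1.1 -> response {runaway:.3e}")
```

A negative loop gain, such as the net negative effect the paragraph attributes to water once albedo is included, damps the response below the initial change.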

Conclusions

We have analyzed here the greenhouse effect, using fully analytical techniques, without resorting anywhere to finite-element calculations. This gave important insight into the phenomenon. An important conclusion is that an analysis in terms of radiation balances only cannot explain the situation in the atmosphere. In the extreme case, a differential equation of layers with absorption coefficients, etc., gave the same results as a much simpler 2-box mixed chamber model. However, the underlying assumptions in these calculations are not physical.

Therefore we set out to model the greenhouse effect ab initio, and came up with the thermodynamic-radiation model. The atmosphere is close to thermodynamic equilibrium and based on that we can calculate where and how radiation is absorbed and emitted. This model can explain phenomenologically and analytically how big the effect of the atmosphere is, specifically Equations (56) and (58).

Continuing with the reasoning, we find that the alleged greenhouse effect cannot explain the empirical data: orders of magnitude are missing. Henry’s Law (outgassing of oceans), by contrast, can easily explain all observed phenomena.

Moreover, the greenhouse hypothesis—as presented here—cannot explain the atmosphere on Mars, nor can it explain the geological data, where no correlation between [CO2] and temperature is observed. Nor can it explain why a different correlation is observed in contemporary data of the last 60 years compared to historical data (600 thousand years).

We thus reject the anthropogenic global warming (AGW) hypothesis, on the basis of both empirical grounds and theoretical analysis.

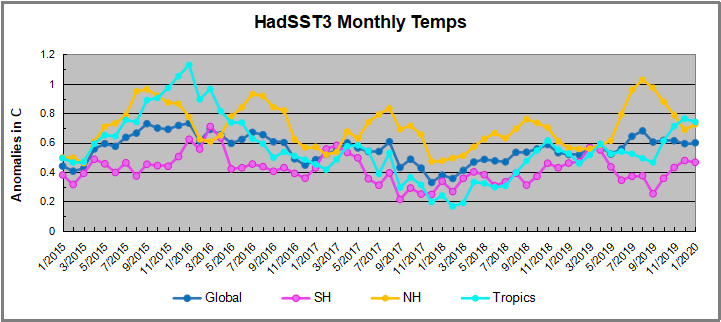

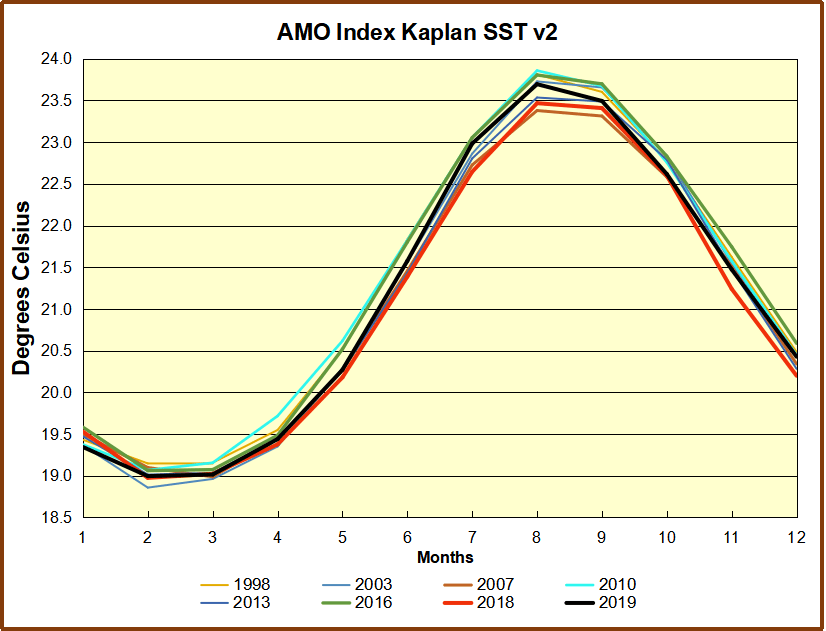

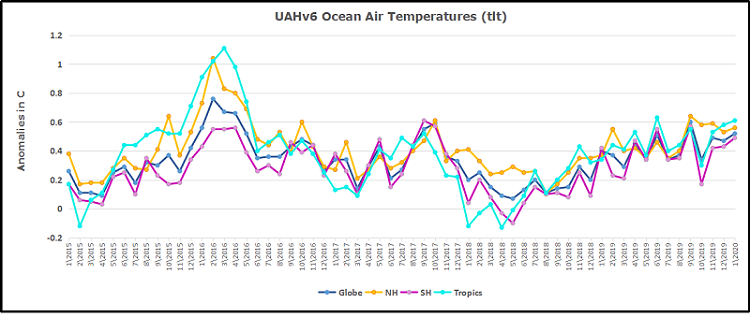

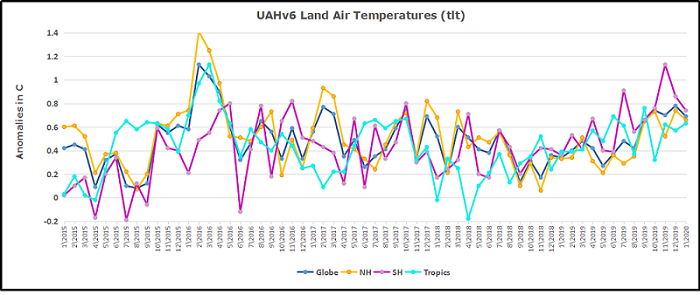

The best context for understanding decadal temperature changes comes from the world’s sea surface temperatures (SST), for several reasons:

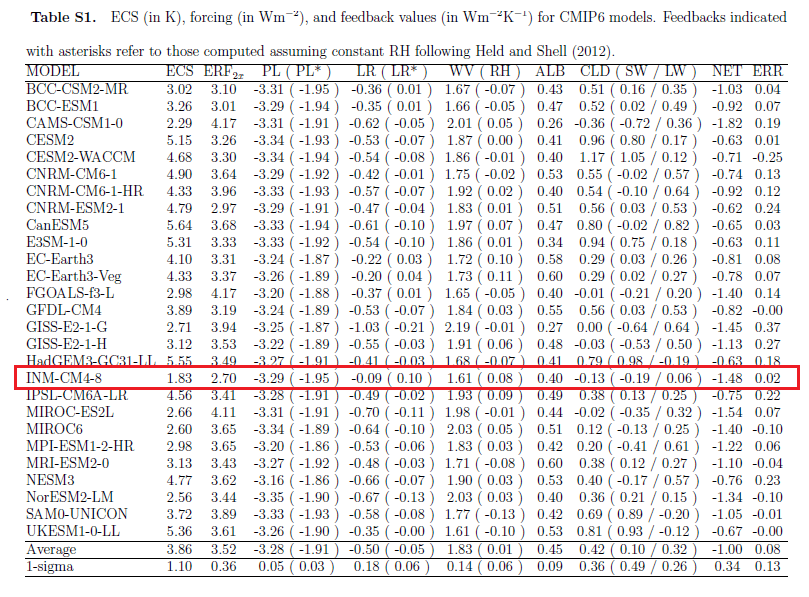

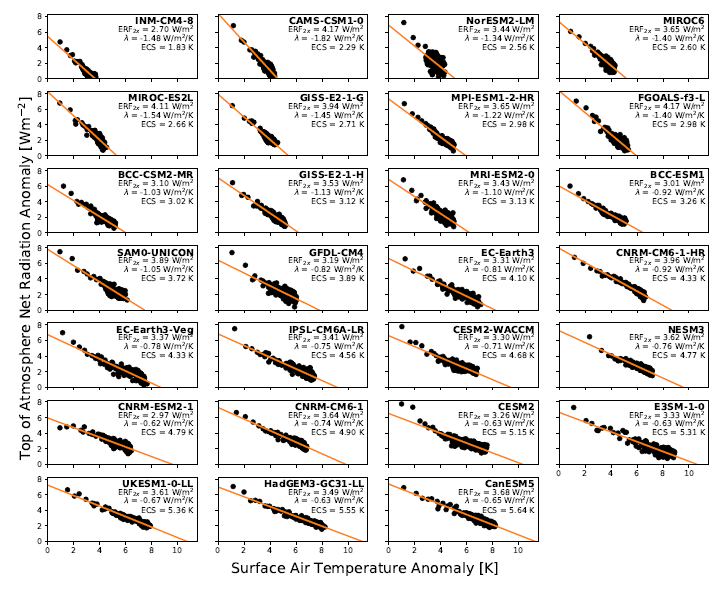

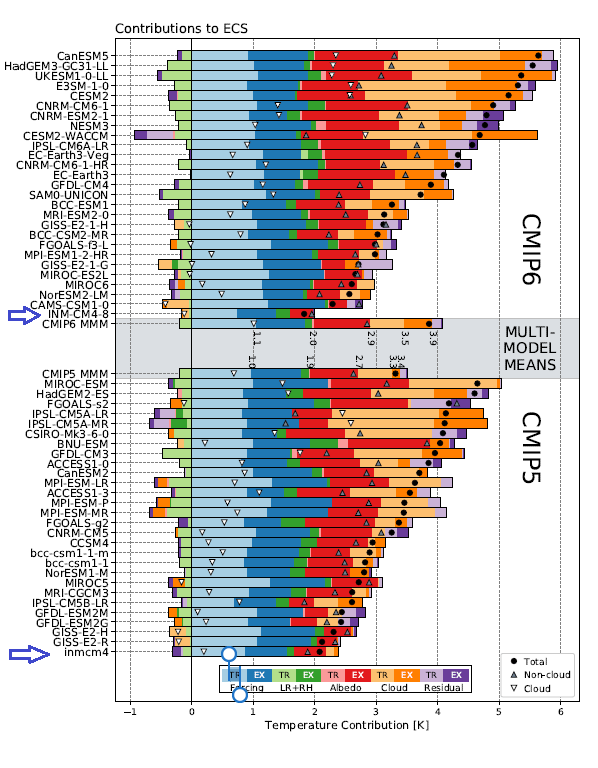

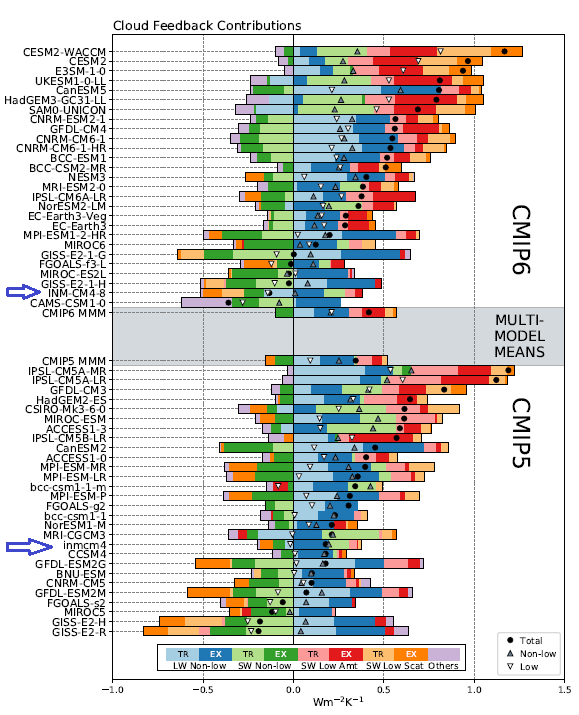

Several posts here discuss INM-CM4, the Good CMIP5 climate model, since it alone closely replicates the HadCRUT temperature record, as well as approximating BEST and satellite datasets. This post is prompted by recent studies comparing various CMIP6 models, the new generation intending to hindcast history through 2014, and forecast to 2100.

The quantities involved are the net TOA radiative flux anomaly, the radiative forcing, the radiative feedback parameter, and the global mean surface air temperature anomaly. The net flux anomaly is positive down and the feedback parameter is negative for a stable system.

The radiative forcing due to doubled CO2 has increased slightly on average in CMIP6 and its intermodel standard deviation has been reduced by nearly 30% from 0.50 W m^-2 in CMIP5 to 0.36 W m^-2 in CMIP6 (Figure 1b).