Rise of the Dominion Effect

In 2008, although Dominion was in many counties in New York and had an insignificant presence in Wisconsin, it had no presence in the rest of the USA. Dominion built up its presence in 2012, increased it in 2016, and increased it further in 2020.

Background from Shocking History of Dominion Voting. Excerpts in italics with my bolds.

Dominion Voting Systems Corp. is the Canadian company behind the ballot switching software.

Dominion was founded in 2003, with a mission to provide electronic voting systems friendly for progressives. Because of such partisanship, it languished with almost no customers for the next 5-6 years, until the Obama administration came to power. In 2010, the Obama administration confiscated electronic voting systems assets (software, intellectual property, manufacturing tools, customer base, etc.) from two established American companies, and gave them to Dominion. At the same time, Dominion got some employees and assets from a foreign EVS company, tied to Hugo Chavez.

Its software has been used by some 40% of the voters in this election, mostly by Democrat-controlled states and election commissions. Apparently, no protections were put in place against ballot switching, deletion, or creation. According to Dominion’s own website, its software was used in “battleground” states and the largest Democrat states, including MI, GA, AZ, NV, NM, CO, AK, UT, NJ, CA, NY.

The Dominion Effect on Vote Counting

From Fraudspotters: Statistical Evidence of Dominion Election Fraud? Time to Audit the Machines. Excerpts in italics with my bolds.

Overview

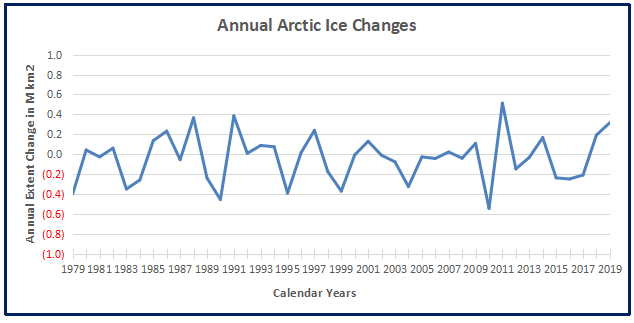

Statistical analysis of past presidential races supports the view that in 2020, in counties where Dominion Machines were deployed, the voting outcomes were on average (nationwide) approximately 1.5% higher for Joe Biden and 1.5% lower for Donald Trump after adjusting for other demographic and past voting preferences. Upon running hundreds of models, I would say the national average effect appears to be somewhere between 1.0% and 1.6%.

For Dominion to have switched the election from Trump to Biden, it would have had to have increased Biden outcomes (with a corresponding reduction in Trump outcomes) by 0.3% in Georgia, 0.6% in Arizona, 2.1% in Wisconsin, and 2.5% in Nevada. The apparent average “Dominion Effect” is greater than the margin in Arizona and Georgia, and close to the margin for Wisconsin and Nevada. It is not hard to picture a scenario where the actual effect in Wisconsin and Nevada was greater than the national average and would have changed the current reported outcome in those two states.

Assuming the “Dominion Effect” is real, it is possible that an audit of these machines would overturn the election.

These results are scientifically valid and typically have a p-value of less than 1%, meaning that if Dominion had no effect, a difference at least this large would be expected to arise by chance less than 1 time in 100. This article and its FAQ show many ways to model the “Dominion Effect.”

The best way to restore faith in the system is to audit the Dominion voting machines in Arizona, Georgia, Nevada, and Wisconsin.

Discussion

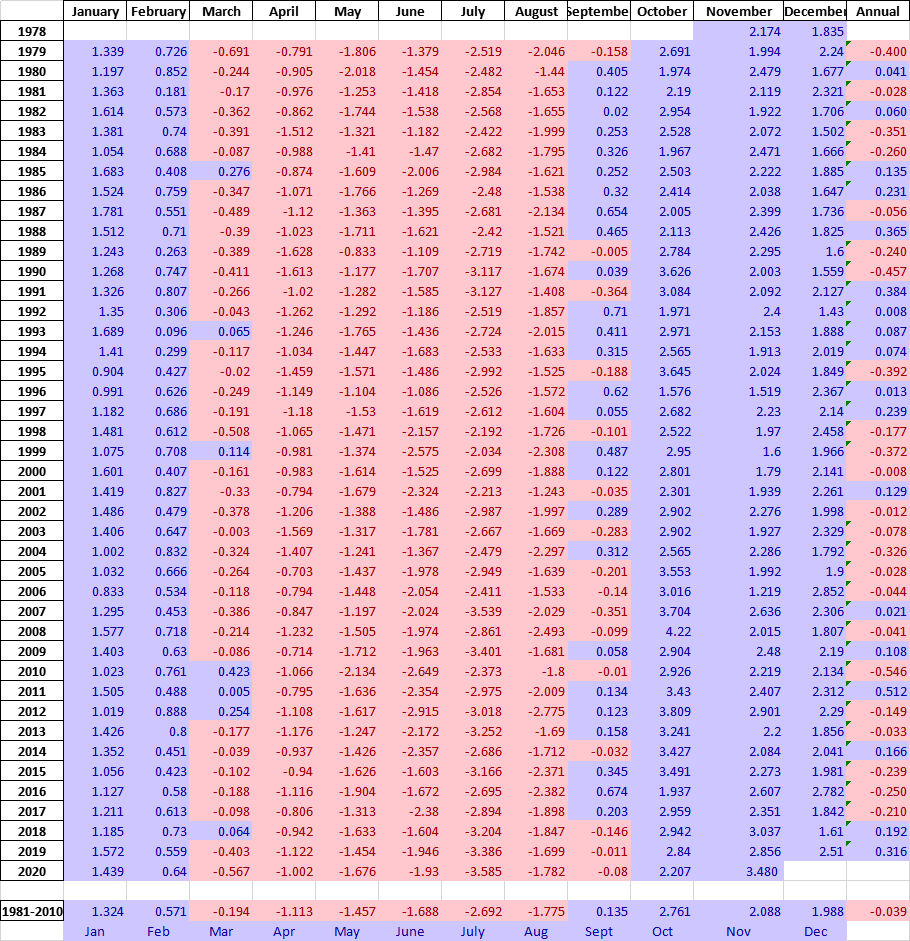

To do this study, we will link results from 2008 to 2020 by each county, parish, or in some cases city. Since this is usually based on county, we will refer to it as county in this article.

By comparing each county to itself, we are constructing a test similar to the way a drug company would test the effects of its proposed therapy. In this case, we have 3,050 counties that did not have Dominion in 2008. In 2020, 657 of the counties have Dominion while 2,388 do not. If we assume that the same societal forces are acting upon all of these counties equally, then in comparing the average change from 2008 to 2020 for Dominion counties versus non-Dominion counties, we should see a similar change in voter share. In this regard, it is as if Dominion is the proposed treatment, and non-Dominion is the placebo.

When doing this analysis, we do NOT see a change that is constant across counties. In fact, below are the results comparing 2008 to 2020. A verbal description is “the average US county’s percentage of vote for the Democratic presidential candidate was 8.4 percentage points lower in 2020 (Biden vs. Trump) than in 2008 (Obama vs. McCain). However, despite this 8.4-point decrease, Dominion counties decreased only 6.4 points, while the non-Dominion counties decreased 9.0 points.”
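As a quick consistency check on the figures quoted above, the overall average should be the count-weighted average of the two group averages. The short sketch below uses only the numbers stated in the text; the variable names are mine. Note that the two group counts sum to 3,045 rather than the 3,050 mentioned earlier, and the text does not explain the difference.

```python
# Figures quoted in the text: change in Democratic share, 2008 to 2020,
# in percentage points, and the number of counties in each group.
dominion_counties = 657
other_counties = 2388
dominion_change = -6.4
other_change = -9.0

# The overall average is the count-weighted average of the group averages.
total_counties = dominion_counties + other_counties
overall_change = (
    dominion_counties * dominion_change + other_counties * other_change
) / total_counties

# Raw (unadjusted) gap between the two groups.
raw_gap = dominion_change - other_change

print(f"overall change: {overall_change:.1f} points")
print(f"raw gap between groups: {raw_gap:.1f} points")
```

The raw gap is only a starting point: it makes no adjustment for how the two groups of counties differ in other respects.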

Unlike a drug company’s test of a new treatment, our counties were not randomly selected to be “treated” by Dominion. These counties chose to install Dominion. Was there selection bias? We should control for other factors to see if the presence of Dominion still significantly affects results.

We can obtain demographic data on a county-level basis from the U.S. Department of Agriculture. By attaching this data on a county basis to our already existing dataset, and running multiple linear regression, we obtain the following results. You’ll notice that Dominion’s p-value became more significant as we controlled for other variables. In some cases Dominion is more significant than the control variables.

To provide a basic interpretation, look at the sign of the coefficient. It is telling you whether the demographic factor increased or decreased Democratic presidential voter percentage. So, from 2008 to 2020:

- The more rural, the less the Democratic share

- The more manufacturing dependent, the less the Democratic share

- The more a county is considered a “high natural amenity,” the more the Democratic share if we consider counties equally weighted but not if we give larger counties more weight. Note this variable has a less significant p-value than some of the others.

- The more a county is considered “high creative class,” the more the Democratic share

- The more a county is considered “low education,” the more the Democratic share

- The more the population increased, the more the Democratic share

- The more international immigration, the more the Democratic share, although one measure had this value with a questionable p-value.

- And most importantly, if Dominion was installed, there was approximately a 1.5%-point increase in Democratic share which also corresponds to a 1.5%-point Republican decrease, so a total swing of 3% points.
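To make the mechanics of "controlling for other factors" concrete, here is a minimal sketch of a multiple linear regression with an indicator variable and one control. Everything in it is an assumption for illustration: the data are synthetic, the coefficients (-5.0, 1.5, -0.8) are invented, and the `solve` and `ols` helpers are my own, not the original author's code. It does not reproduce or test the article's results; it only shows how a raw gap between two groups can differ from the regression coefficient when the groups differ on a control variable.

```python
import random

def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        known = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - known) / m[r][r]
    return x

def ols(rows, y):
    """Ordinary least squares via the normal equations (X'X) beta = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)

# Synthetic counties (hypothetical): the indicator is more common in less
# rural counties, so the two groups differ on the control variable.
rng = random.Random(0)
rows, y = [], []
for _ in range(500):
    rural = rng.randint(1, 9)  # stand-in for a rural-urban code
    flag = 1 if rng.random() < (0.7 if rural <= 4 else 0.1) else 0
    rows.append([1.0, float(flag), float(rural)])
    y.append(-5.0 + 1.5 * flag - 0.8 * rural)  # invented relationship, no noise

intercept, flag_coef, rural_coef = ols(rows, y)

# Raw difference in group means, ignoring the control variable.
flagged = [yi for r, yi in zip(rows, y) if r[1] == 1.0]
unflagged = [yi for r, yi in zip(rows, y) if r[1] == 0.0]
naive_gap = sum(flagged) / len(flagged) - sum(unflagged) / len(unflagged)

print(f"raw gap: {naive_gap:.2f}, coefficient with control: {flag_coef:.2f}")
```

In this toy setup the raw gap overstates the built-in coefficient because the flagged counties are also less rural. A regression can only adjust for the variables it includes, so any factor left out of the model can still be absorbed into the indicator's coefficient.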

If the “Dominion Effect” is real, would it have affected the election?

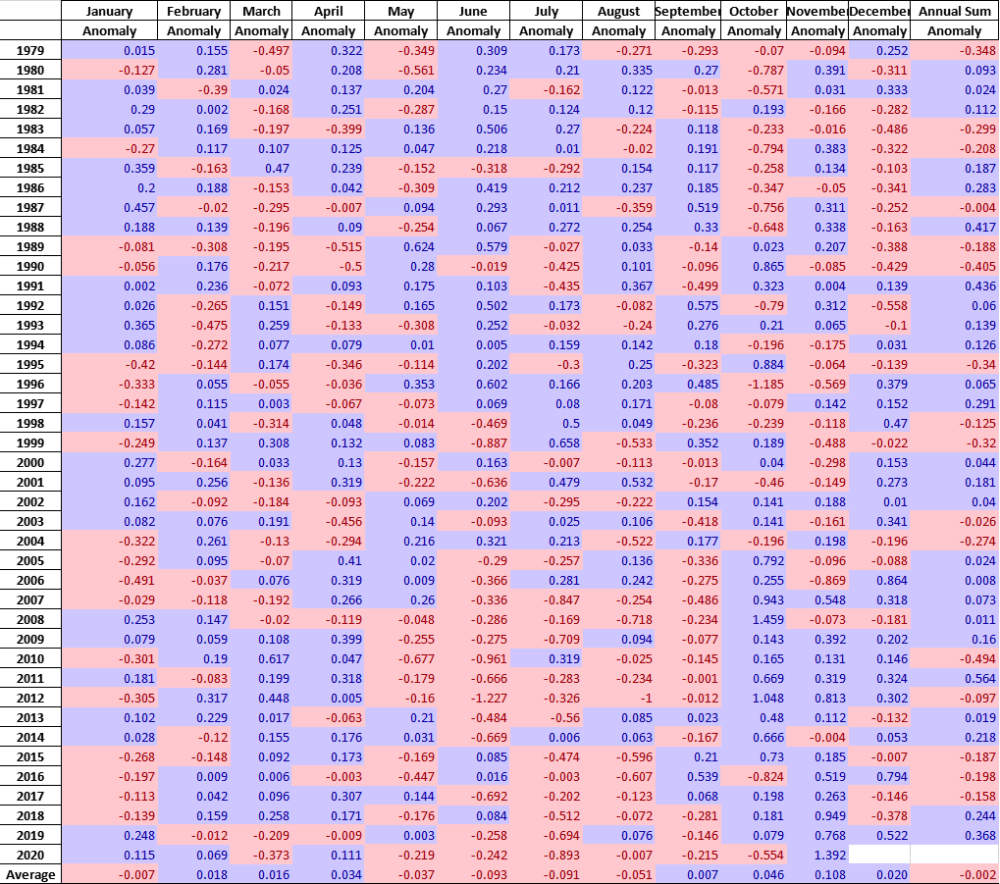

This article showed a range of estimates for the “Dominion Effect,” the more persuasive being from the multiple linear regression analysis:

Multiple Linear Regression, Ordinary Least Squares: 1.65%

Multiple Linear Regression, Weighted Least Squares: 1.55%

I find the weighted least squares model the most persuasive and refer to it often in the FAQ.
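The difference between the ordinary and weighted estimates can be seen in the simplest possible case. When the only regressor is an indicator (plus an intercept), the ordinary least squares coefficient equals the difference in simple group means, and the weighted least squares coefficient equals the difference in weighted group means. The sketch below uses four hypothetical counties with made-up voter counts and changes; none of these numbers come from the article's dataset.

```python
# Hypothetical counties: (voters, has_indicator, change in share in points).
counties = [
    (1000, True, -6.0),
    (9000, True, -4.0),
    (2000, False, -9.0),
    (8000, False, -7.0),
]

def group_mean(rows, flag, weighted):
    """Mean change for one group, optionally weighted by number of voters."""
    selected = [(voters, change) for voters, f, change in rows if f == flag]
    if weighted:
        total_voters = sum(voters for voters, _ in selected)
        return sum(voters * change for voters, change in selected) / total_voters
    return sum(change for _, change in selected) / len(selected)

# Every county counts equally (what OLS on the indicator alone would give).
unweighted_gap = group_mean(counties, True, False) - group_mean(counties, False, False)

# Larger counties count more (what voter-weighted WLS would give).
weighted_gap = group_mean(counties, True, True) - group_mean(counties, False, True)

print(f"unweighted gap: {unweighted_gap:.1f} points")
print(f"voter-weighted gap: {weighted_gap:.1f} points")
```

Weighting answers a slightly different question: the unweighted figure describes the typical county, while the weighted figure is closer to describing the typical voter.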

If there is a Dominion Effect, it adds that percentage to the Democratic presidential vote and subtracts it from the Republican vote. If the Dominion Effect is real, it may have affected this close election. For Dominion to have switched the election from Trump to Biden, it would have had to increase Democratic presidential outcomes by 0.3% and reduce Republican outcomes by 0.3% in Georgia. The corresponding figures for the other states are 0.6% in Arizona, 2.1% in Wisconsin, and 2.5% in Nevada.

If you believe the Dominion Effect is real, it is not hard to believe that this effect would be greater in swing states and could have swung these four states into Biden’s column, putting the electoral college in his favor.

Are there really enough machines in Wisconsin to have changed the outcome there?

If you go to verifiedvoting.org, select Dominion, 2020, and Wisconsin, and download the data, you’ll see that it lists 527 precincts with 640,215 registered voters on Dominion machines. The state only has a 20k vote difference between Biden and Trump. In my paper, the Dominion effect was calculated on a county basis, not a precinct basis. To the extent counties are split on which machine they used, my paper is underestimating the Dominion effect: the effect is likely bigger on a precinct-by-precinct basis, but I don’t have the data to go to that detail.

But to answer the question: yes, based on published, public information, there are enough machines to change the election in Wisconsin.

Have you really accounted for very large and very small counties?

In our model, we are already using these adjustments:

- weighting by county size

- a field called “RuralUrbanContinuumCode2013”

These should adjust for county size, but in an effort to address readers’ concerns, I ran the model with two new flags:

- 657 counties with highest number of voters in 2008

- 657 counties with lowest number of voters in 2008

The Dominion Effect is still 1.55% and the p-values are 0.00% (traditional) and 0.09% (robust). These p-values suggest a less than 1 in 1,000 chance of occurring randomly.
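The two size flags described above can be built by ranking counties on their 2008 voter counts. The sketch below is one way to do it, under my own assumptions: the `size_flags` helper, the county names, and the voter counts are all hypothetical, and ties are broken by sort order because the text does not say how they were handled. The article uses n = 657; the toy example uses n = 2.

```python
def size_flags(voters_by_county, n):
    """Return two sets: the n counties with the most voters and the n with the fewest."""
    ranked = sorted(voters_by_county, key=voters_by_county.get)  # ascending
    return set(ranked[-n:]), set(ranked[:n])

# Hypothetical 2008 voter counts for six made-up counties.
voters_2008 = {
    "A": 1200,
    "B": 450000,
    "C": 30000,
    "D": 800,
    "E": 95000,
    "F": 5000,
}

largest, smallest = size_flags(voters_2008, 2)

# Turn the sets into 0/1 columns that could be added to a regression table.
flag_rows = [
    {
        "county": name,
        "is_largest": int(name in largest),
        "is_smallest": int(name in smallest),
    }
    for name in voters_2008
]

for row in flag_rows:
    print(row)
```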

To further address this, I ran an additional model which also includes a field for the population per square mile. This model produces the same results: a Dominion Effect of 1.55% and p-values of 0.00% and 0.09%.

The age of distributed computers and internet connectivity results in everyone from time to time receiving phishing emails. Just opening the link can get malware installed on your notebook, and can even generate a ransom demand from those who kidnapped your device. The same kind of criminals working 24/7 to steal from you are suspected of using their methods to steal the Office of the President of the US.
